News

Prof. Tian is awarded the BU ECE Outstanding Teaching Award

11th PhD from Tian Lab: Qianwan Yang

Title: Advancing Computational Multi-Aperture Fluorescence Microscopy via Model- and Learning-Based Techniques

Time: Tuesday, December 2, 2025, 10:00 a.m. – 12:00 p.m.

Advisor: Professor Lei Tian

Committee: Professor Vivek Goyal, Professor Jerome Mertz, Professor Janusz Konrad, Professor Lei Tian

Google Scholar:

https://scholar.google.com/citations?user=R9vLRnYAAAAJ&hl=en

Abstract

Traditional fluorescence microscopy faces inherent trade-offs among resolution, field of view (FOV), and system complexity. The Computational Miniature Mesoscope (CM2) addresses this by placing a microlens array (MLA) before a single CMOS sensor: each microlens captures a sub-FOV, and intentional overlap maximizes usable FOV, encoding extended high-resolution scenes on a compact device. This optical simplicity shifts complexity to computation: the periodic MLA induces ill-conditioned, multiplexed measurements, and the system exhibits highly non-local, spatially varying (SV) PSFs. This dissertation explicitly models that SV process and solves the associated inverse problem, enabling uniform, wide-FOV reconstructions from miniature hardware.

First, I establish a low-rank linear shift-variant (LSV) forward model. Sparse 3D PSFs are factorized via truncated singular value decomposition (TSVD) to yield a compact basis, and the corresponding coefficient maps are smoothly interpolated to capture field-dependent variation. Based on this forward model, I pose the inverse problem as a regularized least-squares objective and develop a model-based LSV ADMM solver that improves peripheral fidelity and overall reconstruction quality compared with a linear shift-invariant (LSI) ADMM solver. The LSV ADMM solver serves as the reconstruction baseline, while the LSV forward model provides a high-fidelity simulator to generate diverse measurements for training and benchmarking learning-based methods. Building on this foundation, I introduce CM2Net, a multi-stage deep learning framework that combines view demixing, view synthesis, and refocusing-enhancement to perform single-shot 3D particle localization. Trained purely on simulated measurements, CM2Net generalizes to experiments, achieving 27 mm FOV over 0.8 mm depth with 26 μm lateral and 25 μm axial resolution, while delivering 1400× faster runtime and 19× lower peak memory than the iterative baseline. To overcome the locality bias and implicit shift-invariance of conventional CNNs and to generalize reconstruction to extended biological structures, I propose SV-FourierNet, which performs global, low-rank deconvolution in the Fourier domain using learned filters that approximate the inverse of the LSV forward model. SV-FourierNet attains uniform 7.8 μm resolution across a 6.5 mm FOV and shows interpretable agreement between its effective receptive fields and the physical PSFs. Finally, I introduce SV-CoDe, a scalable SV coordinate-conditioned deconvolution framework. By modulating compact convolutional features with coordinate-driven coefficients predicted by a lightweight MLP, SV-CoDe improves peripheral fidelity and decouples parameter and memory costs from FOV size, enabling SV reconstruction with scalable computation.
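The low-rank forward model described in this abstract can be illustrated with a minimal NumPy sketch. It is not the dissertation's implementation: it assumes 2D PSFs instead of 3D, calibration points along a single axis, linear interpolation of the coefficients, and circular FFT convolution. The function names `tsvd_psf_basis`, `interpolate_weights`, and `lsv_forward` are hypothetical.

```python
import numpy as np

def tsvd_psf_basis(psfs, rank):
    """Factor K calibrated PSFs of shape (K, H, W) with a truncated SVD.

    Returns `rank` basis PSFs and the (K, rank) coefficients of each
    calibrated PSF in that basis.
    """
    K, H, W = psfs.shape
    U, s, Vt = np.linalg.svd(psfs.reshape(K, H * W), full_matrices=False)
    basis = Vt[:rank].reshape(rank, H, W)
    coeffs = U[:, :rank] * s[:rank]
    return basis, coeffs

def interpolate_weights(coeffs, calib_x, shape):
    """Interpolate coefficients linearly along x into per-pixel weight maps.

    Assumption for this sketch: the PSF varies only with the x coordinate,
    and `calib_x` (increasing) holds the x positions of the calibrated PSFs.
    """
    H, W = shape
    xs = np.arange(W)
    maps = [np.tile(np.interp(xs, calib_x, coeffs[:, r]), (H, 1))
            for r in range(coeffs.shape[1])]
    return np.stack(maps)

def lsv_forward(obj, basis, weight_maps):
    """Shift-variant blur: sum over r of conv(weight_maps[r] * obj, basis[r]).

    Basis PSFs are assumed centered at (H//2, W//2) and the same size as
    `obj`; the convolution is circular (FFT-based).
    """
    out = np.zeros(obj.shape, dtype=float)
    for h, w in zip(basis, weight_maps):
        H_f = np.fft.fft2(np.fft.ifftshift(h))
        out += np.real(np.fft.ifft2(np.fft.fft2(w * obj) * H_f))
    return out

# Toy calibration set: Gaussian PSFs whose width grows across the field.
H = W = 32
yy, xx = np.mgrid[:H, :W]
psfs = np.stack([np.exp(-((yy - H // 2) ** 2 + (xx - W // 2) ** 2) / (2 * s ** 2))
                 for s in (1.0, 1.5, 2.0, 2.5)])
psfs /= psfs.sum(axis=(1, 2), keepdims=True)

basis, coeffs = tsvd_psf_basis(psfs, rank=2)
weights = interpolate_weights(coeffs, np.array([4, 12, 20, 28]), (H, W))
measurement = lsv_forward(np.random.default_rng(0).random((H, W)), basis, weights)
```

In this form the cost of the forward model scales with the number of retained basis PSFs, not with the number of field positions, which is the usual motivation for truncating the SVD.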

Together, the calibrated low-rank LSV model with an LSV ADMM baseline, CM2Net, SV-FourierNet, and SV-CoDe unify physics and learning to resolve view multiplexing and spatially varying blur, enabling uniform, wide-FOV, high-throughput fluorescence imaging on compact hardware.

10th PhD from Tian Lab: Jeffrey Alido

Congratulations, Jeffrey!

Title: Deep Learning Approaches For Imaging Inverse Problems With Structured Noise

Presenter: Jeffrey Alido

Date: Friday, June 27, 2025

Time: 1:30 pm - 2:30 pm

Location: 8 St. Mary's St. PHO 428

Advisor: Professor Lei Tian

Chair: Professor Tianyu Wang

Committee: Professor Lei Tian, Professor Vivek Goyal, Professor Eshed Ohn-Bar, Professor Kayhan Batmanghelich, Professor Yu Sun (ECE, Johns Hopkins University)

Google Scholar Link: https://scholar.google.com/citations?user=zoI7oukAAAAJ&hl=en

Abstract:

Structured and spatially correlated noise presents a major challenge in scientific and biomedical imaging, where idealized assumptions of additive white Gaussian noise often break down. This dissertation addresses this challenge through two frameworks based on deep learning for solving inverse problems with structured noise: a simulation-based supervised learning approach for low signal-to-background ratio (SBR) fluorescence imaging, and a novel generative modeling framework based on Whitened Score (WS) diffusion models for general imaging inverse problems with correlated Gaussian noise.

The first part of this work introduces SBR-Net, a deep neural network trained on synthetic data generated by a structured background noise simulator that models light scattering and structured fluorescent background in thick biological tissue. This approach enables single-shot 3D volumetric reconstruction from light-field microscopy measurements with extremely low SBR. By explicitly modeling structured background noise and simulating realistic measurement–ground truth pairs, SBR-Net learns a direct inverse mapping. The framework is evaluated on synthetic and experimental data, with analysis of generalization behavior under real-world noise mismatch.

The second part introduces Whitened Score (WS) diffusion models, a new class of generative priors tailored to inverse problems with structured noise. Conventional score-based diffusion models, trained on isotropic Gaussian noise, lack inductive biases suitable for real-world noise distributions encountered in applications such as diffraction tomography, interferometry, and wide-field microscopy. WS models reformulate the denoising objective by learning a whitened score function, thus avoiding covariance inversion and enabling training under arbitrary Gaussian forward processes. This formulation allows WS models to serve as strong Bayesian priors, denoising structured noise and consistently outperforming conventional diffusion models across a range of computational imaging tasks.

These contributions highlight the importance of aligning priors with real-world data and incorporating physical models and domain-specific noise characteristics to address inverse problems in realistic imaging settings. By combining simulation, supervised learning, and generative modeling, this work offers robust, interpretable solutions under structured and spatially correlated noise conditions.

9th PhD from Tian Lab: Jiabei Zhu

Congratulations to Dr. Jiabei Zhu.

I am particularly proud that the method developed during the final stage of Jiabei’s thesis has already demonstrated real-world impact through its application in his current company’s industrial projects. It is rare to produce work that holds both academic significance and immediate practical value.

I aspire for our research group to pursue work that is not only intellectually compelling but also genuinely useful, beyond the pursuit of glamorous publications.

ECE PhD Dissertation Defense: Jiabei Zhu

Wednesday, April 23, 2025

12:30 p.m. – 2:00 p.m.

Location: 8 St. Mary’s Street (PHO), Room 339

Dissertation Title:

“Advancing Intensity Diffraction Tomography with Multiple Scattering Models in Transmission and Reflection Systems”

Advisor: Professor Lei Tian

Chair: Professor Ji-Xin Cheng

Committee:

- Professor Lei Tian
- Professor Luca Dal Negro
- Professor Michelle Sander
- Professor Ulugbek Kamilov

Abstract

Intensity Diffraction Tomography (IDT) enables label-free, 3D quantitative phase imaging using simple hardware like a standard microscope with programmable LED illumination. While cost-effective and stable, conventional IDT relies on simplified models that limit its accuracy for complex, multiple-scattering samples common in biology and materials science. This dissertation focuses on developing robust computational methods to overcome these limitations and expand IDT’s applicability.

Key contributions include:

- The development and implementation of an efficient non-paraxial multiple-scattering forward model capable of accurately simulating light propagation in thick, strongly scattering samples under high-NA illumination.
- Advanced reconstruction algorithms, incorporating both model-based optimization and deep learning strategies, to solve the challenging inverse scattering problem, yielding improved 3D refractive index maps.
- The introduction and validation of reflection-mode IDT for imaging samples on reflective surfaces, extending the technique to industrial inspection and metrology. This involved adapting the multiple-scattering framework for reflection geometries, creating specialized reconstruction and calibration techniques, and demonstrating high-resolution volumetric imaging of complex multi-layer structures.

These advancements significantly enhance IDT’s ability to quantitatively image complex samples in both transmission and reflection modes, broadening its impact across biomedical research and industrial applications while preserving its inherent simplicity and stability.

Boston University Provost’s Scholar-Teacher of the Year Award

Prof. Lei Tian is the recipient of the Boston University Provost’s Scholar-Teacher of the Year Award 2025.

8th PhD from Tian lab: Chang Liu

Date: Wednesday, April 9

Time: 10:30 am

Location: 8 St. Mary’s Street, Room 339 (PHO 339)

Title: “Pushing the Limits of SNR and Resolution for In Vivo Neural Imaging via Self-supervised Learning”

BME PhD Dissertation Defense: April 9, 2025, Chang Liu

Advisory Committee:

Lei Tian, PhD – ECE, BME (Advisor)

Jerome C. Mertz, PhD – BME, ECE, Physics (Chair)

Jerry L. Chen, PhD – Biology, BME

Michael N. Economo, PhD – BME

Kayhan Batmanghelich, PhD – ECE

Abstract:

Imaging techniques capable of monitoring large populations of neurons at behaviorally relevant timescales are critical to understanding the brain and nervous system. However, shot noise fundamentally limits the signal-to-noise ratio (SNR) and the optical resolution of in vivo neural imaging, necessitating advanced denoising methods to recover fast neuronal activity from noisy measurements in both spatial and temporal domains. A particular challenge for in vivo neuronal activity denoising is the lack of “ground-truth” high-SNR measurements, which makes traditional supervised deep learning inapplicable. In this dissertation, I present two generations of self-supervised deep learning frameworks for two-photon voltage imaging denoising (DeepVID) in low-photon regimes, which model noise distributions directly from the data, enabling effective denoising without reliance on ground-truth high-SNR images. The first framework, DeepVID, is designed to infer the underlying fluorescence signal based on the temporal and spatial statistics of raw measurements. Through qualitative and quantitative analyses, I demonstrate its superior denoising capabilities in both spatial and temporal domains with improved single-pixel SNR and enhanced spike detection. To address the limitation in balancing spatial and temporal denoising performance, I develop the second framework, DeepVID v2, which achieves decoupled spatiotemporal enhancement tailored for low-photon voltage imaging. By integrating an additional spatial prior extraction branch into the DeepVID architecture and incorporating two adjustable parameters, DeepVID v2 effectively addresses the inherent tradeoff between spatial and temporal performance, enhancing its denoising capabilities for resolving both fine spatial neuronal structures and rapid temporal dynamics. I further demonstrate the robustness of DeepVID v2 across a range of imaging conditions, including varying SNR levels and extreme low-photon scenarios. These results underscore its potential as a powerful tool for denoising in vivo neural imaging data and advancing the study of neuronal activity within the brain.

Elected Optica Fellow

Prof. Lei Tian has been elected an Optica Fellow "For contributions to computational microscopy, including differential phase contrast microscopy, Fourier ptychography, optical diffraction tomography, and imaging in scattering".

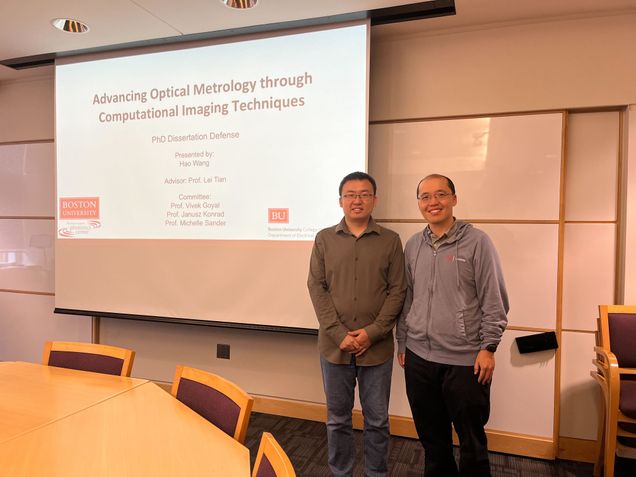

7th PhD of Tian lab: Hao Wang. Congratulations!

Wednesday, September 25, 2024

11:00 a.m. - 1:00 p.m.

Advancing Optical Metrology Through Computational Imaging Techniques

Committee: Professor Lei Tian, Professor Vivek Goyal, Professor Janusz Konrad, Professor Michelle Sander

Abstract

Optical metrology, which uses light to precisely measure and characterize objects, is essential in industrial manufacturing, scientific research, and engineering design. Computational optical imaging techniques have leveraged novel optical systems, advanced algorithms, and increased computing power to accurately reconstruct and analyze the physical properties of objects, surpassing the capabilities of traditional optical metrology methods. This thesis explores the advancement of optical metrology through computational imaging techniques, focusing on the following three primary research areas.

The first study focuses on semiconductor chip surface topography measurement, which plays a critical role in various semiconductor industrial applications. Conventional optical metrology techniques often face challenges in balancing field of view (FOV) and spatial resolution. To overcome these limitations, I propose a novel technique termed Fourier Ptychographic Topography (FPT). FPT provides a wide FOV, high resolution, and nanoscale height reconstruction accuracy. Experimental validation on a sample semiconductor chip also demonstrates the robustness and superior performance of FPT compared to current standard optical profilometry methods.

In the second study, I extend my research from surface metrology to large-scale 3D particle detection from a single hologram. Traditional 3D particle localization methods often rely on single-scattering models, which are limited in their ability to accurately capture dense particle distributions at deep imaging depths. To address this limitation, I use a multiple-scattering beam propagation method (BPM) to model the multiple-scattering effects of 3D particle fields. I achieve robust detection of dense 3D particles, and experimental results demonstrate that my approach provides significantly higher accuracy than conventional single-scattering models, particularly in scenarios with high particle densities and deep imaging depths.
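For readers unfamiliar with the beam propagation method mentioned above, a generic multi-slice version can be sketched as follows. This is a textbook-style illustration, not the thesis code: the function names, the sampling parameters, and the choice to discard evanescent components are assumptions made for this sketch. The field alternates between free-space diffraction over one slice thickness and a phase delay from the refractive-index contrast of that slice.

```python
import numpy as np

def angular_spectrum_kernel(shape, dx, dz, wavelength, n_medium):
    """Transfer function for propagating a field by dz in a uniform medium."""
    fy = np.fft.fftfreq(shape[0], d=dx)
    fx = np.fft.fftfreq(shape[1], d=dx)
    FX, FY = np.meshgrid(fx, fy)
    arg = (n_medium / wavelength) ** 2 - FX ** 2 - FY ** 2
    kz = 2 * np.pi * np.sqrt(np.maximum(arg, 0.0))
    # Evanescent components (arg <= 0) are dropped in this sketch.
    return np.where(arg > 0, np.exp(1j * kz * dz), 0)

def bpm(field, delta_n, dx, dz, wavelength, n_medium):
    """Propagate `field` through a volume of index contrast delta_n (Nz, H, W)."""
    P = angular_spectrum_kernel(field.shape, dx, dz, wavelength, n_medium)
    k0 = 2 * np.pi / wavelength
    for slice_dn in delta_n:
        field = np.fft.ifft2(np.fft.fft2(field) * P)      # diffract over dz
        field = field * np.exp(1j * k0 * slice_dn * dz)   # phase delay of the slice
    return field

# Toy example: a plane wave through a volume containing one small particle.
volume = np.zeros((8, 64, 64))
volume[4, 30:34, 30:34] = 0.05
exit_field = bpm(np.ones((64, 64), dtype=complex), volume,
                 dx=0.2, dz=0.5, wavelength=0.5, n_medium=1.33)
hologram = np.abs(exit_field) ** 2  # intensity recorded by the sensor
```

Because each slice acts on the field already perturbed by earlier slices, the model accounts for forward multiple scattering that a single-scattering model ignores.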

Finally, I explore the application of deep learning methods for phase retrieval, a fundamental problem in computational imaging-based optical metrology. My previous research utilized physical models to simulate the optical imaging process. While these traditional model-based methods achieved state-of-the-art results in many applications, the development of deep learning methods introduces new possibilities for solving highly ill-posed problems that were previously unsolvable. In my third study, I introduce the Neural Phase Retrieval (NeuPh) network, which enables flexible object representations and resolution-enhanced phase reconstruction from multiplexed Fourier ptychographic microscopy (FPM). Experimental results demonstrate the NeuPh network's scalability, robustness, accuracy, and generalizability in solving the highly ill-posed phase retrieval problem, outperforming existing methods and offering potential wide applications in optical metrology techniques.

Through these investigations, this thesis advances optical metrology by integrating computational imaging techniques, offering improved measurement accuracy, scalability, and robustness and paving the way for enhanced optical metrology methodologies in various applications.

6th PhD of Tian lab: Joseph Greene!

Computational Extended Depth of Field Fluorescence Microscopy in Miniaturized and Tabletop Platforms

Advisor: Professor Lei Tian

Committee: Professor Jerome Mertz, Professor Janusz Konrad, Professor Abdoulaye Ndao, Professor Xiaojun Cheng, Professor Tianyu Wang

Thursday, June 20, 2024

12:00 p.m. - 2:00 p.m.

Abstract

Fluorescence microscopy has emerged as a powerful solution to enable the direct study of biological compounds by labelling key structures and dynamics with optically responsive fluorophores to push fields ranging from medicine to biology to neuroscience. Due to their low cost, flexible architecture, and widefield imaging capabilities, one-photon epi-fluorescence microscopes have emerged as a standard platform for interrogating samples in real time in both miniaturized and tabletop applications. However, these systems are plagued by the low power of fluorescence samples, a lack of optical sectioning, susceptibility to scattering, and a shallow optical depth of field (DoF). As a result, collected signals exhibit low signal-to-noise and signal-to-background ratios and high aberration, and emerge from a constrained volume near the surface of the sample. To overcome these challenges, this thesis introduces several flexible and generalizable computational imaging frameworks that co-optimize custom optics and algorithms to encode target signals over a significantly enlarged extended depth of field (EDoF) and then computationally extract those signals from the high perturbations. This design paradigm fundamentally relies on concepts of pupil engineering, which uses a Fourier optics description of light propagation to design custom flat phase masks on the often-vacant pupil plane of a standard microscope to perform optical encoding.

This thesis begins by introducing a novel miniaturized EDoF-enabled miniscope, entitled EDoF-Miniscope, to motivate the utility of EDoF fluorescence imaging in a miniaturized form factor and in the complex environment of the brain. This project utilizes an innovative genetic algorithm to optimize a miniaturized and lightweight binary diffractive optical element (DOE) on the pupil plane to extend the DoF by over 2.8× using twin imaging foci. This project represents the first successful integration of diffractive optics into a miniscope to enable unprecedented control of the optical field inside the brain. To keep EDoF-Miniscope broadly accessible, I then utilize a simple off-the-shelf post-processing filter, which enables the recovery of neuronal sources down to an SBR of 1.08. Next, I seek to improve upon the proposed framework in several capacities by designing a flexible 1-photon widefield tabletop testbed, entitled EDoF-Tabletop, that exhibits field of view (FoV), NA, and aberrations comparable to those of a miniscope. This platform utilizes a spatial light modulator (SLM) on the pupil plane to rapidly deploy optimized phases without the need to manufacture and align miniaturized optics. To improve both the optimization of novel pupil phases and the reconstruction algorithm, EDoF-Tabletop incorporates a novel end-to-end deep learning pipeline. Innovative physical modeling, optimization, and initialization strategies are employed to make the framework computationally efficient and stable, and to make it converge reliably to the desired EDoF without the need for explicit initialization. By incorporating rigorous physical modeling, this thesis is able to perform cutting-edge encoding and recovery of sources up to 140 microns (1.4 scattering lengths) deep in scattering media or over 400 microns deep in non-scattering samples without sacrificing NA (NA = 0.5), speed, or form factor.

Combining a flexible optimization pipeline with a co-optimized reconstruction network and a highly accurate physical simulator, the EDoF pipeline presented in this thesis offers a generalized solution for advancing fluorescence microscopy. By demonstrating this technology across miniaturized and tabletop platforms as well as across a broad range of fluorescence and biological samples, I believe that this framework will continue to push the practicality of 1-photon fluorescence imaging across a wide range of applications.

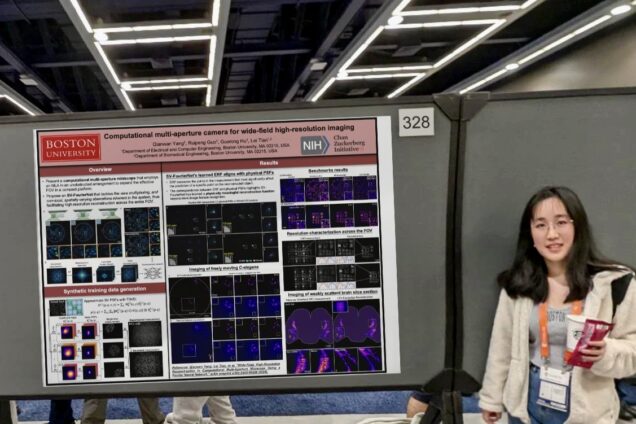

Jeffrey and Qianwan at the CVPR CCD workshop

| Title | Presenter |
| --- | --- |
| Computational multi-aperture camera for wide-field high-resolution imaging | Qianwan Yang |
| Behind the Blurry Background: Practical Synthetic Features To Enable Robust Imaging Through Scattering | Jeffrey Alido |