Category: Article

Fear and Why We Enjoy It

Fear. It’s something all humans share in their arsenal of emotions and reactions. It’s a survival mechanism that we’ve evolved to have, and it has kept our species alive for close to 200 millennia. Nowadays it’s a feeling that we both dread and revel in, especially during the spooky Halloween season. But why do we enjoy being scared every fall?

From horror movies to haunted houses, many of us enjoy these activities, especially with groups of friends. Fear has gone from something necessary for survival to something practically required for social acceptance. According to Kansas State University’s Don Saucier, Halloween is so culturally embedded in our society, and fear is so interwoven into the very fabric of the holiday, that fear is practically unavoidable. At this point in history, because we aren’t really fighting for our survival, participating in fear-inducing activities is more than socially acceptable; it’s almost necessary, especially because we know, at least subconsciously, that we aren’t in any serious danger.

So what happens to your body when you experience fear? Your sweating, brain activity, breathing, heart rate, and blood pressure (among other things) all change temporarily, both during the emotion and for a while afterward. Your amygdala sets off a chain of signals that releases stress hormones such as adrenaline, which prepare you either to confront your fear or to run away. Fear sweat even smells different from regular sweat, and some research suggests it could enhance alertness. Your heart rate and blood pressure rise, pumping more blood to your muscles and lungs in case your body decides to run. Your breathing quickens as well, bringing more oxygen into your blood. Reading all of this, you might wonder why in the world we would enjoy these reactions. Well, it really depends on the person. Those who seek out horror and fear probably have an adrenaline-seeking personality, and what better way to get an adrenaline rush than by watching a horror movie or getting frightened at a haunted house? Afterward, the relief response intensifies positive emotions as feel-good chemicals (like endorphins) are released in the brain.

To some extent, fear becomes a pleasurable kind of arousal when the person feeling it knows they are out of danger: sweaty, hand-shaking fun. So the next time you enter a haunted house or watch a horror movie, remember that this survival mechanism is no longer just a means of staying safe; it’s also a way to create excitement and fun with friends.

~Cindy Wu

Sources:

http://neurosciencenews.com/fear-psychology-social-standing-2956/

http://www.livescience.com/56691-the-science-of-fear.html

http://www.livescience.com/56682-the-anatomy-of-fear-infographic.html

http://online.csp.edu/blog/psychology/psychology-of-fear

Image Source:

https://www.maynoothuniversity.ie/sites/default/files/styles/ratio_2_3/public/assets/images/FEAR.jpg?itok=vBWci5ug

The Neuroscience Involving Habits

A habit is a behavior that becomes automatic after regular repetition. The prevailing view used to be that habits form to free up the brain for other tasks, and to an extent that is still true. However, a recent study by MIT neuroscientists has found that a small region of the prefrontal cortex, where most thought and planning occur, is devoted to controlling habits, deciding which habits are switched on at a given time. The study also shows that although habits may become deeply ingrained, the brain’s planning centers can shut them off.

To simulate ingrained habits, the MIT team trained rats to run a T-shaped maze. As the rats approached the turning point, they heard a tone indicating whether to turn left or right; when they followed the cue, they were rewarded with chocolate milk on the left or sugar water on the right. To show that the rats had developed a fully ingrained habit, the researchers stopped giving them any rewards, and the rats continued to run the maze correctly. The scientists also offered the rats chocolate milk mixed with lithium chloride, which causes mild nausea; the rats stopped drinking the chocolate milk yet still turned left when cued.

Next, the researchers tested whether they could break the rats’ habit of running left by interfering with activity in the infralimbic (IL) cortex, a part of the prefrontal cortex. Although the neural pathways that encode habits are located in the basal ganglia (a set of deep brain structures involved in movement coordination, cognition, and reward-based learning), the IL cortex has been shown to be necessary for such behaviors to develop. Using optogenetics, a technique that lets researchers inhibit specific cells with light, the team turned off IL activity as the rats approached the turning point. As a result, the rats turned right (where the reward was now located) and later formed a new habit of turning right even when cued to go left. When the researchers inhibited IL activity again, the rats regained their original habit of turning left when cued to do so.

From these results, the researchers concluded that the IL cortex determines which habits are expressed and that it favors new habits over old ones; when a habit is replaced, it is broken but not forgotten. The results offer hope for people with disorders involving overly habitual behavior, such as obsessive-compulsive disorder. Although optogenetic interventions would be too invasive to use for breaking habits in humans, the technology could eventually evolve to the point where it becomes a feasible treatment option.

~ Nathaniel Meshberg

Sources:

Understanding How Brains Control our Habits

Neuroscience and the Greatest Minds

The Great Pyramid of Giza is one of “the Seven Wonders of the Ancient World,” and building it required the ancient Egyptians to apply complex knowledge of mathematics and architecture to construct such a landmark from scratch. Some onlookers find this so hard to believe that they credit its construction, for unknown purposes, to some force greater than an ancient civilization. However, what we understand about the human mind tells us that Egyptian scholars and builders were certainly smart and strong enough to build such an awe-inspiring necropolis.

One of the key requirements for staying active, smart, and diligent is simply staying healthy, and one of the most important ways to stay healthy is through physical fitness. Cardiovascular exercise is a high priority because, for most of our evolutionary history, our ancestors relied on their legs to migrate, and walking and running were what kept the body going. Our brains are a bit like computers: they need gratifying activities to keep stress levels in check and to stay stimulated, which may lengthen life span and improve mental and physical performance. High stress levels, in fact, do considerable harm to the brain and body, impairing mental performance and the immune system.

One difference between a university student and an ancient Egyptian scholar is likely how much each sits versus walks during leisure hours. Few would deny that changes in technology have greatly increased how much time we spend sitting at our computers instead of playing sports outside for fun. Sitting, in fact, does real harm to our health, making us more prone to laziness and obesity, which suggests that the ancient Egyptian scholars might have had greater capabilities than we do if they lived in our time. Those scholars most likely had more fun playing sports that made them break a sweat, since there were no monitors to drain their mental and physical health. But if we fought our tendency to be lazy and exercised as much as people in ancient times, we might well become valedictorians at top-tier schools, earn six-figure salaries in great careers, achieve great things, and live much longer without chronic diseases.

~ Dong Jun Yoo

Works Cited

"Are You Sitting Too Much?" YouTube. YouTube, n.d. Web. 17 Nov. 2015.

Medina, John. Brain Rules: 12 Principles for Surviving and Thriving at Work, Home, and School. Seattle, WA: Pear Press, 2008. Print.

"The Science of Laziness." YouTube. YouTube, n.d. Web. 17 Nov. 2015.

"The Upside of Isolated Civilizations - Jason Shipinski." TED-Ed. N.p., n.d. Web. 17 Nov. 2015.

How Dreams Are Shown Through Brain Activity

For a long time, sleep and dreams were a complete mystery. At best, scientists such as Sigmund Freud could make educated guesses about how and why we dream; Freud claimed that dreams were a “safety valve” for unconscious desires. No concrete theory of sleep could be developed because scientists lacked a way to actually observe the brain at work. In the 1950s, however, scientists made a breakthrough in the study of sleep when they discovered its various stages.

In 1953, researchers used electroencephalography (EEG) to measure human brain waves during sleep, along with movements of the limbs and eyes. These experiments showed that brain activity both rises and falls over the course of sleep. During the first hour of sleep, brain waves slow down and the eyes and muscles relax; heart rate, blood pressure, and temperature fall as well. Over the next half hour, however, brain activity increases dramatically as sleep shifts from slow-wave sleep to rapid eye movement (REM) sleep, whose brain waves resemble those observed during waking. Unlike waking, however, REM sleep brings atonia, a paralysis of the body’s muscles; only the muscles that control breathing and eye movements remain fully active, while heart rate, blood pressure, and body temperature increase. As sleep continues, the brain alternates between periods of slow-wave sleep (divided into four stages, each deeper than the last, with progressively slower brain waves) and brief periods of REM sleep, with slow-wave sleep becoming less deep and REM periods more prolonged until waking occurs. Approximately 20 percent of our total sleep is spent in REM sleep.
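As a rough worked example of that last figure, assume a hypothetical 8-hour night (the article itself does not give a sleep duration). Twenty percent of that total comes to about an hour and a half of REM sleep:

```latex
\[
0.20 \times (8 \times 60\ \text{min}) = 0.20 \times 480\ \text{min} = 96\ \text{min}
\]
```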

Dreams can occur during slow-wave sleep, but people awakened during its stages will most likely recall only fragmented thoughts. Most active dreaming occurs instead during REM sleep, when the brain is most active. During REM sleep, signals from the pons travel to the thalamus, which relays them to the cerebral cortex, the outer layer of the brain, stimulating the regions responsible for learning, thinking, and organizing information (the pons also sends signals that shut off neurons in the spinal cord, causing atonia). Because the cortex is the part of the brain that interprets and organizes information from the environment during consciousness, some scientists believe dreams are the cerebral cortex's attempt to “find meaning in the random signals that it receives during REM sleep.” In other words, the cortex may be trying to interpret these random signals, “creating a story out of fragmented brain activity.”

~ Nathaniel Meshberg

Sources:

http://www.ninds.nih.gov/disorders/brain_basics/understanding_sleep.htm#dreaming

http://www.brainfacts.org/sensing-thinking-behaving/sleep/articles/2012/brain-activity-during-sleep

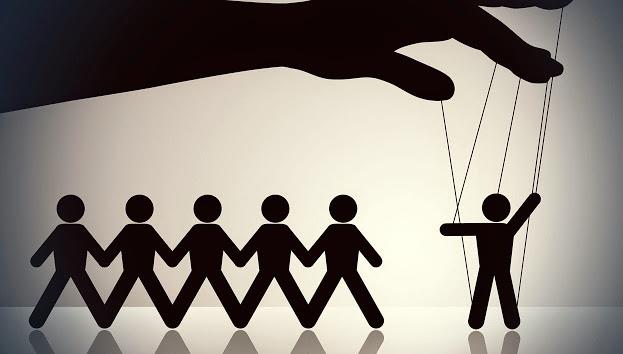

Conformity

Conformity is determined by a variety of situational and individual difference factors. Situationally, conformity tends to increase when subjects are in groups that have previously been successful, when they must give public responses in the face of opposition, when the stimuli are ambiguous or difficult, or when group members are interdependent or unanimously opposed to the subject. Among individual difference factors, conformity tends to increase with anything that decreases subjects’ certainty in their own response or increases the perceived correctness of the group (Back & Davis, 1965).

Conformity can be classified by whether a person’s behavior reflects real acceptance of the norm or whether the person is just going along with the group (Mezzacappa, 1995). The Asch conformity experiments of 1956 studied how participants were influenced when their judgment of unambiguous perceptual stimuli put them in the minority against a unanimous group. In the line judgment task, participants were shown a standard line and several comparison lines and, after hearing the other group members’ responses, were asked to say aloud the letter of the comparison line that matched the standard. Only about 25% of participants gave the correct answer on every trial despite hearing the others’ erroneous answers. Participants appeared to conform for one of two reasons: informational influence, the assumption that the majority must be correct and the individual in the minority must be wrong, or normative influence, conforming to the crowd to avoid looking foolish. Personality characteristics related to deviating from norms are also associated with conformity. Maslach (1974) and Maslach, Stapp, and Santee (1987) studied the trait of individuation and found that it is negatively correlated with conformity. Conformity is also negatively correlated with intellectual achievement, self-perceptiveness, and security in social status. However, situational factors have a greater influence on conformity, because individual difference factors tend to stay relatively constant across situations.

~ Sophia Hon

Citations:

Back, K. W., & Davis, K. E. (1965). Some personal and situational factors relevant to the consistency and prediction of conforming behavior. Sociometry, 28(3), 227-240.

Mezzacappa, E. S. (1995). Group cohesiveness, deviation, stress, and conformity. Dissertation Abstracts International: Section B: The Sciences and Engineering, 55(11), 5126.

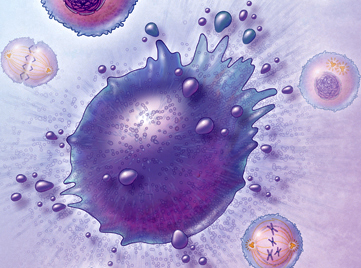

The Paradox of Cell Death

“Apoptosis” is a Greek word that literally means “falling of the petals from a flower or leaves from a tree” [1]. This description contrasts with the typical textbook definition: apoptosis is programmed cell death, promoted by proteins such as Diablo. The literal translation stresses the evolutionary significance and benefit of apoptosis. While apoptosis can be detrimental, it is also important to understand why nature would select for such a seemingly negative process, which in fact has its benefits.

Apoptosis is important for maintaining a balance between cell proliferation and cell death. A family of proteases called caspases carries out apoptotic cell death. Initiator caspases activate executioner caspases, which in turn activate more executioner caspases. Proteins of the Bcl2 family can either promote or inhibit caspase activation. Specifically, Bax and Bak facilitate cell death by causing cytochrome c to be released from mitochondria, which leads to the assembly of the apoptosome. Cells can also die by necrosis, in which the plasma membrane ruptures and the contents of the dying cell spill out. This differs from apoptosis, in which phagocytes typically recognize the dying cell and engulf it along with its contents, preventing damage to the surrounding cells [2].

A protein relevant to apoptosis is the transcription regulator p53, which stimulates transcription of the gene encoding p21, a Cdk inhibitor protein. In response to DNA damage in the G1 phase of the cell cycle, p53 levels increase, and the resulting p21 binds to G1/S-Cdk to stop the cell from entering S phase. When DNA damage cannot be repaired, p53 can trigger apoptosis, because it is better for the organism that the cell not proceed through the cycle with damaged DNA. A nonfunctional p53 has serious consequences because it allows damaged DNA to be replicated, leading to mutations and the generation of cancerous cells [2]. I find it astonishing that a problem with a single protein, in this case p53, can lead to life-threatening diseases like cancer.
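The checkpoint logic described above can be summarized as a small decision function. This Python sketch is a deliberate simplification (real cells integrate many more signals than three yes/no inputs), and the function and outcome names are hypothetical labels chosen for illustration, not terms from the sources.

```python
from enum import Enum


class Outcome(Enum):
    PROCEED_TO_S = "proceed to S phase"
    ARREST_AND_REPAIR = "arrest in G1 (p21 inhibits G1/S-Cdk) while DNA is repaired"
    APOPTOSIS = "apoptosis"
    REPLICATE_DAMAGED_DNA = "replicate damaged DNA (risk of mutation)"


def g1_checkpoint(dna_damaged: bool, repairable: bool, p53_functional: bool) -> Outcome:
    """Toy decision model of the p53-dependent G1 checkpoint (hypothetical simplification)."""
    if not dna_damaged:
        return Outcome.PROCEED_TO_S
    if not p53_functional:
        # Without working p53, no p21 is made, so nothing halts G1/S-Cdk
        # and the damaged DNA is copied in S phase.
        return Outcome.REPLICATE_DAMAGED_DNA
    if repairable:
        # p53 rises, p21 binds G1/S-Cdk, and the cell pauses in G1 for repair.
        return Outcome.ARREST_AND_REPAIR
    # Damage that cannot be repaired: p53 pushes the cell into apoptosis.
    return Outcome.APOPTOSIS


scenarios = [
    (False, True, True),   # healthy cell
    (True, True, True),    # repairable damage, working p53
    (True, False, True),   # irreparable damage, working p53
    (True, False, False),  # damage with nonfunctional p53
]
for damaged, repairable, p53_ok in scenarios:
    print(damaged, repairable, p53_ok, "->", g1_checkpoint(damaged, repairable, p53_ok).value)
```

The last scenario is the dangerous one the paragraph highlights: with p53 out of action, damaged DNA passes the checkpoint instead of triggering arrest or apoptosis.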

Furthermore, apoptosis plays an interesting role in Alzheimer’s disease (AD). AD is characterized by plaques of amyloid-β (Aβ), aggregates of a misfolded peptide cut from the amyloid precursor protein (APP). Aβ is generated when APP is cleaved by β-secretase (or BACE) in the amyloidogenic pathway. Current research shows that the death of neuronal and glial cells in the cerebral cortex and hippocampus is related to the cognitive decline characteristic of AD [3]. Only part of this neuronal cell death is caused by apoptosis; the rest is mediated by necrosis and other forms of cell death. Research implies that Bak and Bad, rather than Bax (all members of the Bcl2 family), are involved in this neuronal death [3], so a potential AD treatment could involve a method for inhibiting the expression of Bak and Bad.

Cancer can be caused by a lack of apoptosis in harmful cells, which allows them to proliferate and form tumors. Interestingly, the p53 gene is mutated in about half of all human cancers [2]. In tumors, scientists have typically observed that the main regulators of the cell cycle are altered, affecting aspects of proliferative control such as cell-cycle checkpoints and the cell’s response to DNA damage. A possible cancer treatment could therefore involve finding a way to induce tumor-selective cell death. The challenge with this approach is doing so without harming normal cell function or homeostasis [4]. If scientists could discover a molecule specific to tumor cells, or molecules that are regulated differently in tumor cells than in normal cells, this approach could prove beneficial.

~ Srijesa Khasnabish

Sources

[1] Zhivotovsky, B. (2002) From the Nematode and Mammals Back to the Pine Tree: On the Diversity and Evolution of Programmed Cell Death. Cell Death and Differentiation 9, 867-869. doi:10.1038/sj.cdd.4401084

[2] Alberts, B., et al. (2014) Essential Cell Biology. New York: Garland Science.

[3] Shimohama, S. (2000) Apoptosis in Alzheimer’s Disease: An Update. Apoptosis 5, 9-16.

[4] Kasibhatla, S., Tseng, B. (2003) Why Target Apoptosis in Cancer Treatment? Molecular Cancer Therapeutics 2, 573.

Free Will

Most people believe that they have complete control over every conscious decision they make. However, research shows that in some cases, the conscious decision comes after the onset of the neural activity that precedes a voluntary, self-initiated movement or task. In an experiment published in 1983, the recordable cerebral activity that precedes voluntary motor acts, known as the readiness potential, was compared with the time at which subjects reported an intention to act. Subjects watched a spot revolving around a clock face and were asked to recall its “clock position” at the moment they became aware of their intention to move. The time of this conscious decision was then compared with the actual time of movement, shown on an electromyogram (EMG) recorded from the muscle, and with the onset of the readiness potential, which was recorded simultaneously. The results indicated that neural activity consistently begins before the conscious intention to act (Libet et al., 1983).
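The comparison Libet’s team made can be sketched in a few lines of Python. This is a minimal illustration, not their analysis: the 2,560 ms rotation period is an assumed parameter, the function names are hypothetical, and the trial values below are invented for illustration rather than taken from the 1983 data. The idea is simply to convert the reported clock position into a time, then line it up against the EMG and readiness-potential times.

```python
"""Sketch of the timing comparison in a Libet-style clock experiment.

All times are in milliseconds, measured within a single rotation of the
clock spot. The rotation period and all example values are hypothetical.
"""


def clock_position_to_ms(position_deg: float, rotation_period_ms: float = 2560.0) -> float:
    """Convert the spot's reported angular position into a time within one rotation."""
    return (position_deg % 360.0) / 360.0 * rotation_period_ms


def compare_timings(rp_onset_ms: float, intention_ms: float, movement_ms: float) -> dict:
    """Return how long each event precedes a later one (assumes one rotation, no wraparound)."""
    return {
        "rp_before_movement": movement_ms - rp_onset_ms,
        "intention_before_movement": movement_ms - intention_ms,
        "rp_before_intention": intention_ms - rp_onset_ms,
    }


# Hypothetical trial: the subject reports the spot at 112.5 degrees when they
# became aware of the urge to move; the EMG (hypothetically) shows movement
# 1000 ms into the rotation, and the EEG readiness potential begins at 450 ms.
intention = clock_position_to_ms(112.5)
result = compare_timings(rp_onset_ms=450.0, intention_ms=intention, movement_ms=1000.0)
print(result)
# {'rp_before_movement': 550.0, 'intention_before_movement': 200.0, 'rp_before_intention': 350.0}
```

A positive `rp_before_intention` is the pattern the experiment reported: the brain’s preparation starts before the subject is aware of deciding.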

A more recent experiment investigated the neural activity involved in more complex, non-motor tasks. Subjects were asked to freely choose between adding and subtracting, while a continuous stream of stimulus frames was used to map the timing of their decisions and responses. As soon as subjects felt the urge to perform their chosen task, they memorized the letter on the current frame, performed either addition or subtraction on the numbers presented in the two following frames, and picked their answer on the fourth frame. Using fMRI, the researchers found that the free decision to add or subtract could be decoded from neural activity in the medial prefrontal and parietal cortex up to four seconds before subjects were consciously aware of making a decision. These choice-predictive signals also overlapped with those involved in performing motor acts, suggesting a common brain region responsible for different types of decision-making (Soon et al., 2013). So if the human brain can begin preparing for spontaneous, voluntary actions before a person is conscious of deciding to act, does free will really exist?

~ Sophia Hon

Sources:

http://brain.oxfordjournals.org/content/brain/106/3/623.full.pdf

https://dl.dropboxusercontent.com/u/1828618/PredictingAbstractIntentions.pdf

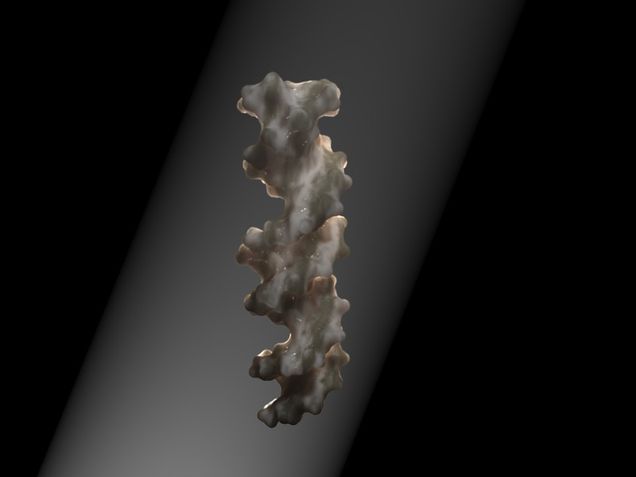

One Step Closer: New Alzheimer’s Gene Discovered

Alzheimer’s Disease (AD) is the most common form of dementia, accounting for 60-80% of dementia cases, and it is the sixth leading cause of death in the US; most people with the disease are 65 or older. The hallmark symptom of Alzheimer’s Disease is serious memory loss attributed to widespread cell death and loss of cortical mass. There is no cure for AD, so current treatments aim to slow the progression of the disease and improve patients’ quality of life.

The molecular basis of neurodegeneration in Alzheimer’s Disease lies in amyloid-beta plaque formation and the accumulation of neurofibrillary tangles. Studies have shown that aggregates of β-amyloid form amyloid plaques, which are predominantly found in the brains of Alzheimer’s patients. The accumulation of amyloid plaques in the brain impairs neuronal function and leads to the eventual death of cells.

Recently, researchers pinpointed an immune-system gene, IL1RAP, that increases the risk of developing AD. In a genome-wide analysis of over 500 individuals, those with an IL1RAP variant showed greater accumulation of amyloid plaque over a two-year period, independent of APOE ε4, the allele previously most strongly linked to AD.

IL1RAP, or interleukin-1 receptor accessory protein, is associated with the immune system and helps regulate the activity of microglia, “the brain’s ‘garbage disposal system.’” Studies have shown that microglial cells gather around plaques and clear amyloid deposits. The IL1RAP variant is associated with altered microglial activity, faster accumulation of amyloid plaque, greater cognitive decline, and greater cortical decline. Targeting the IL1RAP pathway may be important for promoting plaque destruction and thereby halting the progression of Alzheimer’s Disease, and research on this pathway may be key to eventually developing a cure.

-Kavya Raghunathan

Sources

(Original Study) GWAS of longitudinal amyloid accumulation on 18F-florbetapir PET in Alzheimer’s disease implicates microglial activation gene IL1RAP- http://brain.oxfordjournals.org/content/138/10/3076

New Gene Linked to Amyloid Plaque Buildup in Alzheimer’s Disease Identified- http://neurosciencenews.com/il1rap-alzheimers-apoee4-genetics-2826/

New Alzheimer’s Gene Identified- http://www.medscape.com/viewarticle/852556

What Is Alzheimer’s?- http://www.alz.org/alzheimers_disease_what_is_alzheimers.asp

Image by user Freesci via Wikimedia Commons

Pay Attention, Millennials!

It seems like today, there isn't a single person without some type of smartphone. We look around, or more commonly, we look down at our screens, and everyone is either texting, tweeting, Instagramming, or Snapchatting. Not long ago, we had to actually interact with people in person to give someone a message or even just to say hi. Now, we can Skype or FaceTime and communicate through an LCD screen. That's not even the worst part. A recent study reported that our attention span has been rapidly decreasing because of this. On average, humans now have an attention span of 8 seconds. Let me repeat that: 8 SECONDS. That is a shorter attention span than a goldfish. We cannot pay attention longer than a goldfish...

This short attention span is a huge problem in society. Children are failing to read books properly because they cannot hold their attention long enough to stay on the page. Adults struggle to finish one project at a time because they get bored and want to move on to something else. The list goes on. So what are we going to do to stop this? We can't get rid of smartphones, which seem to be the major cause of this loss of attention. We can't give every person Adderall, a medication used to treat attention deficit hyperactivity disorder, to keep them focused, as that would be inefficient and a waste of money. However, there is one thing people can do for themselves to help increase their attention span: meditate.

When people hear the word meditation, they usually picture a person sitting on the floor with their eyes closed, thumb and middle finger touching, making the 'om' sound. Yes, this is one way to meditate, but what many people don't know is that there are a variety of other ways to meditate and be mindful. All you have to do is focus: pay attention to your thoughts and feelings in the current moment. I know that in today's society, asking someone to focus and pay attention is a very big request, but everyone is capable of it, even if it's only for 8 seconds. The key is to do it a few times a day and increase your time spent meditating as each day passes. Countless studies have been done on meditation and attention, and research suggests that meditating at least once a day increases your attention span, along with many other benefits. How does meditation do this? Regular meditation has been linked to increased cortical thickness in the brain, as seen in brain imaging of subjects before and after they went through different meditation programs. This thicker cortex is thought to improve attention, which ultimately leads to better memory: when we are able to hold our attention longer, we retain more information, so, simply put, we are able to remember more.

There are so many positive effects of meditation. Not only does it lead to an increase in attention and memory, but it also reduces stress levels, increases relaxation, increases energy levels, decreases respiratory rate, increases blood flow, and much more. Meditation could help solve society's attention problem and many other problems, but only if people take a short amount of time out of their day to do it.

-Gianna Absi

http://www.telegraph.co.uk/news/science/science-news/11607315/Humans-have-shorter-attention-span-than-goldfish-thanks-to-smartphones.html (ARTICLE ON ATTENTION SPAN)

http://www.ncbi.nlm.nih.gov/pmc/articles/PMC1361002/ (ARTICLE ON CORTICAL THICKNESS)

http://www.medpagetoday.com/Geriatrics/GeneralGeriatrics/34269 (ARTICLE ON BETTER MEMORY)

http://www.ineedmotivation.com/blog/2008/05/100-benefits-of-meditation/ (BENEFITS OF MEDITATION)

Fear Conditioning and PTSD

Ivan Pavlov is a name familiar to most, whether scientific expert or budding psychology student. Pavlov laid the foundation of classical, or Pavlovian, conditioning, in which an animal or person learns to associate an initially meaningless stimulus in their environment with a stimulus that produces an automatic response, so that the newly meaningful stimulus alone comes to elicit the response. You might recognize his most famous experiment, in which he conditioned dogs to associate sounds with the arrival of food until the sound alone made the dogs salivate.

Following Pavlov, in 1919 a scientist named John B. Watson became one of the first people to demonstrate fear conditioning: using Pavlovian conditioning to induce a fear response or phobia. You may have heard of his notorious and ethically questionable “Little Albert” experiment, in which he used loud, unpleasant noises to condition an infant to fear a white rat, a fear that then spread to rabbits and other normally pleasing objects.

Fear conditioning can be used for more than just questionable experiments on infants, though. In recent years, scientists have been using what we know about fear conditioning to study disorders like post-traumatic stress disorder (PTSD), in hopes of finding new treatments.

PTSD is a disorder in which fear learned in a traumatic situation is triggered by seemingly neutral stimuli in ordinary, safe situations, producing detrimental physiological and behavioral responses. Studies have shown that the brain areas affected in PTSD, mainly the amygdala, hippocampus, and prefrontal cortex, are similar to those affected in rodents that have been fear conditioned. Evidence suggests that flawed synaptic plasticity, the brain's ability to rewire itself, could be part of the cause of PTSD.

During fear conditioning, rodents' brains rewire themselves to connect a certain stimulus with a fear response. During extinction, the process by which a conditioned response to a stimulus fades, the synaptic connections rewire again so that the stimulus is no longer associated with fear. In patients with PTSD, flawed synaptic plasticity may prevent the fear response from being overwritten effectively. As a result, patients produce an unwarranted fear response when exposed to neutral stimuli.
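The rise and fall of a conditioned fear can be illustrated with the Rescorla-Wagner learning rule, a standard model of associative learning that the article and its sources do not discuss here; it is swapped in purely for illustration. In this minimal sketch, V stands for the strength of the tone-shock association, and each trial nudges V toward 1 when the shock follows the tone and toward 0 when the tone appears alone. Modeling "flawed synaptic plasticity" as a smaller extinction learning rate is a hypothetical simplification, not a claim from the sources.

```python
def rescorla_wagner(trials, rate_acquire=0.3, rate_extinguish=0.3):
    """Track associative strength V across conditioning trials.

    trials: sequence of booleans; True means the tone was paired with the
    aversive stimulus (acquisition), False means the tone appeared alone
    (extinction).
    Update rule: V <- V + rate * (target - V), with target 1.0 or 0.0.
    """
    v = 0.0
    history = []
    for paired in trials:
        target = 1.0 if paired else 0.0
        rate = rate_acquire if paired else rate_extinguish
        v += rate * (target - v)
        history.append(v)
    return history


# Ten tone+shock pairings followed by ten tone-alone extinction trials.
schedule = [True] * 10 + [False] * 10

typical = rescorla_wagner(schedule)
# Hypothetical "impaired plasticity" case: extinction learning is much slower.
impaired = rescorla_wagner(schedule, rate_extinguish=0.05)

print(f"after conditioning: {typical[9]:.2f}")
print(f"after extinction (typical):  {typical[-1]:.2f}")
print(f"after extinction (impaired): {impaired[-1]:.2f}")
```

With these settings the association reaches about 0.97 after conditioning, drops to about 0.03 after normal extinction, but stays near 0.58 when extinction learning is slowed: a toy picture of a fear response that is never effectively overwritten.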

By attempting to understand the synaptic basis of PTSD using animal models of fear conditioning, scientists can work toward developing new medications that affect chemical action at the synapses. Hopefully, these medications will lessen the symptoms of PTSD and eventually lead to more accessible treatments for flawed synaptic plasticity and for fear and anxiety disorders in general.

-Kristen Burke

Sources:

Heatherton, Todd, and Diane Halpern. "Chapter 6, Learning." Psychological Science. By Michael Gazzaniga. 5th ed. New York: W W Norton, 2013. 222-37. Print.

Mahan, Amy L., and Kerry J. Ressler. "Fear Conditioning, Synaptic Plasticity and the Amygdala: Implications for Post Traumatic Stress Disorder." Trends in Neurosciences 35.1 (2012): 24-35. Web. 13 Oct. 2015.