Spatial Frequency Tuning Follows Scale Invariance in the Human Visual Cortex

Journal of Neuroscience (2026)

Emily Wiecek, Luis Ramirez, Michaela Klimova & Sam Ling

Our visual system can recognize patterns across many spatial scales. A fundamental assumption in visual neuroscience is that this ability relies on the putative scale-invariant properties of receptive fields (RFs) in early vision, whereby the spatial area over which a visual neuron responds is proportional to the spatial scale of information it can encode (i.e., spatial frequency, SF). In other words, the resolution of spatial sampling of an RF is assumed to be constant in the visual cortex. However, this assumption has gone untested in the human visual cortex. To address this, we leveraged model-based fMRI techniques that characterize the spatial tuning and SF preferences of cortical subpopulations sampled within a voxel across eight participants (five females, three males). We find that the voxel-wise ratio between peak SF tuning and RF size (expressed as “cycles per RF”) remains constant across visual areas V1, V2, and V3, suggesting that, at the population level, SF preferences are inversely proportional to RF size, a tenet of scale invariance in early human vision.

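The “cycles per RF” measure above can be illustrated with a short sketch. It assumes only that each voxel has an estimated peak SF (cycles/degree) and an RF size (degrees). Whether “size” means pRF sigma, FWHM, or diameter is an assumption here, not something the abstract specifies, and the voxel values are hypothetical. Strict scale invariance predicts a constant product and, equivalently, a log-log slope of -1.

```python
import numpy as np


def cycles_per_rf(peak_sf_cpd, rf_size_deg):
    """Number of cycles of each voxel's preferred SF that span its RF.

    peak_sf_cpd: peak of each voxel's SF tuning curve (cycles/degree).
    rf_size_deg: each voxel's pRF size (degrees). Whether "size" means
        sigma, FWHM, or diameter is an assumption of this sketch.
    """
    return np.asarray(peak_sf_cpd, dtype=float) * np.asarray(rf_size_deg, dtype=float)


def log_log_slope(peak_sf_cpd, rf_size_deg):
    """Slope of log(peak SF) against log(RF size); -1 means strict inverse proportionality."""
    slope, _intercept = np.polyfit(np.log(rf_size_deg), np.log(peak_sf_cpd), 1)
    return slope


# Hypothetical voxels (not data from the study) whose SF preference
# is exactly inversely proportional to RF size.
rf_size = np.array([0.5, 1.0, 2.0, 4.0])  # degrees, growing with eccentricity
peak_sf = 2.0 / rf_size                    # cycles/degree, falling as RFs grow

ratio = cycles_per_rf(peak_sf, rf_size)
slope = log_log_slope(peak_sf, rf_size)
print(ratio)  # [2. 2. 2. 2.]
```

With real pSFT and pRF estimates the product would scatter around a value rather than equal it exactly. Comparing its distribution across V1, V2, and V3 is one way to ask whether it stays constant.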

Dynamic estimation of the attentional field from visual cortical activity

eLife (2025)

Ilona Bloem, Leah Bakst, Joseph T McGuire & Sam Ling

Navigating around the world, we must adaptively allocate attention to our surroundings based on anticipated future stimuli and events. This allocation of spatial attention boosts visuocortical representations at attended locations and locally enhances perception. Indeed, spatial attention has often been analogized to a ‘spotlight’ shining on the item of relevance. Although the neural underpinnings of the locus of this attentional spotlight have been relatively well studied, less is known about the size of the spotlight: to what extent can the attentional field be broadened and narrowed in accordance with behavioral demands? In this study, we developed a paradigm for dynamically estimating the locus and spread of covert spatial attention, inferred from visuocortical activity using fMRI in humans. We measured BOLD activity in response to an annulus while participants (four female, four male) used covert visual attention to determine whether more numbers or letters were present in a cued region of the annulus. Importantly, the width of the cued area was systematically varied, calling for different sizes of the attentional spotlight. The deployment of attention was associated with an increase in BOLD activity in corresponding retinotopic regions of visual areas V1–V3. By modeling the visuocortical attentional modulation, we could reliably recover the cued location, as well as a broadening of the attentional modulation with wider attentional cues. This modeling approach offers a useful window into the dynamics of attention and spatial uncertainty.

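The abstract does not specify the model used to recover the locus and spread of attention, so the sketch below swaps in a simple moment-based estimator rather than reproducing the authors' method. Each voxel is assumed to have a preferred polar angle and an attentional modulation value (attended minus baseline BOLD). The weighted circular mean gives the locus, and the circular standard deviation gives the spread. The simulated profiles are hypothetical.

```python
import numpy as np


def attentional_field(polar_angle_deg, modulation):
    """Moment-based estimate of the locus and spread of attentional modulation.

    polar_angle_deg: each voxel's preferred polar angle (degrees).
    modulation: attended-minus-baseline BOLD response for each voxel.
    Returns (center_deg, circular_sd_deg).
    """
    theta = np.deg2rad(np.asarray(polar_angle_deg, dtype=float))
    # Treat only positive modulation as weight on the circle.
    w = np.clip(np.asarray(modulation, dtype=float), 0.0, None)
    if w.sum() == 0:
        raise ValueError("no positive modulation to estimate from")
    w = w / w.sum()
    resultant = np.sum(w * np.exp(1j * theta))      # weighted mean resultant vector
    center = np.rad2deg(np.angle(resultant)) % 360.0
    r = np.abs(resultant)                            # 1 = all weight at one angle
    spread = np.rad2deg(np.sqrt(-2.0 * np.log(r)))   # circular standard deviation
    return center, spread


# Simulated (hypothetical) modulation profiles centered on a cue at 90 deg.
angles = np.arange(0, 360, 5)


def bump(kappa, mu_deg=90.0):
    """Von Mises-shaped bump; smaller kappa means a broader profile."""
    return np.exp(kappa * (np.cos(np.deg2rad(angles - mu_deg)) - 1.0))


narrow_center, narrow_sd = attentional_field(angles, bump(kappa=8.0))
wide_center, wide_sd = attentional_field(angles, bump(kappa=1.0))
```

Both profiles recover a center near 90 deg, and the broader profile yields a larger circular SD. This mirrors, in toy form, recovering the cued location and a broadening with wider cues. A parametric fit (e.g., a von Mises plus baseline) would be a natural alternative when noise is substantial.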

Attention Alters Population Spatial Frequency Tuning

Journal of Neuroscience (2025)

Luis Ramirez, Feiyi Wang & Sam Ling

Spatial frequency (SF) selectivity serves as a fundamental building block within the visual system, determining what we can and cannot see. Attention is theorized to augment the visibility of items in our environment by changing how we process SFs. However, the specific neural mechanisms underlying this effect remain unclear, particularly in humans. Here, we used functional magnetic resonance imaging to measure voxel-wise population SF tuning (pSFT), which allowed us to examine how attention alters the SF response profiles of neural populations in the early visual cortex (V1–V3). In the scanner, participants (five female, three male) were cued to covertly attend to one of two spatially competing letter streams, each defined by low or high SF content. This task promoted feature-based attention directed to a particular SF, as well as the suppression of the irrelevant stream’s SF. Concurrently, we measured pSFT in a task-irrelevant hemifield to examine how the known spatial spread of feature-based attention influenced the SF tuning properties of neurons sampled within a voxel. We discovered that attention elicited attractive shifts in SF preference, toward the attended SF. This suggests that attention can profoundly influence the SF preferences of neural populations across the visual field, depending on task goals and native neural preferences.

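To make “attractive shifts in SF preference” concrete, here is a sketch that assumes a log-Gaussian tuning curve. This is a common parameterization for SF tuning, but the abstract does not say it is the one used. The attraction rule, which moves the peak a fraction `alpha` toward the attended SF in log space, is a hypothetical toy model. All values are illustrative.

```python
import numpy as np


def log_gaussian_sf_tuning(sf_cpd, peak_cpd, fwhm_octaves, amplitude=1.0):
    """Log-Gaussian SF tuning: Gaussian in log2(SF), bandwidth given as FWHM in octaves."""
    sf = np.asarray(sf_cpd, dtype=float)
    octaves_from_peak = np.log2(sf / peak_cpd)
    sigma = fwhm_octaves / (2.0 * np.sqrt(2.0 * np.log(2.0)))  # convert FWHM to SD
    return amplitude * np.exp(-octaves_from_peak**2 / (2.0 * sigma**2))


def attracted_peak(peak_cpd, attended_cpd, alpha):
    """Hypothetical attraction rule: move the peak a fraction alpha toward the attended SF in log2 space."""
    log_peak = np.log2(peak_cpd)
    return 2.0 ** (log_peak + alpha * (np.log2(attended_cpd) - log_peak))


# Illustrative values only (not from the study).
baseline_peak = 2.0  # cycles/degree
attended_sf = 8.0    # cycles/degree
shifted_peak = attracted_peak(baseline_peak, attended_sf, alpha=0.25)

# Read the peak back off a densely sampled tuning curve via its maximum.
sf_grid = np.logspace(-1, 1.5, 2001)  # 0.1 to ~31.6 cycles/degree
curve = log_gaussian_sf_tuning(sf_grid, shifted_peak, fwhm_octaves=2.0)
recovered_peak = sf_grid[np.argmax(curve)]
print(round(float(shifted_peak), 3))  # 2.828
```

Working in log SF keeps the shift symmetric in octaves. A quarter of the two-octave gap between 2 and 8 cycles/degree moves the peak by half an octave.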

Heterogeneous effects of cognitive arousal on the contrast response in human visual cortex

Journal of Neuroscience (2025)

Jasmine Pan, Louis Vinke, Joseph McGuire & Sam Ling

While animal studies have found that arousal states modulate visual responses, direct evidence for effects of arousal on human vision remains limited. Here, we used fMRI to examine effects of cognitive arousal on the gain of contrast response functions (CRFs) in human visual cortex. To estimate CRFs, we measured BOLD responses in early visual cortex (V1–V3) while participants (n=20, 14 females and 6 males) viewed stimuli that parametrically varied in contrast. To induce different cognitive arousal states, participants solved auditory arithmetic problems categorized as either Easy (low arousal) or Hard (high arousal). We found surprising diversity in the modulatory effects across individuals: some individuals exhibited enhanced response gain with increased arousal, whereas others exhibited the opposite effect, a decrease in response gain with increased arousal. The pattern of BOLD modulation was stable within individuals and was correlated with the degree of arousal-driven change in pupil size. Individuals who exhibited larger increases in pupil size with the arousal manipulation tended to show greater arousal-related decreases in the gain of visuocortical responses. We speculate that the polarity of the modulatory effect of cognitive arousal may relate to individual differences in cognitive effort expended in the high-difficulty condition, with individuals reaching different points on an underlying non-monotonic function.


How does orientation-tuned normalization spread across the visual field?

Journal of Neurophysiology (2025)

Michaela Klimova, Ilona Bloem & Sam Ling

Visuocortical responses are regulated by gain control mechanisms, giving rise to fundamental neural and perceptual phenomena such as surround suppression. Suppression strength, determined by the composition and relative properties of stimuli, controls the strength of neural responses in early visual cortex, and in turn, the subjective salience of the visual stimulus. Notably, suppression strength is modulated by feature similarity; for instance, responses to a center-surround stimulus whose components are collinear are weaker than responses when the components are orthogonal. However, this feature-tuned aspect of normalization, and how it may affect the gain of responses, has been understudied. Here, we examine the contribution of the tuned component of suppression to contrast response modulations across the visual field. To do so, we used functional magnetic resonance imaging (fMRI) to measure contrast response functions (CRFs) in early visual cortex (areas V1–V3) in 10 observers while they viewed full-field center-surround gratings. The center stimulus varied in contrast from 2.67% to 96% and was surrounded by a parallel or orthogonal surround at full contrast. We found substantially stronger suppression of responses when the surround was parallel to the center, manifesting as shifts in the population CRF. The magnitude of the CRF shift depended strongly on voxel spatial preference: it was seen primarily in voxels whose receptive fields straddle the center-surround boundary in our display, with little-to-no modulation elsewhere.

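One way to see how a tuned surround can shift a CRF is a divisive normalization sketch. In this model the surround adds to the denominator with a weight that has an untuned part plus an orientation-tuned part, which is largest for a parallel surround. The functional form and every parameter value below are assumptions for illustration, not the model fit in the study. The only values taken from the abstract are the 2.67% to 96% contrast span and the full-contrast surround; the contrast spacing is assumed.

```python
import numpy as np


def surround_weight(orientation_diff_deg, w_untuned=0.05, w_tuned=0.3):
    """Suppression weight: untuned part plus a part that peaks for a parallel surround."""
    return w_untuned + w_tuned * np.cos(np.deg2rad(orientation_diff_deg)) ** 2


def tuned_normalization_crf(center_contrast, orientation_diff_deg, surround_contrast=1.0,
                            r_max=1.0, c50=0.2, n=2.0):
    """Center response divisively normalized by an orientation-tuned surround pool."""
    c = np.asarray(center_contrast, dtype=float)
    w = surround_weight(orientation_diff_deg)
    return r_max * c**n / (c**n + c50**n + w * surround_contrast**n)


def effective_c50(orientation_diff_deg, c50=0.2, n=2.0, surround_contrast=1.0):
    """Semi-saturation contrast once surround suppression is folded into the denominator."""
    w = surround_weight(orientation_diff_deg)
    return (c50**n + w * surround_contrast**n) ** (1.0 / n)


# Center contrasts spanning 2.67% to 96% (log spacing is an assumption).
contrasts = np.geomspace(0.0267, 0.96, 7)
parallel = tuned_normalization_crf(contrasts, orientation_diff_deg=0.0)
orthogonal = tuned_normalization_crf(contrasts, orientation_diff_deg=90.0)
```

In this toy model the parallel surround raises the effective semi-saturation contrast (about 0.62 versus 0.30 with these parameters), so the CRF shifts toward higher contrasts. The model does not capture the spatial dependence on the center-surround boundary reported above.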

The effects of emotional arousal on pupil size depend on luminance

Scientific Reports (2024)

Jasmine Pan, Xuelin Sun, Edison Park, Marine Kaufmann, Michaela Klimova, Joseph T. McGuire & Sam Ling

Pupillometry is widely used to measure arousal states. The primary functional role of the pupil, however, is to respond to the luminance of visual inputs. We previously demonstrated that cognitive effort-related arousal interacted multiplicatively with luminance, with the strongest pupillary effects of arousal occurring at low-to-mid luminances (< 37 cd/m²), implying a narrow range of conditions ideal for assessing cognitive arousal-driven pupillary differences. Does this generalize to other forms of arousal? To answer this, we assessed luminance-driven pupillary response functions while manipulating emotional arousal, using well-established visual and auditory stimulus sets. At the group level, emotional arousal interacted with the pupillary light response differently from cognitive arousal: the effects occurred primarily at much lower luminances (< 20 cd/m²). Analyses at the individual-participant level revealed qualitatively distinct patterns of modulation, with a sizable number of individuals displaying no arousal response to the visual or auditory stimuli, regardless of luminance. Together, our results suggest that effects of arousal on pupil size are not monolithic: different forms of arousal exert different patterns of effects. More practically, our findings suggest that lower luminances create better conditions for measuring pupil-linked arousal, and that when selecting ambient luminance levels, consideration of the arousal manipulation and individual differences is critical.


Differential cortical and subcortical visual processing with eyes shut

Journal of Neurophysiology (2024)

Nicholas Cicero, Michaela Klimova, Laura Lewis & Sam Ling

Closing our eyes largely shuts down our ability to see. That said, our eyelids still pass some light, allowing our visual system to coarsely process information about visual scenes, such as changes in luminance. However, the specific impact of eye closure on processing within the early visual system remains largely unknown. To understand how visual processing is modulated when eyes are shut, we used functional magnetic resonance imaging (fMRI) to measure responses to a flickering visual stimulus at high (100%) and low (10%) temporal contrasts, while participants viewed the stimuli with their eyes open or closed. Interestingly, we discovered that eye closure produced a qualitatively distinct pattern of effects across the visual thalamus and visual cortex. We found that with eyes open, low temporal contrast stimuli produced smaller responses across the lateral geniculate nucleus (LGN), primary (V1), and extrastriate visual cortex (V2). However, with eyes closed, we discovered that the LGN and V1 maintained BOLD responses similar to those in the eyes-open condition, despite the suppressed visual input through the eyelid. In contrast, V2 and V3 had strongly attenuated BOLD responses when eyes were closed, regardless of temporal contrast. Our findings reveal a qualitatively distinct pattern of visual processing when the eyes are closed: one that is not simply an overall attenuation, but rather reflects distinct responses across visual thalamocortical networks, wherein the earliest stages of processing preserve information about stimuli that is then gated off downstream in visual cortex.


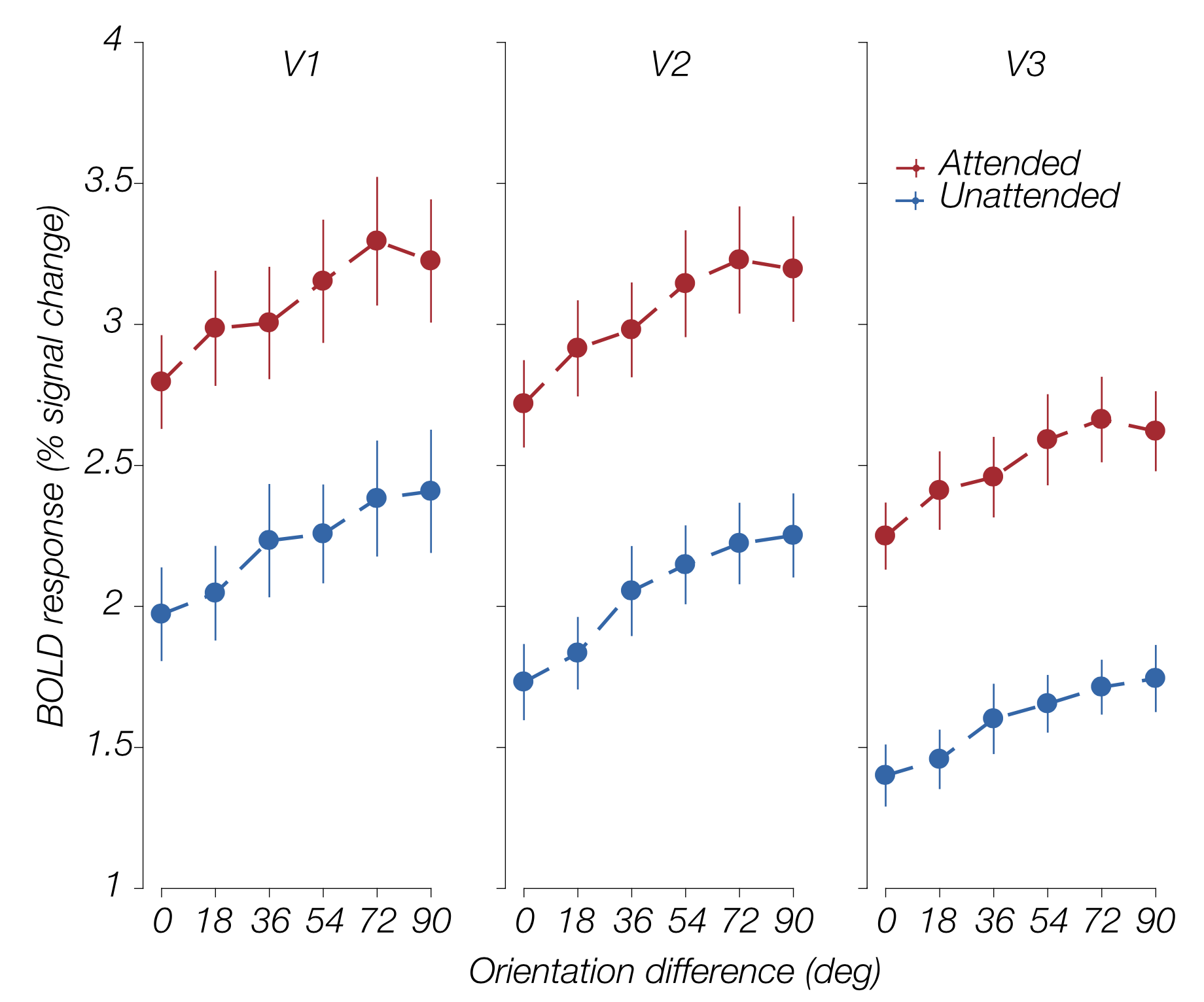

Attention preserves the selectivity of feature-tuned normalization

Journal of Neurophysiology (2023)

Michaela Klimova, Ilona Bloem & Sam Ling

Attention and divisive normalization both contribute to making visual processing more efficient. Attention selectively increases the neural gain of relevant information in the early visual cortex, resulting in stronger perceived salience for attended regions or features. Divisive normalization improves processing efficiency by suppressing responses to homogeneous inputs and highlighting salient boundaries, facilitating sparse coding of inputs. Theoretical and empirical research suggests a tight link between attention and normalization, wherein attending to a stimulus results in a release from normalization, thereby allowing for an increase in neural response gain. In the present study, we address whether attention alters the qualitative properties of normalization. Specifically, we examine how attention influences the feature-tuned nature of normalization, whereby suppression is stronger between visual stimuli whose orientation contents are similar, and weaker when the orientations are different. Ten human observers viewed stimuli that varied in orientation content while we acquired fMRI BOLD responses under two attentional states: attending toward or attending away from the stimulus. Our results indicate that attention does not alter the specificity of feature-tuned normalization. Instead, attention seems to enhance visuocortical responses evenly, regardless of the degree of orientation similarity within the stimulus. Since visuocortical responses exhibit adaptation to statistical regularities in natural scenes, we conclude that while attention can selectively increase the gain of responses to attended items, it does not appear to alter the ecologically relevant correspondence between orientation differences and strength of tuned normalization.

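The two possibilities contrasted above can be sketched with a toy normalization model. In Hypothesis A, attention multiplies responses uniformly. In Hypothesis B, attention selectively weakens the orientation-tuned suppression. The model form, the single test contrast, and all parameter values are illustrative assumptions. The sketch only shows why the parallel/orthogonal response ratio can distinguish the two.

```python
import numpy as np


def normalized_response(c, orientation_diff_deg, gain=1.0, tuned_scale=1.0,
                        c50=0.2, n=2.0, w_untuned=0.05, w_tuned=0.3, surround=1.0):
    """Normalization-model response with an optional attentional gain and
    an optional scaling of the orientation-tuned suppression weight."""
    w = w_untuned + tuned_scale * w_tuned * np.cos(np.deg2rad(orientation_diff_deg)) ** 2
    return gain * c**n / (c**n + c50**n + w * surround**n)


def tuning_ratio(**attention):
    """Parallel / orthogonal response at one center contrast; lower = stronger tuned suppression."""
    c = 0.5  # single illustrative center contrast
    return (normalized_response(c, 0.0, **attention) /
            normalized_response(c, 90.0, **attention))


away = tuning_ratio()
# Hypothesis A: attention multiplies responses uniformly (gain only).
uniform_gain = tuning_ratio(gain=1.5)
# Hypothesis B: attention selectively releases the tuned suppression.
tuned_release = tuning_ratio(tuned_scale=0.5)
```

Under Hypothesis A the ratio is unchanged by attention. Under Hypothesis B it moves toward 1. The reported finding that attention enhanced responses evenly regardless of orientation similarity corresponds to the pattern of Hypothesis A in this toy model.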

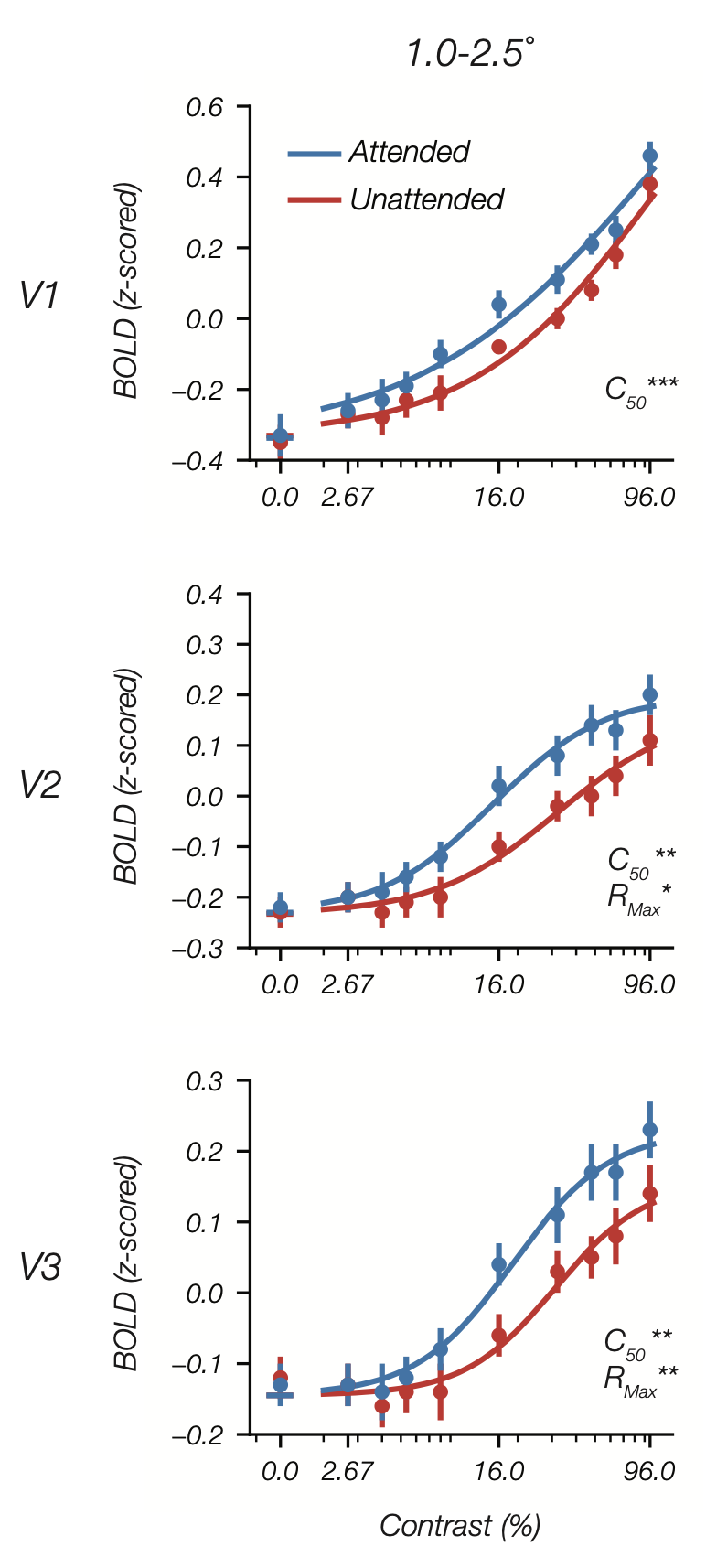

Feature-based attention multiplicatively scales the fMRI-BOLD contrast-response function

Journal of Neuroscience (2022)

Joshua Foster & Sam Ling

Functional MRI (fMRI) plays a key role in the study of attention. However, there remains a puzzling discrepancy between attention effects measured with fMRI and with electrophysiological methods. While electrophysiological studies find that attention increases sensory gain, amplifying stimulus-evoked neural responses by multiplicatively scaling the contrast-response function (CRF), fMRI appears to be insensitive to these multiplicative effects. Instead, fMRI studies typically find that attention produces an additive baseline shift in the blood-oxygen-level-dependent (BOLD) signal. These findings suggest that attentional effects measured with fMRI reflect top-down inputs to visual cortex, rather than the modulation of sensory gain. If true, this drastically limits what fMRI can tell us about how attention improves sensory coding. Here, we examined whether fMRI is sensitive to multiplicative effects of attention using a feature-based attention paradigm designed to preclude any possible additive effects. We measured BOLD activity evoked by a probe stimulus in one visual hemifield while participants (6 male, 6 female) attended to the probe orientation (attended condition), or to an orthogonal orientation (unattended condition), in the other hemifield. To measure CRFs in visual areas V1–V3, we parametrically varied the contrast of the probe stimulus. In all three areas, feature-based attention increased contrast gain, improving sensitivity by shifting CRFs towards lower contrasts. In V2 and V3, we also found an increase in response gain (i.e., increased responsivity of the CRF) that was greatest at inner eccentricities. These results provide clear evidence that the fMRI-BOLD signal is sensitive to multiplicative effects of attention.

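The distinction between an additive baseline shift, contrast gain, and response gain can be shown with the Naka-Rushton (hyperbolic ratio) function, a standard CRF form. The abstract does not state which parameterization was fit, so this is a sketch under that assumption, with illustrative parameter values.

```python
import numpy as np


def naka_rushton(c, r_max=1.0, c50=0.3, n=2.0, baseline=0.0):
    """Hyperbolic-ratio (Naka-Rushton) contrast-response function."""
    c = np.asarray(c, dtype=float)
    return baseline + r_max * c**n / (c**n + c50**n)


contrasts = np.array([0.03, 0.1, 0.3, 1.0])  # illustrative probe contrasts
unattended = naka_rushton(contrasts)

# Three ways attention could change the CRF (parameter values are illustrative):
additive_shift = naka_rushton(contrasts, baseline=0.1)  # same increment at every contrast
contrast_gain = naka_rushton(contrasts, c50=0.2)        # curve shifts toward lower contrasts
response_gain = naka_rushton(contrasts, r_max=1.3)      # curve scaled multiplicatively
```

An additive shift adds the same amount at every contrast. Contrast gain lowers `c50`, so half-maximal response is reached at a lower contrast. Response gain scales the whole curve by a constant factor. Only the latter two are multiplicative changes to the CRF.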

Congrats to Luis, recipient of the 2021 NIH D-SPAN Scholar Award!!

Luis Ramirez: 2021 D-SPAN Scholar

F99 Phase: Boston University | Sponsor: Sam Ling

Very exciting news! The NIH Blueprint Diversity Specialized Predoctoral to Postdoctoral Advancement in Neuroscience (D-SPAN) Award supports the pre- to post-doctoral transition of diverse graduate students. This two-phase award will facilitate completion of the doctoral dissertation and transition of talented graduate students (F99 phase) to strong neuroscience research postdoctoral positions (K00 phase), and will provide career development opportunities relevant to their long-term career goal of becoming independent neuroscience researchers. Currently, Luis is a PhD Candidate in the Graduate Program for Neuroscience at Boston University (BU), working with Dr. Sam Ling. His dissertation combines non-invasive human brain imaging, computational modeling, and psychophysics to understand the neurocomputational mechanisms that underlie how attention regulates perception. In the long term, Luis aims to investigate how perception and memory interact in visual cortex, and how attention facilitates this interaction. Moreover, as a first-gen, Afro-Latino student, Luis is committed to improving academia for historically excluded students, having led critical DEI committees and graduate student organizations throughout BU.