Deep Brain Stimulation

Over the past two decades, a neurosurgical technique known as Deep Brain Stimulation (DBS) has revolutionized the fields of both medicine and neuroscience. DBS has been able to accomplish incredible feats once deemed impossible, one of these being the extremely effective treatment of Parkinson’s Disease symptoms. DBS has also opened many doors to a bright future where debilitating neurological disorders and diseases may be eradicated.

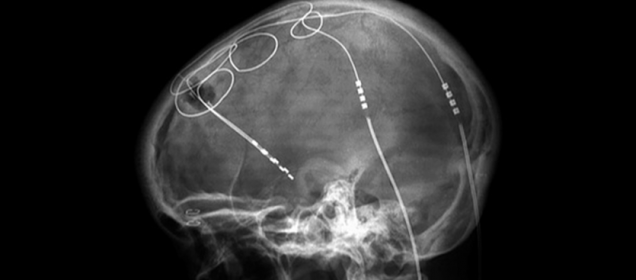

So what exactly is Deep Brain Stimulation? Deep Brain Stimulation essentially consists of sending electrical impulses to certain brain areas via surgically implanted electrodes, resulting in the removal of adverse neurological symptoms. The exact mechanism by which DBS exerts its effect on neurons is still not fully understood, although many theories have been presented. One popular explanation for the mechanism behind DBS is that electrode stimulation of certain brain areas induces changes in neuronal activity in the respective neural circuits that would ultimately change physiological and cognitive behavior.

An example of DBS in action can be seen through one of its most common applications, Parkinson’s Disease treatment. Parkinson’s Disease is characterized by voluntary motor deficits and symptoms including resting tremor, bradykinesia, postural instability, extreme difficulty initiating voluntary movement, and more. These symptoms are caused by the loss of dopamine-producing neurons in an area called the substantia nigra, which is part of a more complex circuit within the basal ganglia that controls the initiation of voluntary movement. Needless to say, this neurodegenerative disease is very debilitating for the patient. When medications such as L-DOPA fail to alleviate Parkinson’s Disease symptoms, DBS steps in to save the day. Electrodes are surgically inserted into brain areas such as the internal Globus Pallidus or the Subthalamic Nucleus and are connected to an implantable pulse generator (IPG) placed under the skin of the patient’s chest or abdomen. Once electrical impulses are delivered to those brain areas via the implanted electrodes, the patient’s motor symptoms can diminish dramatically, often within moments, and voluntary movement becomes far easier.

DBS holds vast potential across medicine and neuroscience. Research is under way to investigate its effect in treating a range of neurological and psychiatric disorders, including major depressive disorder, OCD, PTSD, addiction, Alzheimer’s Disease dementia, chronic pain, schizophrenia, eating disorders, and more. As mentioned, DBS has opened the doors to a new and hopeful future in which neuroscience research and medicine may overcome the most debilitating of neurological disorders. Ironically, although DBS was created to answer the many questions plaguing neuroscientists and doctors today, it has instead prompted us to ask many more questions and has further shown how little we know about our own brains. I will end our short discussion of Deep Brain Stimulation with a quote by Santiago Ramón y Cajal, the father of modern neuroscience himself, which sums up what DBS, and neuroscience itself, is all about: “To know the brain…is equivalent to ascertaining the material course of thought and will, to discovering the intimate history of life in its perpetual duel with external forces.”

Writer: Richard Kuang

Editor: Audrey Kim

Sources:

https://www.sciencedaily.com/

https://www.ncbi.nlm.nih.gov/

Klotho and the Aging Brain

Why does the brain deteriorate with age? Researchers might finally have found a potential cause. The klotho protein has been found to be associated with the aging brain. Specifically, higher levels of klotho have been associated with longevity of the brain. As you grow older, however, your brain’s klotho levels decrease, and researchers believe that this decrease may be related to age-related impairments.

Experiments conducted at the Gladstone Institute of Neurological Disease measuring klotho levels in mice show decreasing levels of klotho with age in the choroid plexus, a brain structure whose cells are responsible for producing cerebrospinal fluid and forming a barrier between the central nervous system and the bloodstream. In addition, experimentally reduced klotho levels in mice resulted in increased inflammation in the brain. The researchers thus found that klotho plays a role in maintaining the integrity of the blood-brain barrier – a gatekeeper that protects the brain from the peripheral immune system. This observation is particularly important because brain inflammation is a prominent feature of neurodegenerative diseases such as Alzheimer’s disease and multiple sclerosis.

Klotho has also shown therapeutic potential, rescuing cognitive functions such as spatial learning and memory that were impaired as a result of aging or dementia. Researchers at UCSF injected a small fragment of the klotho protein into 18-month-old mice (about the same stage in the mouse lifespan as a 65-year-old human). They observed that a single injection of klotho significantly improved the ability of the mice to navigate and learn new tasks. The researchers then injected the protein into mice that were engineered to produce abundant levels of alpha-synuclein in order to induce Parkinson’s disease-like symptoms, namely movement disturbances. As a result of klotho administration, these mice displayed improvement in motor function as well as improvement in learning to navigate and explore new territories. All of these improvements appeared even though the mouse brains still contained the toxic alpha-synuclein, indicating that the klotho protein appears to play a protective role against toxicity in the brain.

So, given this potential protective role for klotho, a next step for researchers could be to develop a klotho-related treatment for individuals suffering from neurodegenerative diseases.

Writer: Nathaniel Meshberg

Editor: Audrey Kim

Sources:

https://neurosciencenews.com/aging-brain-10179/

https://www.sciencedaily.com/releases/2017/08/170808150006.htm

FACULTY FEATURE: Arash Yazdanbakhsh

Stepping onto Commonwealth Avenue the Wednesday morning after the Red Sox won the World Series was a thrill. Barricades were already set up along the streets, fans were lining up in their jerseys, and kids were champing at the bit to see their heroes parade before their eyes through the city streets. And yet, it was a conversation at 677 Beacon St. with Dr. Arash Yazdanbakhsh that radiated much more passion than any trophy possibly could.

6,191 miles; that’s how far Dr. Yazdanbakhsh travelled from Iran to move to the United States. Already an MD in Iran before leaving, Dr. Yazdanbakhsh described practicing medicine in Iran as much different than in the United States. In Iran, an MD was expected to maintain an additional one or two other jobs in order to make a living. By submitting peer-reviewed papers to American professors via email, Dr. Yazdanbakhsh was noticed in the American research scene. Through his outgoing attitude, Dr. Yazdanbakhsh secured a spot at Boston University, where he ultimately obtained a Ph.D. in computational neuroscience.

When asked about role models, Dr. Yazdanbakhsh quickly mentions Albert Einstein. After reading one of Einstein’s books, Dr. Yazdanbakhsh was astounded by Einstein’s love of physics and mathematics. Dr. Yazdanbakhsh applied his love for physiology and found his passion in neuroscience, a field that has incredible room for innovation in combination with a subject as meticulous as mathematics.

When he first started in research, Dr. Yazdanbakhsh was involved with experiments on perception and visual experience that used eye tracking to record data from virtual reality. Tying this to reaction times, Dr. Yazdanbakhsh inferred what certain saccades meant when they occurred. This was done through the gathering of multitudes of data, then the deep analysis of the data through computer models and programs. In later research, Dr. Yazdanbakhsh became involved in researching other forms of neural modeling, the psychophysics behind Parkinson’s disease, and electrophysiology.

At Boston University, Dr. Yazdanbakhsh teaches NE 212, CN 510, and CN 530. NE 212 introduces students to the fundamentals of statistical research via MATLAB. This course focuses on explaining numerical integration programs through two settings: probability distributions and simulations of neural dynamics. CN 510/530 - Neural and Computational Models of Vision - delves into the constraints of mammalian visual processes through neural and computational models found using computer simulations.

In all his courses, Dr. Yazdanbakhsh praises the work of his students.

“The most gratifying thing is when a student of mine is thinking hard to solve a problem, and then steps outside the normal techniques and begins to think of novel ideas, more like a colleague of mine rather than a student,” Dr. Yazdanbakhsh said.

Dr. Yazdanbakhsh pairs hard thinking with creativity, and experimental design with thoroughness, all of which he describes as essential tools in a scientist’s toolkit.

For incoming neuroscience students, Dr. Yazdanbakhsh recommends building a broad view of the basis of neuroscience rather than specializing in a certain topic from the get-go.

“While there can’t be exact advice, observe as much as possible your freshman year,” Dr. Yazdanbakhsh said. “Network. Talk to other students that are participating in research and find what you are interested in. This will allow you to find your niche.”

Dr. Yazdanbakhsh comments on the importance of being a part of such a distinguished neuroscience department at Boston University.

“There is a wide range of colleagues that are close together and allows for a good amount of cross-pollination. This allows for better work,” Dr. Yazdanbakhsh said. He adds that BU faculty consistently put effort not only into their own research but into their colleagues’ as well. “BU is the perfect place where you can walk down the street and the environment just recharges you.”

When he’s not in the classroom or in the lab, Dr. Yazdanbakhsh loves to be physically active. With a playlist ranging from Baroque to soft rock, Dr. Yazdanbakhsh enjoys tackling the gym for a workout. “The music adds a sense of rhythmicity to my workout.” When possible, Dr. Yazdanbakhsh tries to get outside and hike, as he places a lot of emphasis on connecting with nature, including sunlight and the outdoors.

Dr. Yazdanbakhsh’s tie to nature is evident when you walk into his office for the first time and notice the flowering green plant hanging in the middle of the ceiling, sprawling in every direction. Dr. Yazdanbakhsh compares the vines of his plant to the dendritic spines of the brain.

“At the peak of its growth, this plant may have a few hundred leaves, so just imagine the brain is 100 times denser than this plant,” Dr. Yazdanbakhsh said. Dr. Yazdanbakhsh’s comparison highlights the detailed interconnections necessary for brain function. Like the plant and the brain, scientists around the world must come together and work in unison to answer the “serious questions that have yet been left unanswered.”

Who knows? Once that happens, there may be another parade rolling down Comm Ave, just this time, not for baseball.

Written by Trey Moore

Edited by Brian Privett, Emme Enojado

BU Study Finds First Evidence of Genetic Link to CTE

A new study out of the Boston University School of Medicine shows the first evidence of a genetic link to developing chronic traumatic encephalopathy (CTE). CTE is a neurodegenerative disease that may be diagnosed in patients with repeated head trauma. These patients typically exhibit cognitive and emotional issues including difficulty planning, emotional instability, substance abuse, impulsivity, and short-term memory loss. However, CTE can only be diagnosed postmortem, and there is currently no reliable way to predict who will develop this disorder.

This study, published in Acta Neuropathologica Communications, is the first to show that there may be a genetic predisposition to developing CTE. Eighty-six brain samples from deceased American football players were examined for the presence of a missense mutation (rs3173615) in the TMEM106B gene. These players had all been diagnosed with CTE after their deaths. The mutation has been identified as playing a role in neuroinflammation and TDP-43 neurodegenerative diseases (such as ALS and Alzheimer’s). Thus, this specific mutation was of interest to researchers, who have been trying to find a possible genetic basis for the development of CTE.

Researchers identified that those diagnosed with CTE were more likely to have the missense mutation (rs3173615) on the TMEM106B gene than those without the disease. They also found that those with this gene variant were 2.5 times more likely to have developed dementia. Researchers found that the presence of rs3173615 was associated with synaptic loss, dementia, and density of abnormal tau protein. However, these results were only seen when analyzing the brains of those diagnosed with CTE.

When compared to controls, the same associations were not observed. This study is the first to identify a possible genetic link to the development of CTE. The actual applicability of this study is limited, but it does provide possible paths for future research into the causes of CTE. There are likely to be many genes that contribute to the development of CTE, and as such, further research is needed. It is possible that this research could lead to preventative measures, diagnostic methods, and treatment of CTE, all of which are extremely limited as of now.

CTE has been a popular topic in the news recently, with evidence accumulating that links head trauma from contact sports to CTE-like symptoms. A Boston University study in 2017 found that 99% of former NFL players’ brains that were studied showed signs of CTE. Evidence has also been produced to show that playing contact sports as a minor may contribute to cognitive deficits later in life. Studies like these have led some to argue that contact sports (especially football) need to be altered to mitigate the risk of head trauma. Such alterations may include better helmets or rule changes. Some have even argued that children should not play football because the risk of future brain trauma may be too great. More studies like this one are needed to assess the validity of these arguments, but in the meantime, these studies have ignited the debate around contact sports.

Writer: Jayden Font

Editor: Lauren Renehan

Sources:

https://www.bostonglobe.com/metro/2018/11/03/study-hints-that-certain-gene-may-worsen-cte/QORz6GjMsKCjqGvAOBblxN/story.html

https://actaneurocomms.biomedcentral.com/articles/10.1186/s40478-018-0619-9

https://www.mayoclinic.org/diseases-conditions/chronic-traumatic-encephalopathy/symptoms-causes/syc-20370921

https://www.cell.com/trends/molecular-medicine/pdf/S1471-4914(08)00186-X.pdf

http://www.bu.edu/cte/2017/07/25/bu-researchers-find-cte-in-99-of-former-nfl-players-studied/

http://www.bu.edu/cte/2017/09/19/study-suggests-link-between-youth-football-and-later-life-emotional-behavioral-and-cognitive-impairments/

A Value in Old-Fashioned Memorization

With a computer or telephone in hand, it seems pointless to memorize simple facts, lists of things, poems, directions, dates or formulas. Before modern technology, people constantly had to exercise their minds, memorizing what we now leave to our computers and phones to remember. Schools are now restructuring their curricula to teach students to apply knowledge rather than recite information, which seems logical. Yet there may be a real benefit to memorizing seemingly pointless information.

Memorizing information is the equivalent of lifting weights in the gym, but instead of building more muscle, it raises the levels of acetylcholine in the brain. Acetylcholine is a neurotransmitter that scientists have identified as helping to keep the mind sharp; it is critical in creating and strengthening connections between neurons. People often blame memory lapses on aging, but scientists are finding that they have more to do with how much the person exercises the mind. More specifically, the brain produces acetylcholine when the person is exercising the mind, such as when a person is trying to pay attention [1]. Although many people’s memories increasingly start to fail after their mid-40s, elderly people who constantly exercise their minds show high levels of acetylcholine, and their memory, as a result, does not deteriorate as rapidly. High levels of acetylcholine are also associated with a reduced risk of dementia; for example, cholinesterase inhibitors, which inhibit the enzymes that degrade acetylcholine and consequently lead to higher acetylcholine levels, have been used to slow down the effects of Alzheimer’s [1].

In response to this trend of memory loss, researchers in New York identified five compounds that they say naturally restore optimal levels of acetylcholine: Alpha GPC, Huperzine A, Bacopa Monnieri, Lion’s Mane Mushroom, and Ginkgo Biloba [2]. This formula was named RediMind. RediMind was created so that people could use modern technology without drastically losing their memory as they age. In a placebo-controlled clinical trial by Princeton Consumer Research, the group that took RediMind scored 45% better than the placebo group [2]. Another reported benefit of RediMind is that it gives the brain a long-term energy boost, compared to the short-term boosts of drugs like caffeine.

RediMind is now for sale but has not been reviewed by the FDA. This could be one step closer to creating enhancers for superpower memorization, but the product has not been tested enough to prove that it consistently improves memory or that it is safe for the brain. So, for now, I would stick to memorizing directions, grocery lists, and more vocabulary words to work out my brain.

Writer: Lauren Renehan

Editor: Audrey Kim

Source:

- https://globenewswire.com/news-release/2018/07/24/1541146/0/en/Alzheimer-s-related-Study-Brain-Training-Upregulates-Acetylcholine.html

- https://www.nutreance.com/articles/redimind?utm_medium=google_display&utm_campaign=redimind_us_content&utm_source=neurosciencenews.com&utm_term=long%20term%20memory&gclid=EAIaIQobChMIg8z664a33gIVAhLTCh3CIg5VEAEYASAAEgJz_vD_BwE

FACULTY FEATURE: Mario Muscedere

For Dr. Mario Muscedere, it all started with animals. During the weekends and summers of his childhood, the Baltimore, Maryland native would rise with the sun and escape with his dog, a mutt and former stray, to explore the woods and streams surrounding his suburban neighborhood, not returning home to reality until the dark swallowed the day.

“I was one of those kids who had to be restrained if there was a dog, cat, or any kind of animal around,” Dr. Muscedere said. “I was turning over rocks, always begging to go to the zoo, anything I could get I could not get enough of.”

Now, Dr. Muscedere is a full-time lecturer, with roles in both the Undergraduate Program in Neuroscience and the Department of Biology at Boston University. Currently, he teaches BI/NE 545: Neurobiology of Motivated Behavior in the fall, and BI 315: Systems Physiology and BI 542: Neuroethology in the spring. Although his fascination with animals and behavioral biology has been a constant in his life, he was not introduced to the field of neuroscience until he arrived at BU for his Ph.D.

“I graduated with a B.S. in Biology from the University of Maryland, so I didn’t really have any neuroscience experience until I came here,” Dr. Muscedere said. “I did my Ph.D. research in the Traniello Lab, and I thought I was just going to study termite behavior, because that’s what I was doing as an undergraduate. But the Traniello Lab was discussing a new project they wanted to explore: the physiology and neurobiology that underlies behaviors in ants. The lab was heading in that direction, and that was the first time I really started to become a neuroscientist.”

For his graduate research, he focused on studying the sensory, neuromodulatory, and behavioral mechanisms that support task performance of individual worker ants in cooperative colonies. During this time, he also learned how to perform basic neuroscience laboratory techniques, such as brain dissections and immunocytochemistry, to investigate the brain anatomy and neurochemistry of his ant subjects. Then, as a postdoctoral faculty fellow and lecturer for BU’s Undergraduate Program in Neuroscience, he assisted in the revamping of the undergraduate neuroscience major – planning and creating course themes and topics along with lab manuals and the curriculum.

“I did that for about three years, and then I got a job teaching at a small liberal arts college in Arkansas,” Dr. Muscedere said. “I worked there for three years (great school, great students) but decided to come back to Boston because it was just the right move. So when this job opened up, I went for it.”

Dr. Muscedere returned in September of 2017, making this his second academic year as a full-time lecturer at BU. He says that BU gives him the freedom to try new things, especially in terms of instructional strategies, whether that be clicker questions or starting new classes to give students interesting experiences. Additionally, as a lecturer, he is able to form meaningful academic relationships with students.

“The best part of my day is just sitting in office hours and having people come by and talking about the subject,” Dr. Muscedere said. “It can be hard to make those one-on-one relationships when you teach really big classes, but in some of my upper level classes that are about 15 students it’s a lot easier and that’s what I really like: having that personal effect on somebody’s career, having an ‘aha’ moment with them.”

He credits the tight-knit community of the neuroscience department for this opportunity to have a personal effect on students.

“I think with the neuroscience program in particular, since it’s small we think a little more about undergraduate experience, whereas in some of the bigger departments where the divisions are more spread out, that’s harder to do,” he said. “So I think that it’s easier for us to get to know students than it is for some of the other programs.”

While his current focus is undergraduate education, he continues to do research, collaborating with the Traniello lab and finishing up some of the projects he started in his previous job. Dr. Muscedere’s studies aim to understand how worker brain evolution may be linked to the behavioral, social, ecological, and life history variation that exists among species, investigating sensory deprivation and neuroplasticity, among other areas.

“How animals in social groups make decisions and think strategically… it’s something that applies to humans too,” he said.

For current students, he has one piece of advice.

“Think about what you might want to do when you graduate and set yourself up now to get where you want to go, as opposed to scrambling in the last two years,” Dr. Muscedere said. “So start reaching out to your professors, ask about research and shadowing opportunities, volunteering, and UROP projects. Build towards getting experience because that is what will help you get where you want to go.”

According to Dr. Muscedere, anybody who is in college now for neuroscience will presumably witness incredible gains made in the next 30 to 50 years. Because of this, going to graduate school for neuroscience opens up opportunities to work on projects that are truly cutting edge.

“In many ways, the field of neuroscience is still in its infancy,” Dr. Muscedere said. “The central problem in neuroscience, or at least behavioral neuroscience, is how do we connect activity of neural circuits to behavior? That question is still almost wide open, and what better time to get involved than in the beginning?”

Written by: Emme Enojado

Editor: Yasmine Sami

The Role of Music in Neurodegeneration: How it Can Help

Music is all around us. It’s in our ears as we walk to class with our earbuds in. It’s in the cars we drive and the Ubers we take. It’s in malls and grocery stores. It’s even infiltrated the smallest of spaces, like elevators in hotel lobbies. This ubiquity of music may make it lose its significance in our eyes. However, this is not the case for people suffering from neurodegenerative diseases such as Alzheimer’s and Parkinson’s. Music plays an important role in the lives of these people. While they may find themselves lost in their own minds, music can help guide them to lucidity, even for a little bit. Clinicians and researchers are utilizing music therapy as a supplemental treatment for people who suffer from neurodegenerative diseases. This approach has been found to be extraordinarily beneficial in such patients. In fact, in his book Musicophilia, Oliver Sacks writes: “music therapy with such patients is possible because musical perception, musical sensibility, musical emotion, and musical memory can survive long after other forms of memory have disappeared. Music of the right kind can serve to orient and anchor a patient when almost nothing else can.” [1]

One of the most prevalent neurodegenerative diseases is Alzheimer’s disease (AD), with 5.7 million Americans suffering from it in 2018 [2]. So far there is no absolute ‘cure’ for AD. It is characterized by the accumulation of amyloid-beta plaques and of phosphorylated tau (p-tau) in neurofibrillary tangles, which is associated with cell death and neurodegeneration, notably in the hippocampus. Music therapy has been found to be an effective non-pharmacological approach to managing AD. A study by Arroyo-Anlló et al. examined self-consciousness in people suffering from mild to moderate AD, playing familiar music for one group of people and unfamiliar music for another group. They found that the familiar music intervention resulted in improvement in some aspects of self-consciousness such as personal identity, affective state, moral judgements and body representation. The researchers suggested that the improvement in self-consciousness may be due to the enhancement of general cognitive state by familiar music [3].

Another study investigated the effects of background music on the autobiographical memory of those with mild AD and also found encouraging results. The investigators conducted Autobiographical Memory Interviews (AMI) in which they asked questions related to major events in the individuals’ lives, spanning childhood, early adulthood and recent life. They found that subjects who had the ‘Spring’ movement from Vivaldi’s ‘Four Seasons’ playing in the background during the interview had higher AMI recall scores, especially for recent personal semantic memories. Subjects in the music condition had reduced state anxiety levels, and the researchers therefore attribute the enhanced autobiographical recall to an anxiety reduction mechanism brought on by music [4].

Music seems to have interesting effects on people who suffer from Parkinson’s disease as well. Parkinson’s is the second most common neurodegenerative disorder, with approximately 60,000 Americans diagnosed with it every year [5]. It is usually caused by cell death in the substantia nigra in the basal ganglia. This causes a depletion of dopamine in the brain, and that depletion is responsible for the symptoms present in Parkinson’s, such as gait abnormalities. Oliver Sacks makes another interesting observation in his book where he states, “The patient can regain a fluent flow with music, but once the music stops, so too does the flow. There can, however be longer-term effects of music for people with dementia – improvements of mood, behavior, even cognitive function – which can persist for hours or days after they have been set off by music.” [6] Researchers have found some encouraging results in line with Sacks’s conclusion. In a study conducted by Benoit et al., it was found that musically cued gait training improved gait, motor timing, and perceptual timing. They trained patients with Parkinson’s to walk to the beats of German folk music on their own, without giving them exact instructions on how to do so. They found that not only did these patients show improvements in gait velocity and stride length, but this effect outlasted the duration of the training for up to one month [7].

In the same study, they also found that music therapy has the ability to enhance perceptual timing. They assessed this using a tone duration detection task and found that the patients who had undergone musical intervention improved their performance in these tasks. The researchers state that both of these effects may be attributed to a cerebello-thalamo-cortical tract, which is activated by auditory cues and compensates for the dysfunction in the basal ganglia. On this account, the enhancement in perceptual timing is responsible for the improvements in the subjects’ gait [8].

While we may take music for granted, it can play a very important part in people’s lives – particularly those that have to live with neurodegenerative disorders like Alzheimer’s and Parkinson’s. Unfortunately, there’s no exact ‘cure’ for these diseases, but interventions such as music therapy can still help provide a unique approach to alleviate many of the debilitating symptoms presented by these disorders.

Writer: Farwa Faheem

Editor: Kawtar Bennani

Sources:

- Musicophilia by Oliver Sacks

- https://www.alz.org/media/HomeOffice/Facts%20and%20Figures/facts-and-figures.pdf

- https://www.hindawi.com/journals/bmri/2013/752965/

- https://search-proquest-com.ezproxy.bu.edu/docview/232495436?accountid=9676&rfr_id=info%3Axri%2Fsid%3Aprimo

- https://parkinsonsdisease.net/basics/statistics/

- https://www.frontiersin.org/articles/10.3389/fnhum.2014.00494/full

Unique Folding Patterns in Autism Brains

Recent studies have shown that the brains of children with Autism Spectrum Disorder (ASD) fold differently than typically developing brains, being either unusually smooth or unusually convoluted depending on location and age. Researchers measure the development of neural tissue folds in the cortex as changes in the local gyrification index, a ratio that compares the area of the cortical surface, including the portions buried inside the sulci, with the area of the smooth outer surface. Using this information, researchers can better understand the link between autism and the folds of the brain.

In a study at San Diego State University, it was found that school-age children and adolescents with autism had more intricately folded regions. The left temporal and parietal lobes, which are responsible for processing sound and spatial information, were shown to have these intricate folds in children with autism. Research also found increased gyrification in the right temporal and frontal lobes, which are responsible for decision making and motor skills. In contrast, a second study found that preschoolers with autism do not show this degree of intricate folding unless they have enlarged brains. Preschoolers with autism were also found to have an unusually smooth region in the occipital lobe (specifically in the region dedicated to recognizing faces). Taken together, these studies suggest that brain folding reflects the different developmental path that autistic brains follow when compared to typically developing brains. According to Ruth Carper, a researcher at San Diego State, “many of the brain areas with exaggerated folding are among the earliest to develop folds during gestation.” Thus, the folding may increase in intricacy and convolution over time due to this developmental disruption. In another study at the University of California, Davis, researchers found that children with enlarged brains may have a specific subtype of autism, since only children with enlarged brains exhibited this degree of increased and atypical folding. This study adds to the evidence that folding patterns depend on the development of the individual and where that individual lies within the autism spectrum.

It’s clear that ASD is a very complex subset of conditions and traits that are influenced by various genetic and environmental factors. A great deal of research is focused on identifying physical differences, such as brain folding patterns, which are present in the autistic brain. In doing so, resources may be found to aid and benefit a developing brain with ASD. Lastly, such research will only further our understanding of how all of our brains grow and evolve over time.

Writer: Ava Genovese

Editor: Farwa Faheem

Sources:

STUDENT FEATURE: Radhika Dhanak

College often emits the energy of a coffee shop on a Friday morning: the overwhelming presence of chaos, the necessity of caffeine, and the scarcity of places to fit in. In the midst of this hectic, congested, and high-strung environment sits the unperturbed and poised Radhika Dhanak, a senior in the College of Arts and Sciences and current President of the Mind and Brain Society. She sips her small latte as she admires the silent presence of the trees outside the window and ignores the imposing energy that surrounds her. Radhika’s mind, however, is not as tranquil as her disposition. “Sometimes I look at the trees and feel in awe; these things just grow,” she said. “I’m so insignificant, it really takes the pressure off.” She radiates the same serene, but calculating, energy from the comfort of the coffee shop as she does while delegating as MBS’s fearless leader.

Radhika’s childhood was just as dynamic and ever-changing as her mind. She lived a comfortable life of consistency in Dubai where her future could be seen in the shadow of her older brother and sister. This changed at the age of fifteen when she moved to Ahmedabad, India. “[Moving] was just this disruption, you know?” Radhika said. “It was like uprooting everything when you had just laid down the roots...It was letting go of everything that was familiar.”

In hindsight, she recalls feeling enlightened. “I wouldn’t trade [my experience] for anything else. I wouldn’t have done the things I did afterwards [if it hadn’t happened]; I wouldn’t have learned to live and think the way I do now. Moving was necessary.”

Radhika attended two different academic institutions while she lived in India, and the two offered very different experiences.

“My first school wasn’t very pleasant,” she said. “By the second time I moved I really shut myself out and didn’t take full advantage of the opportunity that was in front of me. Now, I know to be more receptive to things and not just live in my head.”

Her second institution focused heavily on experiential learning, which taught her about passion, leadership, and empathy. Her director held the theory that schools are for students.

“When it comes to rules, we decide,” Radhika said. “You do the work that matters to you and you give back to society.” She carried this wisdom across the Atlantic to Boston University, where she now uses it to fulfill her three academic disciplines -- neuroscience, philosophy, and visual arts -- and to give back to the neuroscience community by serving as president of one of the most prominent academic organizations on campus.

As for why she decided to live a fifteen-hour flight away from home, she offers the following recollection.

“I was planning to study in the UK, which is where my sister studied at the time, but I would’ve depended on her for everything and I really didn’t want that,” she said. “I wanted to learn on my own, so I applied to BU and forced myself again to learn from change.”

The biggest challenge presented itself in the form of her first year, and once she conquered it, she found one lesson to be very true.

“Every single semester presents a new challenge. You always come out of it thinking that you’ve figured it all out, and then the next one starts and you realize you really haven’t figured it out at all. It’s difficult.”

Her second year was marked by constant questioning.

“I had many existential crises… I’d look outside and see people in cars and see people in buildings and I thought, why? What are we doing? Why are we doing this? What does this mean?”

These questions inspired her to take a class on existentialism and to declare a second major in philosophy, the discipline that would give her much-needed perspective.

“I started thinking: what do my actions mean? How do I make them purposeful? Am I supposed to be selfish and invested in my head?” she said. “It’s my responsibility to think better than that, to try to change something, to go where there is an imbalance of access and resources.”

Her junior year put that enlightenment into practice: she became MBS secretary, an LA, a research assistant, and a peer mentor, declared a minor in visual arts, and took five classes each semester.

Though Radhika currently works in the Reinhart Lab at Boston University -- a lab that seeks to understand the nature of visual perception and cognition in the healthy adult brain and how it is affected by aging and neuropsychiatric illnesses -- her primary goals in life are not necessarily career oriented.

“I think my primary goal is to figure out what life means to me and understand how I can live it well,” she said. “In everything that I do, I focus on figuring out what I needed to learn from that situation and try to expand my understanding of life, people, and myself. If you’re looking to learn, you can learn from anything, so I hold on to neuroscience, I hold on to philosophy, I hold on to visual arts, then I add things outside of them to take full advantage of this time in my life, this place and its resources.”

Her advice to her fellow undergrads is to aim to understand what their work ethic is.

“Once you understand how you like to function, it’s easier to focus on what you can and want to achieve.

“Then learn the skills necessary to succeed in your field: get lab experience, take upper level classes, force yourself to reflect. When you’re in a field like neuroscience you forget your passions because you might be so focused on doing the most competitive thing within the field; no, do what makes sense to you because then it isn’t a competition.”

In that moment, Radhika made it evident that the most placid people have some of the loudest, most active minds.

Writer: Stephanie Gonzalez

Editors: Emme Enojado, Enzo Plaitano, and Yasmine Sami

Don’t Reject Rejection

Every day a person may be ignored by someone, not get a job or internship they wanted, not get invited somewhere, or not have their opinion factored into an important decision. As a result, the person feels unwanted or undervalued. Multiple fMRI studies have shown that the brain processes rejection in regions similar to those that process physical pain. This feeling can escalate to depression, violence, or suicide, or be covered up by the use of drugs. So what’s the trick to overcoming this feeling? Mindfulness.

Mindfulness is “maintaining a moment-by-moment awareness of our thoughts, feelings, bodily sensations, and surrounding environment, through a gentle, nurturing lens” (Kabat-Zinn). It is crucial to live in the present moment rather than lingering on what could or could not have happened in the past or fearing what could happen in the future.

Dr. Chester and doctoral candidate Alexandra Martelli conducted a study looking at how specific brain circuits help more mindful people cope with rejection, focusing on the connections between the ventrolateral prefrontal cortex (VLPFC), which inhibits negative emotions, and the amygdala and dorsal anterior cingulate cortex (DACC), which generate emotions. The participants first completed a questionnaire that let the scientists see how mindful they were. The participants returned two weeks later to play a ball-tossing game on a computer. The game was pre-programmed, but the participants were told that other students were playing. The game started with an equal number of passes and ended by excluding the participant. The participants were in an fMRI scanner during the game, and afterward they were removed from the scanner and reflected on the experience with another questionnaire of agree and disagree statements.

The outcome of the study was that the people who had scored as more mindful on the earlier questionnaire showed less distress from being excluded, both during and after the game. The fMRI results showed that the more mindful people had less connectivity between the VLPFC and the amygdala and DACC, and less activity in the VLPFC overall. This is attributed to their accepting the experience of rejection instead of suppressing it. When people overwork their VLPFC by trying to control their emotions or change the way they think about a situation, distress and anger can build up and are eventually expressed in a negative way.

Researcher Gaelle Desbordes is currently taking fMRI scans of clinically depressed patients before and after an eight-week course in mindfulness-based cognitive therapy (MBCT), which builds on the mindfulness-based stress reduction program developed by Kabat-Zinn. The course includes focusing on their heartbeats and then reflecting on their negative thoughts, while the control group completes muscle relaxation. Her goal is to better understand mindful meditation and what types of people it can benefit the most, in order to provide an alternative to medication for treating depression and stress-related disorders. Since mindfulness is commonly associated with the ancient traditions of meditation, Desbordes hopes to find out what types of meditation help and the mechanisms behind them.

Mindfulness has shown many other benefits to the body, though exactly how it works is unknown and it is challenging to design and execute a well-run study on it. Seminal studies have shown that after eight weeks of MBCT, the immune system, blood pressure, sleep, memory, attention, and decision-making are improved. Studies have also shown that it helps veterans with PTSD. To incorporate mindfulness into your day, pay attention to your breathing and to everything your senses take in, especially the body’s physical sensations. You can do this while driving, eating, listening to music, or walking. Focus on your current thoughts and emotions, with the realization that any negative thoughts or feelings are not permanent. Focus on the moments of the day that put you in a positive mindset and gave you a sense of purpose. If it is hard to follow and focus on your stream of thoughts, write them down and observe them to clear your head. Most importantly, remember not to reject the experience the next time you get rejected.

Writer: Lauren Renehan

Editor: Samantha Stoker

Sources:

https://greatergood.berkeley.edu/topic/mindfulness/definition#what-is

https://greatergood.berkeley.edu/article/item/can_mindfulness_help_your_brain_cope_with_rejection

https://www.psychologytoday.com/us/blog/the-harm-done/201810/how-the-mindful-brain-copes-rejection