Neuroscience-Inspired Perception, Navigation, and Spatial Awareness for Autonomous Robots

Project sponsored by the Office of Naval Research (ONR)

State-of-the-art Autonomous Vehicles (AVs) are trained for specific, well-structured environments and generally fail to operate in unstructured or novel settings. This project aims to develop next-generation AVs capable of learning and adapting on the fly to environmental novelty. These systems need to be orders of magnitude more energy efficient than current systems and able to pursue complex goals in highly dynamic and even adversarial environments.

Biological organisms exhibit the capabilities envisioned for next-generation AVs. In insects, birds, rodents, and humans alike, one can observe the fusion of multiple sensor modalities, spatial awareness, and spatial memory, all functioning together as a suite of perceptual capabilities that enable navigation in unstructured and complex environments. With this motivation, the project will leverage neurophysiological insights from the living world to develop new neuroscience-inspired methods for advanced, next-generation perception and navigation in AVs. The project will advance a science of autonomy, which is critical to enhancing Department of Defense (DoD) capabilities to execute missions using ground, sea, and aerial AVs.

The project’s multidisciplinary team of investigators includes renowned researchers from Boston University, the Massachusetts Institute of Technology, and five Australia-based universities collaborating on the allied AUSI-MURI Project. The team combines experts in robotics and engineered systems with experts in neuroscience, and brings technical approaches originating in control theory, computer science, robotics, computational intelligence, machine learning, mathematics, neuroscience, and cognitive psychology. Their synergy will lead to neurobiologically inspired methods enabling breakthroughs in autonomy and robotics research. The project is led by Ioannis (Yannis) Paschalidis, director of the Center for Information and Systems Engineering and professor in the College of Engineering at Boston University.

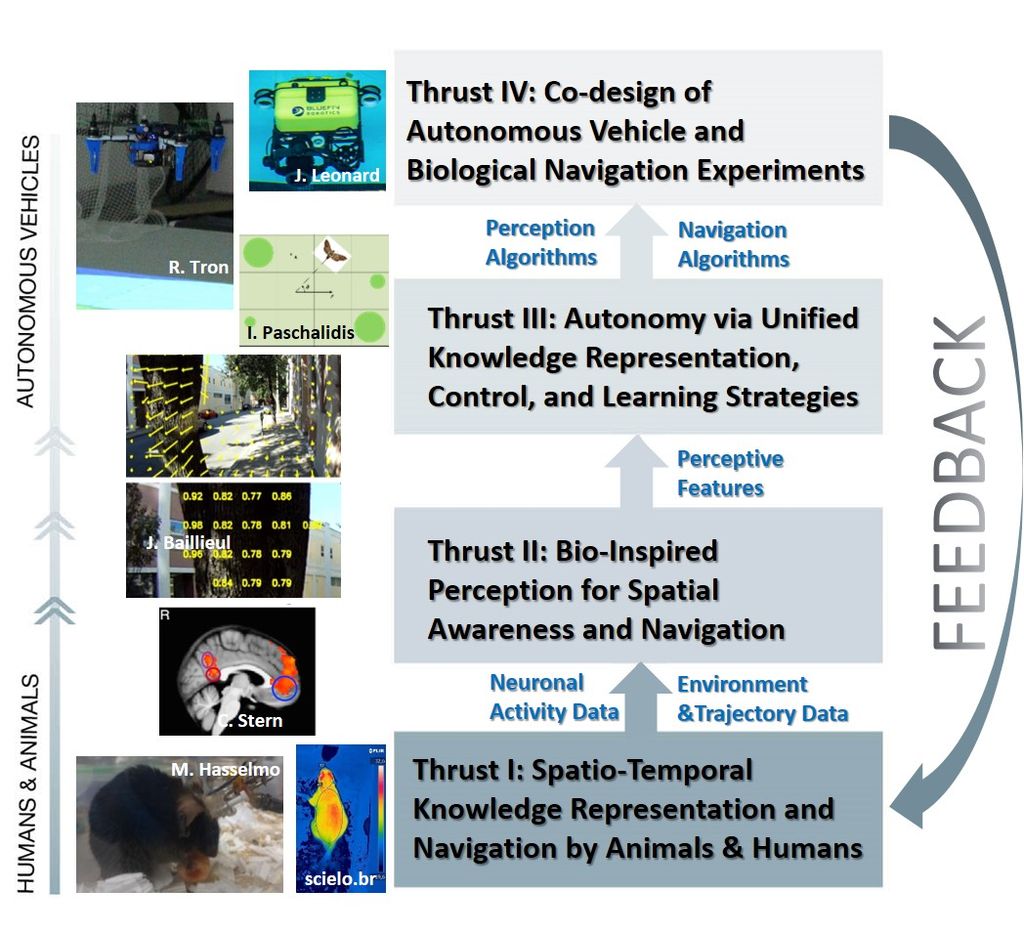

Research activities are organized around four tightly coupled thrusts:

Thrust I will observe and record animal and human spatial awareness and navigation capabilities, correlate these with neuronal activity, and develop explanatory and predictive computational models. Experiments will involve rodents, ants, and humans. Human studies will use non-invasive functional magnetic resonance imaging (fMRI) recordings.

Thrust II will develop neurobiologically inspired methods for perception to enable goal-directed navigation. It will incorporate semantic representations, control actions that enhance perception (e.g., adjusting the viewing angle), metrics of information gain, detection of changes in the environment, and visual place perception. A key output of this thrust will be a suite of perceptual features to be used for developing autonomous navigation capabilities.

Thrust III will develop next-generation AV capabilities via unified knowledge representation, control, and learning strategies. The research will use features from Thrust II, determine how to represent the environment and past experiences, develop methods to extract navigation control policies from animal and human observations, and optimize these policies to achieve autonomous goal-directed navigation in new settings.

Thrust IV will leverage the experimental testbeds available at participating institutions to design robot experiments that match the animal and human experiments under Thrust I. The purpose is to test the effectiveness and efficiency of methods developed under the project in laboratory and real-world settings. Lessons from this thrust will feed back into methodology development and motivate additional biological experiments.