A computer is a device that can be instructed to carry out sequences of calculations and other operations automatically. Because a computer can follow general sets of operations, called programs, it can perform a wide range of tasks.

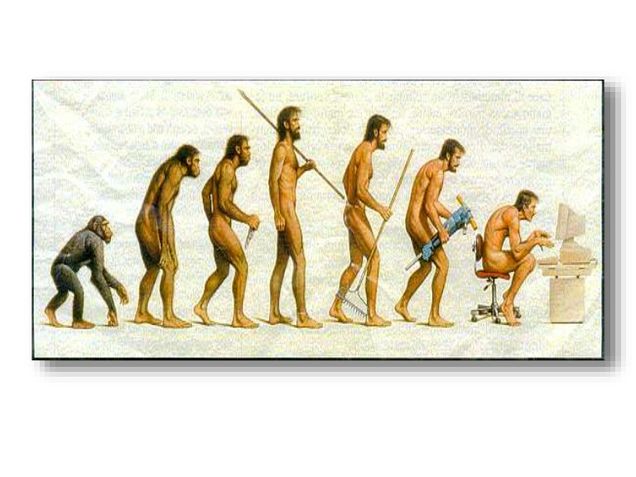

Computers are widely used in modern society as control systems for many industrial and consumer devices, including ovens, controllers and cell phones. Simple manual tools have been used to aid calculation since ancient times. Early in the Industrial Revolution, some machines were built to automate long, tedious tasks, such as guiding patterns for looms.

According to the Oxford English Dictionary, the first known use of the word "computer" was in a 1613 book, The Yong Mans Gleanings, by the English writer Richard Brathwait: "I haue read the truest computer of Times, and the best Arithmetician that euer breathed, and he reduceth thy dayes into a short number." In this usage, the term referred to a person who carried out calculations or computations. The word kept this meaning until the middle of the 20th century, although from the end of the 19th century it gradually began to take on the more familiar meaning of "a calculating machine". (Dictionary, 2017)

The word "computer" thus came to evoke the image of a machine for computing; this use of the term, meaning a calculating machine, dates from 1897.

Pre-20th century

Devices have been used to aid computation for thousands of years, mostly relying on one-to-one correspondence with the fingers. The earliest counting device was probably a form of tally stick. Later record-keeping aids in the Fertile Crescent included stones that represented counts of items, probably livestock or grain, sealed in hollow unbaked clay containers. The use of counting rods is another example. The abacus was originally used for arithmetic in commerce; a Chinese abacus, for instance, can display a number as large as 6,302,715,408 through the positions of its beads. The Roman abacus was developed from devices used in Babylonia as early as 2400 BC. (Eleanor, 2008)

Development history

Charles Babbage, often called the "father of the computer", conceived the first mechanical computer in the early 19th century. During the first half of the 20th century, many scientific computing needs were met by increasingly sophisticated analog computers, which used a direct mechanical or electrical model of the problem as the basis for computation. (Stanford Encyclopedia of Philosophy, 2005) By 1938, the U.S. Navy had developed an electromechanical analog computer small enough to be used aboard a submarine, and similar devices were developed in other countries during World War II.

The principle of the modern computer was proposed by Alan Turing in his 1936 paper "On Computable Numbers". Turing proposed a simple device that he called a "universal computing machine", now known as the universal Turing machine. He showed that such a machine can compute anything that is computable by executing instructions (a program) stored on tape.
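To make the idea of "executing instructions stored on tape" more concrete, the following is a minimal sketch of a simulator for a single-tape Turing machine. It is an illustration only, not Turing's own construction: the transition-table format, the blank symbol `_`, the function name `run_turing_machine`, and the example machine `INCREMENT` (which adds 1 to a binary number) are all hypothetical choices made for this sketch. A universal machine would go one step further and read the transition table itself from the tape.

```python
from collections import defaultdict

# Hypothetical rule format: (state, symbol read) -> (symbol to write, move, next state).
# A move of -1 means "step left", +1 means "step right". "_" is the blank symbol.
Rules = dict[tuple[str, str], tuple[str, int, str]]


def run_turing_machine(rules: Rules, tape: str, state: str = "start",
                       halt: str = "halt", max_steps: int = 10_000) -> str:
    """Run a single-tape Turing machine and return the non-blank tape contents."""
    cells = defaultdict(lambda: "_", enumerate(tape))  # unbounded tape, blank by default
    head = 0
    steps = 0
    while state != halt:
        if steps >= max_steps:
            raise RuntimeError("machine did not halt within max_steps")
        write, move, state = rules[(state, cells[head])]
        cells[head] = write  # write a symbol under the head
        head += move         # then move the head one cell
        steps += 1
    if not cells:
        return ""
    # Read back every cell that was ever visited, left to right, dropping blanks at the ends.
    lo, hi = min(cells), max(cells)
    return "".join(cells[i] for i in range(lo, hi + 1)).strip("_")


# Example machine: increment a binary number written on the tape.
INCREMENT: Rules = {
    ("start", "0"): ("0", +1, "start"),  # scan right to the end of the number
    ("start", "1"): ("1", +1, "start"),
    ("start", "_"): ("_", -1, "carry"),  # reached the blank: start adding 1
    ("carry", "1"): ("0", -1, "carry"),  # 1 + carry = 0, carry continues left
    ("carry", "0"): ("1", -1, "halt"),   # 0 + carry = 1, done
    ("carry", "_"): ("1", -1, "halt"),   # overflow: write a new leading 1
}
```

For example, `run_turing_machine(INCREMENT, "1011")` returns `"1100"` (11 + 1 = 12). The `max_steps` guard is a practical addition: a real Turing machine may run forever, and in general no program can decide in advance whether it will halt.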

Networking and the Internet

Since the 1950s, computers have been used to coordinate information between multiple locations. For example, the US military's SAGE system led to a number of special-purpose commercial systems such as Sabre. In the 1970s, engineers began to use telecommunications technology to link separate computers together, and the technologies that made the ARPANET possible spread and evolved. Over time, the network spread beyond academic and military institutions and became known as the Internet. The emergence of networking redefined the nature and boundaries of the computer. (Internet Society, 2012)

Future directions

There is active research into building computers from many promising new technologies, such as optical computers, DNA computers and quantum computers. Most computers are universal: they can calculate any computable function and are limited only by their memory capacity and operating speed.

Scientists are also investigating a range of computer architectures, including:

- Quantum computers and chemical computers

- Scalar processor and vector processor

- Non-Uniform Memory Access (NUMA) computers

- Register machines and stack machines

- Harvard architecture and von Neumann architecture

Another significant area of computer development is artificial intelligence. A computer solves problems in exactly the way it is programmed to, without regard to efficiency, alternative solutions, possible shortcuts or possible errors in the code. Programs that learn and adapt are part of the emerging fields of artificial intelligence and machine learning. AI-based products generally fall into two broad categories: rule-based systems and pattern recognition systems. Rule-based systems attempt to encode the rules used by human experts, while pattern-based systems use data about a problem to generate conclusions. Examples of pattern-based systems include speech recognition, font recognition, translation and online marketing.
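The rule-based category can be illustrated with a minimal forward-chaining sketch: the program repeatedly applies if-then rules to a set of known facts until no new conclusions appear. This is only one simple way to build a rule-based system, and the function name `forward_chain`, the rule format and the example e-mail facts below are hypothetical, chosen purely for illustration.

```python
# Hypothetical rule format: (set of facts that must all be known, fact to conclude).
Rule = tuple[frozenset[str], str]


def forward_chain(facts: set[str], rules: list[Rule]) -> set[str]:
    """Apply rules whose conditions are all known until nothing new can be derived."""
    known = set(facts)
    changed = True
    while changed:
        changed = False
        for conditions, conclusion in rules:
            # Fire a rule only if every condition holds and it adds something new.
            if conditions <= known and conclusion not in known:
                known.add(conclusion)
                changed = True
    return known


# Hypothetical rules an expert might write for sorting incoming e-mail.
EMAIL_RULES: list[Rule] = [
    (frozenset({"contains_link", "unknown_sender"}), "suspicious"),
    (frozenset({"suspicious"}), "move_to_spam"),
]
```

Calling `forward_chain({"contains_link", "unknown_sender"}, EMAIL_RULES)` derives both `"suspicious"` and `"move_to_spam"`. The contrast with a pattern recognition system is that every conclusion here comes from a rule a person wrote by hand, rather than from patterns learned from data.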

References

Dictionary, O. E., 2017. Oxford English Dictionary.

Eleanor, R., 2008. Mathematics in Ancient Iraq.

Internet Society, 2012. "A Brief History of the Internet".

Stanford Encyclopedia of Philosophy, 2005. "The Modern History of Computing".