Research

The overarching goal of the lab’s work is to develop a physical theory of cognition: an elegant set of equations that simultaneously describe observable behavioral data and the dynamics of large groups of neurons in many cooperating brain regions. This effort involves three kinds of activities. We develop theoretical expressions to describe cognitive computations that could take place in the brain. We analyze neurophysiological data from empirical collaborators and open source datasets to evaluate neural predictions of the equations. We compare predictions of the models to behavioral data. Recently, with collaborators at the University of Virginia and Indiana University, we have helped develop deep artificial neural networks incorporating equations from theoretical neuroscience.

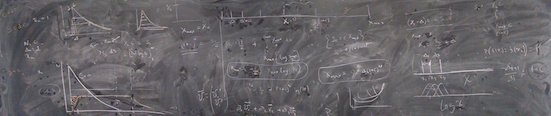

Neural theory of cognition

The basic hypothesis for neural computation is that the brain uses a single form of representation for information expressed as functions of continuous variables, along with a relatively small set of data-independent operators to manipulate those functions (Howard & Hasselmo, 2020). This hypothesis proposes that populations of neurons in many different brain regions code for the real Laplace transform of continuous variables. Neurons coding the real Laplace transform of a function over some variable should show exponentially-decaying receptive fields with a diversity of rate constants s. Other populations approximate the inverse Laplace transform, resulting in compact receptive fields over these spaces. These neurons map the rate constants onto the peaks of the receptive fields. Critically, this approach proposes that neural populations should be characterized by smoothly changing parameters. This diversity in the parameters of the population is not noise, but inherent to the information processing capacity of the brain. Theoretical considerations (Shankar & Howard, 2013; Howard & Shankar, 2018) lead to the prediction that parameters should evenly tile not the continuous dimension represented, but the logarithm of that dimension. It has been known for some time that this relationship, known as the Weber-Fechner Law, applies to many neural and psychological dimensions.
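As a rough illustration of the first half of this hypothesis, the sketch below simulates a small bank of leaky integrators, one per rate constant s, each obeying dF/dt = -sF + f(t). This is a toy example and not the lab's code: the function names, the number of units, and the range of rate constants are assumptions chosen for illustration.

```python
import numpy as np

def log_spaced_rates(s_min, s_max, n):
    """Rate constants s evenly spaced on a logarithmic axis (illustrative choice)."""
    return np.geomspace(s_min, s_max, n)

def laplace_memory(signal, rates, dt):
    """Run one leaky integrator per rate constant: dF/dt = -s * F + f(t).

    The input is held constant within each time step, so the update is
    exact for that piecewise-constant input.
    Returns an array of shape (len(signal), len(rates)).
    """
    rates = np.asarray(rates, dtype=float)
    decay = np.exp(-rates * dt)        # per-step exponential decay of each unit
    gain = (1.0 - decay) / rates       # contribution of a constant input over one step
    state = np.zeros_like(rates)
    history = np.empty((len(signal), len(rates)))
    for i, f in enumerate(signal):
        state = decay * state + gain * f
        history[i] = state
    return history

if __name__ == "__main__":
    dt = 0.01
    rates = log_spaced_rates(0.1, 10.0, 8)   # hypothetical range of s
    signal = np.zeros(500)
    signal[0] = 1.0 / dt                     # brief pulse approximating a delta function
    history = laplace_memory(signal, rates, dt)
    # After the pulse, each unit decays exponentially at its own rate s.
    print(np.round(history[::100], 3))
```

After a single brief input, every unit relaxes exponentially, and the population as a whole holds the real Laplace transform of the input history sampled at the chosen values of s.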

The level of description provided by these equations allows one to easily move back and forth between descriptions of the behavior of many neurons and cognitive models that can be evaluated with behavioral data. For instance, one can build cognitive models of a wide variety of memory tasks if one assumes a logarithmically-compressed temporal working memory of the past (Howard, et al., 2015; Howard, 2018). In addition to working memory, one can write out Laplace-domain versions of the classic evidence accumulation models used to account for data from simple decision-making tasks (Howard, et al., 2018). This raises the possibility that one could build computational models of behavior composed of the same equations (i.e., the same kind of neural circuit) throughout (Tiganj, et al., 2021; Tiganj, et al., 2019). Ongoing work attempts to build cognitive models for reinforcement learning as an estimate of future events as well as models of sequential planning and movement.

Neural representations of time and space and other variables

So-called “time cells” fire sequentially in the moments following presentation of a salient stimulus. Time cells were originally characterized in the rodent hippocampus (Pastalkova, et al., 2008; MacDonald, et al., 2011). Time cells were discovered around the time we first began working on a theory for representing the time of past events (Shankar & Howard, 2010) and the theory made several specific predictions (see Howard & Eichenbaum, 2013) that have subsequently been confirmed by many labs independently. For instance, different stimuli trigger distinct sequences so that populations of time cells actually code for “what happened when” in the past (Tiganj, et al., 2019; Cruzado, et al., 2020; Taxidis, et al., 2020). Recent empirical work in our lab has focused on testing more specific questions. Collaborative work with Elizabeth Buffalo’s lab at the University of Washington has shown that the firing of neurons in entorhinal cortex decays exponentially with a variety of time constants (Bright, Meister, et al., 2020), implementing the equation for the real Laplace transform of time (see also Tsao, et al., 2018). In collaboration with scientists studying calcium recordings from rodents, including researchers in the Center for Systems Neuroscience at BU, we showed evidence suggesting that time cell-like sequences could persist for several minutes (Liu, et al., 2022). A recent preprint reporting collaborative work with Michael Hasselmo’s lab at BU shows that hippocampal time cells fire in a very special kind of sequence (Cao, Bladon, et al., 2021): the times at which the time cells peak are evenly spaced on a logarithmic scale. This is as predicted by theory (Shankar & Howard, 2013; Howard & Shankar, 2018) and provides a mathematical connection between the neural representation of time in the brain and many other sensory variables that obey the Weber-Fechner Law.
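To make "evenly spaced on a logarithmic scale" concrete, here is a minimal sketch of idealized time cells. It assumes one particular approximation to the inverse Laplace transform (the Post formula of order k, which for a delta-function input gives a response proportional to (t/τ*)^k exp(-k t/τ*) peaking at t = τ*). The value of k, the range of peak times, and all function names are illustrative assumptions, not values fitted to data.

```python
import math
import numpy as np

def time_cell_response(t, tau_star, k=8):
    """Idealized response of a time cell a time t after a brief stimulus.

    Assumes a Post-type inverse of order k (hypothetical choice k=8);
    the response peaks at t = tau_star.
    """
    coeff = k ** (k + 1) / math.factorial(k)
    x = np.asarray(t, dtype=float) / tau_star
    return coeff / tau_star * x ** k * np.exp(-k * x)

def log_spaced_peaks(tau_min, tau_max, n):
    """Peak times evenly spaced on a logarithmic axis."""
    return np.geomspace(tau_min, tau_max, n)

def half_max_width(t, response):
    """Width of the time field at half of its maximum firing."""
    above = t[response >= response.max() / 2.0]
    return above[-1] - above[0]

if __name__ == "__main__":
    t = np.linspace(0.0, 60.0, 60001)
    for tau_star in log_spaced_peaks(0.5, 8.0, 5):
        r = time_cell_response(t, tau_star)
        # Peak time and relative field width for each cell.
        print(tau_star, t[np.argmax(r)], half_max_width(t, r) / tau_star)
```

In this sketch adjacent peak times differ by a constant ratio rather than a constant difference, and the width of each time field grows in proportion to its peak time, which is the Weber-Fechner-like signature described above.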

Our ongoing work studies how temporal representations change systematically across brain regions. Moreover, we intend to test the hypothesis that neural representations of different types of information use the same kind of representation as time and space. We hope to study whether evidence accumulation in the brain uses the same kind of equations as the neural representation of past time. We are also extremely interested in neural representations of the time of future events.

Deep networks inspired by theoretical neuroscience

Whatever equations govern cognition have been shaped by countless eons of evolution, suggesting that they serve some adaptive purpose. Incorporating the equations that govern the brain into deep networks should endow these networks with abilities and properties that are not possible with other approaches and result in more robust and adaptive networks. The DeepSITH model (Jacques, et al., 2021) builds a deep network of logarithmically-compressed time cells. The DeepSITH model was compared to RNNs, which are widely used in both computational neuroscience and artificial intelligence. Although the models performed roughly similarly on many problems, the DeepSITH model dramatically outperformed generic RNNs on problems that required information to be maintained for a longer time. More strikingly, the SITHCon model adds a convolutional layer with shared weights to a deep network of logarithmically-compressed time cells and was trained on a series of time series classification tasks (Jacques, et al., 2022). For instance, networks would be trained to recognize the identity of spoken digits. Although all networks evaluated can perform this task, the SITHCon network generalizes in a human-like way. You can do this experiment with some friends: say the word “seven” as slowly as you can and see if they can still recognize what digit it is. Although not trained on faster or slower speech, SITHCon spontaneously generalizes (perhaps so did your friends). The scale-invariant property is possible only because this model uses the same equation, logarithmic compression, that is predicted by our theoretical work and that we observed in hippocampal time cells (Cao, et al., 2021). An active interest of the lab is integrating cognitive models using logarithmically-compressed Laplace domain representations into deep artificial neural networks.
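One way to see why logarithmic compression can support generalization across speeds is the toy calculation below. It is not the SITHCon code. It assumes idealized units whose activation depends only on the ratio of elapsed time to the unit's peak time, with peak times in a geometric series; the tuning curve, the ratio c, and all names are hypothetical. Under those assumptions, stretching time by a factor of c^m simply shifts the population pattern by m units, and shared convolutional weights followed by pooling over units would treat a shifted pattern the same way.

```python
import numpy as np

def unit_activation(delay, tau_star, k=8):
    """Scale-free tuning curve (assumed form): depends only on delay / tau_star.

    Normalized so the activation equals 1 when delay == tau_star.
    """
    x = delay / tau_star
    return x ** k * np.exp(-k * (x - 1.0))

def population_pattern(delay, tau_stars, k=8):
    """Activation of every unit for a single event that occurred `delay` ago."""
    return unit_activation(delay, np.asarray(tau_stars, dtype=float), k)

if __name__ == "__main__":
    c = 1.25                                   # hypothetical ratio between adjacent peak times
    tau_stars = 0.1 * c ** np.arange(40)       # geometric series of peak times
    m = 3
    normal = population_pattern(1.0, tau_stars)
    slowed = population_pattern(c ** m, tau_stars)   # same event, time stretched by c**m
    # The slowed pattern is the normal pattern translated by m units.
    print(np.allclose(slowed[m:], normal[:-m]))
    # A max over units is therefore unchanged by the rescaling.
    print(np.isclose(slowed.max(), normal.max()))
```

With linearly spaced peak times the same rescaling would stretch the pattern rather than translate it, so a fixed set of convolutional weights would no longer match.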