Our Brain on Exercise

A large body of research has demonstrated the power of exercise to support cognitive function, with effects that can last for a considerable time. An emerging line of scientific evidence indicates that the effects of exercise are longer lasting than previously thought, to the extent that future generations can inherit them. The action of exercise on the epigenetic regulation of gene expression appears central to building an “epigenetic memory” that influences long-term brain function and behavior. New developments in the epigenetic field connect exercise with changes in cognitive function through mechanisms including DNA methylation, histone modifications, and microRNAs (miRNAs). Understanding how exercise promotes positive long-term cognitive effects is crucial for directing the power of exercise to combat neurological and psychiatric disorders.

The positive effect of exercise on learning and memory in humans and animals has received abundant support. In older adults, exercise has been shown to improve cognitive performance and counteract the mental decline associated with aging, and these effects have been associated with modifications in hippocampal size. In one study, 21 women between the ages of 67 and 81 participated in exercise for 80 minutes per day. After 24 weeks, their hippocampal volume increased. In children, exercise has been found to be associated with cognitive performance: children who engaged in greater amounts of aerobic exercise generally performed better on verbal, perceptual, and mathematical tests. Recently, a meta-analysis study reported that a single bout of moderate aerobic exercise improves inhibitory control, cognitive flexibility, and working memory in preadolescent children and in older adults, indicating that beyond the well-known effects of long-term exercise on the brain, acute exercise also can be used as a tool for situations demanding high executive control. Interestingly, a single session of both aerobic and resistance exercise has been found to enhance memory consolidation in rats.

Epigenetic research centers on the analysis of changes on top of the genome that do not involve alterations in the nucleotide sequence. The two most studied epigenetic mechanisms are covalent modifications of DNA (methylation) and of histone proteins (e.g., acetylation and methylation), along with their resulting effects on gene expression. The phosphorylation and methylation of histones are also tightly associated with the regulation of learning and memory.

In agreement with its role in cognition, physical exercise can coordinate the action of genes involved in synaptic plasticity, with resulting effects on memory preservation. For example, while exercise enhances the expression of genes (e.g., Bdnf, igf-1, and creb) that positively regulate memory consolidation, it downregulates genes (e.g., PP1 and calcineurin) with a repressive role in these events. Evidence shows that DNA methylation is an important mechanism by which exercise affects gene expression. It is known that exercise differentially modulates the methylation pattern of specific CpG islands in the Bdnf gene, decreases hippocampal expression of DNMTs, attenuates the global methylation changes induced by stress, and increases Bdnf transcription through demethylation of its promoter IV.

It has been shown that the acetylation of histone proteins is a requisite for long-term memory. For example, intrahippocampal injection of global HDAC inhibitors enhances long-term potentiation. The pro-cognitive function of HDAC inhibitors is partially attributed to their ability to increase histone acetylation. Interestingly, a previous study has shown that, like HDAC inhibitors, physical exercise has the ability to transform a learning event that does not normally lead to a stable memory trace into a long-lasting form of memory (Intlekofer et al., 2013). Additionally, it was found that physical exercise increases histone acetylation and reduces HDAC expression and activity in the hippocampus.

In a recent study, Zhong et al. (2016) observed that exercise-induced memory improvements were associated with enhanced expression of cAMP response element-binding protein (CREB)-binding protein (CBP) in the hippocampus. Mechanistically, the recruitment of CBP triggers histone acetylation and the formation of a transcriptional complex at the promoters of many CREB-target genes to activate transcription. CBP mutant mice exhibit profound deficits in synaptic plasticity and long-term memory. Altogether, these findings raise the idea that physical exercise promotes synaptic plasticity and memory improvements by altering the balance of HDAC enzymatic activity to favor a permissive state of chromatin, leading to the transcriptional activation of a myriad of genes with preponderant roles in cognition.

College students are known for being arguably the unhealthiest kind of humans. Between studying and managing social lives, exercise and self-care can be neglected despite their obvious importance. Sadly, this neglect might be preventing us from performing at our best as students. So, let me ask you this: how are you going to exercise today?

Writer: Kawtar Bennani

Editor: Nathaniel Meshberg

Sources:

https://www.nature.com/articles/npp2013104

https://www.sciencedirect.com/science/article/abs/pii/S0306452215011422

FACULTY FEATURE: Jeff Gavornik

As one enters the room on a rainy Monday afternoon, it’s difficult to escape the faint impression of mad science afoot. Papers and folders lie scattered over the floor and work desks in a secret pattern. In front of the spacious window sits a singular Dyson bladeless fan, eternally cool with its space age aesthetic. The whiteboard walls are plastered with various markings (equations, questions, drawings scientific and whimsical) that fill the room with scholarly energy. This is the office of Dr. Jeff Gavornik; and in the middle, tea in hand, sits the man himself.

Dr. Gavornik teaches NE203/BI325: Principles of Neuroscience, one of the core courses for neuroscience students at BU. He currently works as an Assistant Professor of Biology at BU and P.I. of his own Gavornik Lab. However, like most of us, Dr. Gavornik hasn’t always been here; his unique path to BU has been filled with interesting developments and detours. Growing up, his father was a pilot in the Air Force, and so Dr. Gavornik’s childhood was spotted with relocations from Alaska (his birthplace) to Arizona, Texas, Ohio, and back to Texas again. Ultimately, Texas became his ‘home,’ as he spent his high school years there before attending Rice University in the late 90s for undergraduate studies in Electrical and Computer Engineering and in History.

While studying at Rice, Dr. Gavornik interned for two stints at MITRE in Bedford, MA, a not-for-profit research and development corporation partnering closely with the federal government. There, he worked on a project concerning acceptance testing for the production of the now well-known Iridium satellite network. His experience proved useful: as his undergraduate studies were winding down, he was approached by Boeing’s space program for work on their own Iridium-related contract. Unfortunately (or luckily), Boeing’s Iridium contract fell through, and Dr. Gavornik was shifted to work on the International Space Station with NASA.

Of his time there, Dr. Gavornik recalls that “there were very interesting things about it,” including cool technical developments such as a “neat robot arm designed so it could reach over itself, from one part to another part, and sort of inchworm its way across the Space Station.” In addition, the program paid for his Master’s Degree at Rice in Electrical Engineering (2003), which he earned simultaneously. However, the day-to-day tedium proved oppressive. The ISS was an international effort on most levels, he explained, and so it was rife with the inevitable (and painful) sorts of debates and compromises which hinder a project’s tangible progress.

“Meetings and TPS forms, planning, sitting in these really long international meetings… falling asleep for days at a time, while people were arguing about whose responsibility these things should be – the day-to-day stuff wasn’t super exciting,” he said. It didn’t seem to get better either, as he remembers being “surrounded by people who were my age now, and they were doing the exact same stuff I was doing, and I was already sort of getting bored with it.”

Turning away from industry, Dr. Gavornik moved from Houston to attend graduate school at the University of Texas in Austin.

“I didn’t honestly even know what neuroscience was, and didn’t necessarily intend to,” he said. “I applied, and sort of my philosophy was, ‘I’ll take classes broadly, and whatever I think is interesting, I’ll do.’ Because I’d had the other experience of basically doing something I wasn’t super excited about for a job, and so I knew that wasn’t the most fun in the world.”

This approach still netted Dr. Gavornik a Ph.D. in Electrical Engineering in 2009, but along the way he found interest in neuroscience, recalling his introductory class in computational neuroscience with particular fondness to this day. After UT, Dr. Gavornik performed postdoctoral research at MIT for five years in what he described as a “pressure-cooker” environment, “where everybody just feels like they’re so wound tight and really have to do well, or the world’s going to end.” It wasn’t until 2015 that he finally made BU his home, due partly to fortuitous circumstance.

He relates:

“You’re sort of at the mercy of who has job applications posted when you’re at the right point in your career to be competitive, and so really the thing that brought me to BU was the fact that they had a position posted at the time I was applying, appropriate for what I was looking for (in systems neuroscience),” Dr. Gavornik said. “If they didn’t have the posting, I could have ended up at Purdue, or wherever. That said, I think it turned out to be a really great place for me, for a variety of reasons. One of them is that BU is really supportive of neuroscience right now as an area of expertise…. From the perspective of someone that’s a faculty member here, it’s great. They want you to do well, there’s real support for doing the experiments, and there’s support for having the equipment necessary.”

Beyond the institutional support, Dr. Gavornik also relishes the overall academic environment at BU.

“It’s a very nice combination, I think, of being a very good university with very good students interested in science, hard workers, but also who seem enthusiastic and happy to be here,” he said. “There’s a lot of places where it’s very smart, and it’s super aggressive. BU, in my experience, is not that way – it’s very smart, and it’s friendly about it.”

Bouncing off his praise of the student environment, we decided to ask Dr. Gavornik what advice he could provide to the students themselves. His answer got into more detail, and emphasized basic principles of scholarship and life in general.

“These things just don’t fall in your lap. You have to actively work for the things you want, and even the things you may not want but are important for you to have. Do the leg work.”

More specifically, he noted the significance of putting yourself out there for networking events, and the importance of having Plans A and B to work towards.

“You have to be realistic. With any sort of job where there are a high number of people interested and technically qualified, unless you are an unusual individual or in unusual circumstances, you might just have to get lucky,” he said. “You have to recognize that there are certain things out of your control, and recognize there’s a certain degree of randomness, and plan for the possibility that what it is that you want to do isn’t going to actually happen.” Correspondingly, even though he left industry for academia, Dr. Gavornik underscored the need to understand the opportunities in industry when considering a future with academic goals, or vice versa.

When one talks with Dr. Gavornik about the future itself, however, things become a bit hazier (and brighter). There’s a feeling, he explains, that neuroscience is on the edge of a very productive period resembling that of the physics revolution in the early 1900s, or NASA during its most exciting period of pioneering space exploration.

“In a sense, the entirety of our experience of the world is a consequence of how the neurons in our brain are wired together,” he said. “If we understood how the brain works, then in principle we could understand the entirety of the experience of being human.” What a golden age of scientific discovery that would be.

However, as Dr. Gavornik says, we still struggle to fully understand the function of even the simplest neurological systems, and thus “the big questions like ‘what is the nature of existence as defined by the brain’ are yet to be answered.” To know that these ‘big questions’ still lie as sleeping giants invokes a sense of wonder, and genuine inspiration – they are why Dr. Gavornik says he began studying neuroscience in the first place. Hopefully, the answers will reveal themselves soon.

Writer: Brian Privett

Editors: Emme Enojado, Enzo Plaitano, and Yasmine Sami

FACULTY FEATURE: Paul Lipton

Outside of Boston University, when the work hours come to a pause, Dr. Paul Lipton lives a life of adventure. He zooms past cars on his motorcycle, with his longtime fantasy of becoming a race car driver in mind. He has explored the beauty of the South Pacific Ocean, scuba diving in Fiji and New Zealand. He has climbed up mountains and down glaciers in Alaska, and has set foot on European streets a dozen times.

But this life of adventure and excitement does not end when he bikes into campus every morning. Here, he is the director of the Undergraduate Program in Neuroscience, leading and guiding passionately curious minds. He is an associate director for the Boston University Kilachand Honors College and a faculty advisor for the Mind and Brain Society. Here, working alongside students and faculty, he instills an invaluable excitement for learning.

As a child, Dr. Lipton was constantly surrounded by conversations on human nature and the psychology of people, as his father was an English professor who taught about the psychology of adolescents through literature. This exposure developed a deep curiosity to understand how people work, but he found that just thinking about it from a psychological perspective was unsatisfying — he wanted to learn about the mechanisms behind the brain.

However, neuroscience was a fledgling field at the undergraduate level when he was in college, and Dr. Lipton graduated with a B.A. in Economics at SUNY Buffalo. Studying neuroscience did not cross his mind until his father connected him with one of his colleagues in the neurobiology department at Stony Brook University.

“In my senior year of college, I was trying to decide if I should apply to medical school or law school; those were the two options at the time,” Dr. Lipton said. “My father got me in contact with his colleague who happened to be a neurobiologist, and he told me about some really cool experiments that I had never even conceived of before.”

After speaking with his father’s colleague, he also developed a newfound fascination for the field. Exploring the insights of the human mind through experimental methods mesmerized him, leading him to apply to the graduate program.

“It was not a very well informed decision,” Dr. Lipton said. “I knew very little about neuroscience, had never even taken a neuroscience class before, and had only taken one introductory psychology course. I applied blindly, and was accepted.”

From there, he studied the neurocircuitry that supports different types of learning and memory at a cognitive neurobiology laboratory at Stony Brook. When this lab transferred up to Boston, Dr. Lipton also moved to the city and stayed here till he got his PhD at Boston University in 2000.

Dr. Lipton returned to Boston University’s neuroscience department in 2003 as an academic director, and has been the director of the undergraduate program since 2013. As the director of the Undergraduate Program in Neuroscience, Dr. Lipton is responsible for overseeing the curriculum, managing course changes and new policies, and communicating with program faculty and the dean’s office. Outside of the neuroscience department, Dr. Lipton is one of two associate directors for the Boston University Kilachand Honors College, where he oversees and revises the curriculum and works with students on their senior keystone projects.

Every fall, Dr. Lipton teaches NE 101: Introduction to Neuroscience — the first core neuroscience course that majors take. This course on the biological basis of behavior and cognition covers topics from neuroanatomy and biology to the basics of neuropsychiatric disorders. Approximately 150 students fill the lecture room three times a week, and the room constantly brims with energy and engagement.

“Almost every week there’s something new in class, and the characters I see on a weekly basis make me laugh,” Dr. Lipton said. “Some of the individuals in that class make it a very fun place to be. They make it light, different — so the class never gets old.”

Outside of class, students come to Dr. Lipton’s office hours, where he answers any questions about material that students have and opens the space to discussions.

“I love the conversations I have with students,” Dr. Lipton said. “I love hearing each and every individual student’s story: what makes their particular experiences both here and outside of the classroom and the university unique.”

Dr. Lipton also says that some of his most notable experiences come when students put their ideas into action. These include events hosted by the BU Mind and Brain Society (such as BRAIN Day, an annual event that educates the Boston community on the wonders of the human mind, and Miracle Berries, an event that lets participants experience how taste perception changes by eating a berry) as well as independent programming.

“One year, a student wanted to put on a symposium about music and the brain, and he recruited four internationally recognized scholars on music and neuroscience, the Boston Symphony Brass Quartet to perform in the evening, and had about 300 people register for the event,” Dr. Lipton said. “To see one student’s dedication and then follow through for putting together a program like this was phenomenal.”

To Dr. Lipton, one of the reasons why the neuroscience program at BU is unique is because of the people: from the contagious enthusiasm of the students who tread Commonwealth Avenue to the dedication of the professors and faculty, who continuously put students and their learning first.

“The people make the place, they define the culture of the place, they are the heart of what makes up this place — and what I think is exciting about being here in Boston is the unbelievably rich community of neuroscience that’s going on between all the different universities,” Dr. Lipton said. “That exact excitement is what makes teaching never get old. Constantly seeing a new group of students express this amazement for the way the system works keeps me invigorated. It’s the enthusiasm of the students that’s really unlike anything I have ever seen.”

Written by: Emme Enojado

Editors: Yoana Grigorova, Stephanie Gonzalez, Enzo Plaitano

ALUMNUS FEATURE: Abhinav Prasad

Every Saturday, a first-grade Abhinav Prasad would follow his father to a weekly math tutoring program, and as his father would run the program, which assisted various schools in the Fremont, California area, Abhinav would teach. Here, long before his mind was on college or careers, his small hand would glide along sheets of paper, clenching a #2 pencil that would write numbers, equations, and solutions. All of this work was not for himself, but for the sake of others. For Prasad, teaching, advising, and mentoring were constantly woven into his experiences as a child. He recognized his ability to explain concepts to people, and took advantage of this gift by taking on positions such as peer mentor and academic coach as an undergraduate student at Boston University. When Prasad, class of 2017, graduated with a bachelor’s degree in Neuroscience in three years, he completed his circle of lifelong advising by taking on the role of Program Administrator for the Undergraduate Program in Neuroscience.

Prasad tackles many responsibilities in his office on the second floor of 2 Cummington Mall. His responsibilities include advising, managing parts of the neuroscience program such as scheduling, and handling finances, including managing the budget and placing orders. To Prasad, the most rewarding experiences are when overwhelmed students come in, and he’s able to help them through the situation, plan what to do for the semester, and show them how to use the tools they already have to succeed in college. “Before anything, it’s part of my job to work with students just to make sure they’re okay,” Prasad said. “I want to make sure that they’ve found a place here and are comfortable with their surroundings. I know that when I came here my freshman year, being at BU amongst a bunch of strangers made me feel like I couldn’t reach my goal to advance myself. So I want to make sure that students are motivated and just happy with being in the place that they are.”

Prasad graduated from a competitive high school, one with an unhealthy atmosphere of over-ambitious students. When choosing colleges, his options were down to two paths: either remain close to home and stay in California or come to Boston University. “I decided that I wanted to escape that bubble I grew up in, escape that crowd and come to BU to start fresh,” Prasad said. “When I was deciding where to go, I talked to current students, and they told me about how BU is a place with academically oriented students, which is exactly what I wanted: an atmosphere where other students would be willing to work with me and have healthy competition.”

According to Prasad, healthy competition was precisely what he found at BU. As he took the 3,000-mile journey from west to east coast, Prasad had a clear idea of how he wanted to transform himself as he moved from high school to college. “I was fairly unmotivated in academics in high school, believe it or not,” Prasad said. “Had my high school ranked, I probably would have been in the bottom 10% of my class. So, I looked at BU as a second chance to show myself and those around me that I am academically inclined and that I do want to succeed in college and my career.”

Although he faced culture and environment shock as he took his first steps on Commonwealth Avenue, one thing that helped him adapt to his new life was FY 101, the 1-credit seminar-style course for new students at BU. “That was a class that introduced me to a lot of people, to BU, and to Boston,” Prasad said. “Coming to BU, where the people and environment are different, was a challenge, and FY 101 helped me acclimate and get over my initial fear of interacting with people.”

Prasad became involved in research during his fourth semester of college at the Center for Autism and Research Excellence, but after a couple of months, he decided to try something different. At the end of the same semester, he contacted Arash Yazdanbakhsh, his professor for NE 204: Introduction to Computational Models of Brain and Behavior, and began working in his lab that summer. This experience helped him find his senior thesis topic, which focused on creating a novel experience to quantify cognitive processes of perceptual priming, or the identification of an incomplete word in a word-stem completion test. In the future, Prasad would like to compare the performance of healthy control subjects to the performance of individuals with Parkinson’s and Alzheimer’s disease. The eventual end goal, he says, is to figure out whether this test would be able to identify early cognitive markers for these neurodegenerative diseases.

This year, Prasad is in the process of applying to medical school. “At the beginning of college, I came in with a set plan: I’m going to major in neuroscience, go to medical school, and become a neurosurgeon,” Prasad said. “But I think college has really helped me broaden my horizons. I’m not going to decide exactly what I’m going to do, and that’s okay. I’m sure I’ll go into medical school and have various clinician experiences that will draw me into various fields in medicine, but as for exactly what type of clinician work, I’m leaving that up to myself in four years.”

After years of experience and advising, Prasad’s outlook on life and college has changed from when he first stepped onto Commonwealth Avenue. “College is a time for you to explore your interests,” Prasad said. “If you come in with a strict academic focus, exactly how you want your GPA, exactly where or what you want to do after graduation, sometimes that will bring on a lot of unnecessary pressure. Instead, just allow yourself to explore your interests and see where one course takes you.”

Writer: Emme Enojado

Editors: Yoana Grigorova, Enzo Plaitano, Yasmine Sami

Vitamin B6 May Improve Dream Recall

Vitamin B6 is a water-soluble molecule that is involved in many vital body functions such as metabolism of glucose, synthesis of neurotransmitters, immune function, and hemoglobin formation. Adults usually need only around 1.3 mg of vitamin B6 per day, but one study led by Pfeiffer (1975) found that taking 240 mg of vitamin B6 before sleep can improve dream recall. The study also concluded that an inability to recall dreams can be due to a lack of vitamin B6 in the diet. Ebben et al. (2002) also found that ingesting high doses of vitamin B6 can intensify the emotions, color, vividness, and bizarreness of dreams. In this study, 12 participants took a placebo, 100 mg of vitamin B6, and 200 mg of vitamin B6 for five days each, with a two-day washout period between conditions. When people took 100 mg of vitamin B6, their dream salience score was 30% higher than with the placebo, and when they took 200 mg, it was 50% higher. Thus, there seems to be a dose-dependent relationship between vitamin B6 and dream salience. But why does vitamin B6 have this effect? Ebben theorized that vitamin B6 helps synthesize serotonin, which represses REM sleep in the first few hours of sleep, and REM sleep is responsible for the dreams that people can remember. As a result, in the last few hours of sleep, there is a REM sleep rebound, with a greater amount of REM sleep and intensified dreaming, leading to higher dream salience. Another theory, proposed by Goodenough (1991), is that vitamin B6 also disturbs sleep, leading to frequent awakenings during the night. This gives the brain a chance to convert the short-term memory of the dream into long-term memory.

However, one study by Aspy et al. (2018) suggested that vitamin B6 does not increase dream salience, but only increases the amount of dream content that is recalled. This study used a larger sample of 100 participants, with around 30 people in each group: placebo, vitamin B6, and B complex. Dream recall frequency and dream count did not differ significantly between the placebo and vitamin B6 groups; dream recall frequency examines how many people in the sample recall any dreams, and dream count measures how many dreams people remember. However, on the Dream Quantity measure, the vitamin B6 group recalled significantly more dream content, 64.1% more than the placebo group. The researchers also found that vitamin B6 did not have any significant effects on sleep disturbance, sleep quality, or tiredness after waking, discounting Goodenough’s theory. On the other hand, the B complex group did show a significant decrease in sleep quality and an increase in tiredness after waking, despite ingesting the same amount of vitamin B6. This indicates that one of the other B vitamins counters the effects of vitamin B6; Aspy suggests that it is vitamin B1. This study seems to call into question the effects of vitamin B6 on dream salience and recall; however, its findings are limited by the way the study was conducted. Aspy et al. (2018) used different participants in each condition, while Ebben et al. (2002) used the same participants in every condition. As a result, Ebben’s study can account for subjective individual differences and measure the differences between conditions more objectively. Nevertheless, Aspy et al. used a bigger sample size, which may make their data more reliable.

Although more studies should be conducted to test the effects of vitamin B6 on sleep and dream salience, there seems to be one strong indication from all these studies: vitamin B6 increases your ability to recall dream content. The research also suggests that vitamin B6 aids in lucid dreaming, but again, further studies need to be conducted. If you cannot seem to remember any of your dreams after waking up, try cautiously increasing the intake of vitamin B6 in your diet, and perhaps you will be able to recall more dreams.

Writer: Audrey Kim

Editor: Sophia Hon

Sources:

Ebben, M., Lequerica, A., & Spielman, A. (2002). Effects of pyridoxine on dreaming: A preliminary study. Perceptual and Motor Skills, 94, 135–140.

Pfeiffer, C. (1975). The sleep vitamins: Vitamin C, inositol, and Vitamin B-6. In C. Pfeiffer (Ed.), Mental and elemental nutrients. New Canaan, CT: Keats.

Goodenough, D. R. (1991). Dream recall: History and current status in the field. In S. J. Ellman & J. S. Antrobus (Eds), The mind in sleep: Psychology and psychophysiology (2nd ed.). Oxford, England: John Wiley.

Aspy, D. J. (2018). Effects of vitamin B6 (pyridoxine) and a B complex preparation on dreaming and sleep. Perceptual and Motor Skills, 0(0), 1–12.

https://ods.od.nih.gov/factsheets/VitaminB6-HealthProfessional/

Sitting for too long may be bad for your brain

Do you consider yourself a couch potato? According to a new study done at UCLA, sitting for prolonged periods of time may be harmful for your brain. Thirty-five participants aged 45 to 75 were recruited and asked about their physical activity patterns, including how many hours they spent sitting down during the previous week. The participants reported average sitting times of three to fifteen hours per day. Researchers then scanned their brains with MRI and compared the thickness of their medial temporal lobe (MTL), a brain region important for learning and forming episodic memory.

They found that participants who spent more time sitting per day had thinner MTL structures and that sedentary behavior is a significant predictor of MTL thinning. They also found that every additional hour of sitting was associated with a 2% decrease in MTL thickness after adjusting for the subjects' ages. However, the researchers did not find any correlation between physical activity levels and the thickness of the structures, suggesting that physical activity may be insufficient to offset the negative effects of sitting for extended periods. The finding that sedentary behavior is associated with reduced MTL thickness is consistent with studies showing that extended sitting times increase the risk of heart disease, diabetes, and premature death. While the researchers focused on the hours spent sitting, they did not ask whether the participants took any breaks during this period. They also could not say why sitting for extended periods is associated with MTL thinning. One theory is that if sitting for too long compromises the supplies of oxygen and nutrients the brain needs to stay healthy, then it would be reasonable to expect the brain to be unable to maintain its proper volume and start thinning.

For future studies, the researchers plan to follow a group of people for a longer period of time in order to determine whether sitting causes the MTL thinning and whether other factors such as race, gender, and weight also play a role in brain health. MTL thinning is considered a precursor to dementia in middle-aged and older adults. The researchers believe that reducing sedentary behavior may prove fruitful in improving brain health in people at risk of Alzheimer's disease, a condition that reduces the volume of memory-making structures in the MTL, including the hippocampus and the entorhinal cortex.

Writer: Nathaniel Meshberg

Editor: Audrey Kim

Sources:

http://www.latimes.com/science/sciencenow/la-sci-sn-sitting-brain-memory-20180413-story.html

https://www.sciencedaily.com/releases/2018/04/180412141014.htm

ALUMNUS FEATURE: Courtney Bayruns

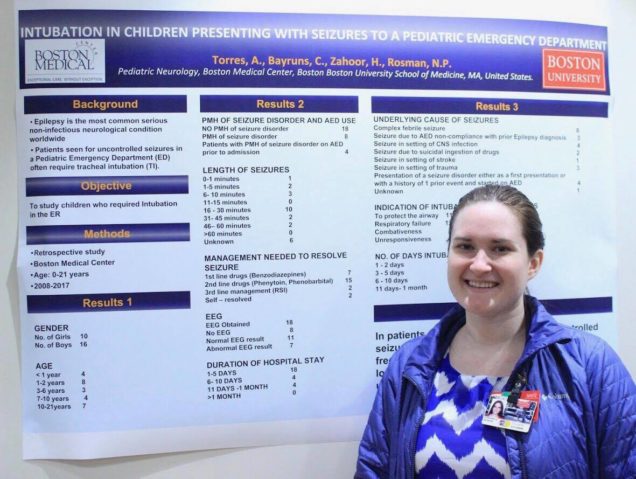

On April 9, 2018, Boston University School of Medicine (BUSM) hosted its Fourth Annual Carlos S. Kase Neurology Research Symposium where medical students, residents, and fellows had the opportunity to present their clinical research. This is one of the many events hosted by both BUSM and Boston Medical Center (BMC) that welcomes undergraduate students interested in neuroscience and clinical research to attend and gain further insight into the field.

A large exhibit hall decorated with scientific posters is filled with curious students flocking around each presenter. Passionate discussions about new discoveries in neurological disorders research echo throughout the room. Attendees gather as Courtney Bayruns, a third-year medical student, presents her research on intubation rates in pediatric emergency department patients suffering from seizures. Bayruns eagerly shares her neuroscience journey, which began in the undergraduate neuroscience program at Boston University.

“Say hello to Dr. Lipton for me!” Bayruns says enthusiastically as she recollects memories from her college years. She graduated in 2015 with a dual degree in Human Physiology and Neuroscience. Bayruns became interested in research during her freshman year, when she was part of the Howard Hughes Medical Institute (HHMI) program, and continued from sophomore through senior year with funding from the Undergraduate Research Opportunities Program (UROP). During her years as an undergraduate student, Bayruns developed research-oriented skills through the laboratory sections offered in NE203, Principles of Neuroscience, with Dr. Shoai Hattori and other neuroscience courses. “Neuroscience labs in class help build foundational skills like micropipetting and microscopy, which are needed in research,” Bayruns said. “A lot of labs really appreciate the fact that we already have experience with these skills thanks to these classes.” Bayruns particularly enjoyed NE535, Translational Research in Alzheimer’s Disease, with Dr. Lucia Pastorino, because of its application of research in real-world scenarios.

Bayruns is currently finishing her third year of medical school and focusing on pediatric neurology research. At the symposium, she presents her most recent project: Intubation in Pediatric Patients Presenting with Seizures to the Emergency Department. This retrospective study uses BMC patient charts to compile data on ways to prevent pediatric status epilepticus and adverse outcomes in children who have seizures in the Emergency Department. One aspect of the research by Bayruns and her team examines unnecessary intubation rates in pediatric seizure patients. During intubation, a physician inserts a breathing tube into the patient, protecting their airway and providing artificial ventilation. It is thought that intubation rates may increase in the presence of less experienced physicians, but the data is still preliminary. Some children involved in these cases experience febrile seizures, which are caused by fevers and often do not require intubation, but receive the intervention nonetheless. As with most clinical research, more data must be extracted and analyzed before conclusive trends can be identified. This analysis will include many patient factors such as age, seizure duration, antiepileptic drugs used, and intubation, among others. Her work aims to optimize pediatric seizure preventative care as well as the management of children who have seizures in the Emergency Department.

Her undergraduate program, neuroscience labs, and research experiences shaped Bayruns into the person that she is today. “I felt a huge advantage compared to some of my classmates during my neuroscience units in medical school because my major covered the topic in such detail.” She also learned that perseverance is key when trying to enter the realm of research. “Just keep emailing,” Bayruns says when asked how to find a research opportunity. “You may get rejected a bunch of times, but eventually you’ll get a response from a lab you love.” She urges undergraduate students to get involved in research but says that it is okay if you do not start early. “Take your time and be sure to enjoy your undergraduate years.” She hopes to continue her neuroscience journey by completing a pediatric residency and treating children impacted by neurological disorders.

Bayruns still cherishes the memories and experiences that she made in college. “Dr. Lipton and the neuroscience department do a great job at preparing everyone for medical research and research-based jobs.” She also encourages undergraduate students to look into the field of neurology. “You never know,” she playfully jokes, “maybe one day I’ll mentor you as your attending!”

Check out the BUSM Calendar page for similar events to attend: https://www.bumc.bu.edu/busm/calendar/

Writers: Enzo Plaitano, Yoana Grigorova, and Yasmine Sami

Editors: Stephanie Gonzalez and Emme Enojado

Overactivity in Autism

Autism is a developmental disorder that often subjects an individual to social difficulties. Symptoms of autism include impaired social, communication, and behavioral skills. When an individual has these traits, it can be difficult for the person and the people around them to engage in social interactions. Individuals diagnosed with autism may lack the assistance that they need, due in part to the stigma that surrounds the disorder. Though the causes of the disorder are unknown, evidence suggests that certain genetic factors may play an important role. Autism has been linked to brain regions such as the cerebellum, cerebral cortex, limbic system, corpus callosum, basal ganglia, and brainstem. In other words, we may know the brain regions and symptoms associated with autism, but we do not fully understand the causes that lead to its development.

Recently, a Northwestern Medicine lab has begun research on the genetic factors linking autism to epilepsy. They have found that a mutation in a gene typically linked to autism, CNTNAP2 (often pronounced “catnap2”), causes seizures. With this mutation, the inhibitory neurons shrink and become unable to deliver messages effectively. In association with mutations in another gene, CASK, individuals may experience intellectual impairment as well. With this discovery, new therapies can be focused on these mutations. New studies can also be conducted to explore whether counteracting the effects of this mutation may also prevent epilepsy. Currently, the lab is screening molecules to identify effective drugs that might counteract these genetic abnormalities. Hopefully, with these new discoveries, the mysteries of autism can be uncovered and the stigma can be removed from the disorder as a whole.

Writer: Albert Wang

Editor: James Kunstle

Sources:

https://www.nature.com/articles/s41380-018-0027-3

https://www.psychologytoday.com/us/conditions/autism-spectrum-disorder

https://www.ncbi.nlm.nih.gov/pmc/articles/PMC1350917/

https://iancommunity.org/ssc/autism-stigma

STUDENT FEATURE: Erin Ferguson

When the hours of the day are absent of sunlight and captured by endless thoughts of the mind, two roommates play a game of imagination, turning back time and drawing up different concepts of who they would be had they gone to another school. But for senior Erin Ferguson, imagining herself anywhere but Boston University would be unthinkable.

“My favorite part about BU is the neuroscience community,” Ferguson said. “I cannot put into words how much it has changed my life.” As the president of the Mind and Brain Society (MBS), a club open to all undergraduate students interested in neuroscience, and Editor-in-Chief of The Nerve, a student-run journal featuring articles on neuroscience topics, Ferguson is no stranger to 2 Cummington Mall, home of the Undergraduate Program in Neuroscience. At BU, she found herself, and who she is now is nowhere near who she was as a freshman. “I was really shy,” Ferguson said. “I was very reserved, would never talk, and was voted biggest nerd in my high school. Now, I’m involved with leadership, something I would have never, ever, ever considered four years ago.”

Although she lived in England for the first three years of her life, she was raised in the San Francisco Bay Area. Due to the region’s specialty in science and technology, her school strongly encouraged students, especially girls, to pursue careers in STEM fields. Inspired by a family history of nervous system-related disorders, she came to BU knowing that she wanted to study neuroscience, and was academically satisfied her first semester. But Ferguson, 3,000 miles away from home and adjusting to college life, also found herself very overwhelmed. “I was in a rut,” Ferguson said. “I was used to being at the top of my class, and then I got here, and it was like whoa, this is really hard.”

She immersed herself in various student organizations, such as the campus orchestra and Global App Initiative, but none of them genuinely interested her. As soon as Ferguson traded her time for activities she was actually passionate about, such as MBS, she was a lot happier with her life. “My first year was basically a whole learning experience, in many different realms,” Ferguson said. “I had to learn how to study academically, how to take care of myself, and so on. It was just a trial period for college. As for the classes, they don’t get easier, but you get stronger.”

Up until sophomore year, Ferguson had medical school in mind. But after passing out at Student Health Services while they were taking her blood, she knew she needed to change paths. “The nurse literally laughed at me and was like ‘I don’t know how you would ever think that you could be a doctor if you can’t do needles or blood,’” she recalled. For a split second, in a moment of crisis, Ferguson planned on leaving the neuroscience major to transfer into the School of Education. At the time, she was a peer mentor for FY101, a seminar-style course for new students at BU, and a learning assistant for NE101, Introduction to Neuroscience, and figured that since she enjoyed the two she would become a science teacher. But when she sat down with her advisor and Assistant Director for the Undergraduate Program in Neuroscience, Shoai Hattori, he opened her eyes to a different path.

“When I told Shoai my plan, he was basically like ‘Erin, no. You’ve told me so much about how you care about medicine and health. Actually, I think that public health might be a good fit for you,’” Ferguson said. “At the time, I had absolutely no idea what public health was. So I just kind of smiled and nodded as he told me about the 4+1 MPH program, and I basically applied because Shoai told me to, and he turned out to be completely right about it.” The program, offered jointly by the College of Arts & Sciences (CAS) and the School of Public Health (SPH), allows highly motivated and curious students to earn their Bachelor of Arts and Master of Public Health in five years, rather than the typical five-and-a-half years. She was admitted into the program her junior year, began taking courses at SPH her senior year, and is concentrating in epidemiology and biostatistics. The program has helped her uncover and define passions she never knew she had, and she is now especially interested in where public health and neuroscience intersect.

Ferguson’s success isn’t limited to the classroom. During the academic years and summers, she expands her neuroscience knowledge and curiosity by working in various research laboratories. Her work has included analyzing data in a clinical research trial investigating the effects of chronic oxytocin on the negative symptoms of schizophrenia at the Bonding and Attunement in Neuropsychiatric Disorders (BAND) Lab, conducting experiments on drug tolerance to cannabinoids in mice at Penn State College of Medicine, and interning at the Center for Autism Research Excellence (CARE). Her two years at CARE led her to complete a directed study in Fall 2016, receive a Spring 2017 Student Stipend Award, and conduct an independent senior honors thesis on the association between lip-reading ability and neural correlates of face processing. “What I really like about research here is that they encourage you to do other things if you want to, allowing you to dip your toes into other things,” Ferguson said. “It was hard at first because I got started my sophomore year, and it was difficult for me to tune out all the people who did it their freshman year and were really competitive. You just have to tune out what other people are doing and do your own thing, and that was very hard for me to learn.”

Ferguson doesn’t know exactly why, but to her, neuroscience is a very different community. “Maybe it’s because it’s smaller, or the fact that we have great advisors who had always believed in me when I did not believe in myself, but it’s a whole different vibe than other majors that I’ve become acquainted with,” Ferguson said. “I also think it’s cool because people don’t know much about it. When you study physics, biology, and chemistry, it’s already laid out. But with neuroscience, we all have different interests in what hasn’t been discovered.”

After four years growing and changing alongside friends and faculty on Commonwealth Avenue and beyond, Ferguson has learned that at times, it’s okay to not be okay. “It’s okay to not have anything figured out and to be in this weird limbo period where you don’t know what’s going on at all- just because you don’t know what you’re doing does not mean your life is going to fall apart,” Ferguson said. “Just trust your gut, do what you love, and don’t be afraid to rely on other people. You can’t do this by yourself.”

Writer: Emme Enojado

Editor: Enzo Plaitano

Does language influence the way we think about time?

Time, as abstract as it is, is a crucial part of everyday life. Like other fundamental domains of experience, the idea of time is strongly associated with our brains. We use language all the time to express ourselves, but does language also shape how we see the world by influencing our concept of time?

According to studies conducted by Lera Boroditsky, a cognitive science professor at the University of California, San Diego, the concept of time does differ dramatically across languages. She found that people all over the world share a common trait despite speaking very differently: relying on space to organize time. For example, time naturally flows from left to right for English speakers, who read from left to right. However, Arabic speakers, who write from right to left, tend to organize stories from right to left. In Pormpuraaw, a remote Australian Aboriginal community, people don’t use the words “left” or “right” at all. Instead, they use “north,” “south,” “east,” and “west.” Consequently, Pormpuraawans represent time from east to west and think about time in unique ways: they would lay out a story from left to right when they are facing south, from right to left when facing north, toward the body when facing east, and away from the body when facing west.

A 2001 paper by Boroditsky shows that Mandarin speakers arrange time both horizontally, like English speakers, and vertically. In Mandarin, the words “up” and “down” are often used to describe earlier and later events, respectively. As a result, Mandarin speakers in the experiment were faster to verify that “March comes earlier than April” when given vertical primes than they were when given horizontal primes.

What if you are bilingual? According to Boroditsky, one possibility is that you have a different mind for each language and switch from one to the other. On the other hand, you could argue that your mind is fully integrated. The answer, in fact, lies somewhere in between. Bilinguals never “turn off” a language; the language they are speaking in just becomes more active.

Writer: Zijing Sang

Editor: Sophia Hon

Sources:

http://journals.sagepub.com/doi/pdf/10.1177/0956797610386621