Research

Safe, Secure and Robust AI-Enabled Systems

Artificial Intelligence (A.I.) is on the verge of ubiquity. A.I. may soon replace humans as the decision maker in many application domains, ranging from driving cars to making medical diagnoses. At the same time, A.I. systems are becoming increasingly complex, and it is daunting that we are deploying these systems without a solid understanding of their complexity and its implications: Why did the machine make this decision? Why not something else? When does it fail? How can we trust the machine if we do not fully understand it? The somewhat dismal status quo is that A.I. is often treated as a silver bullet or a magic black box. In this project, we will develop a combination of machine learning and computational proof techniques to (im)prove A.I. safety, explain A.I. decisions, guard against maliciously manipulated A.I. systems, enhance these systems’ robustness, ensure fairness, and ultimately make A.I. systems more trustworthy.

Students: Weichao Zhou, Jiameng Fan, Panagiota Kiourti, Feisi Fu

Relevant publications:

- REGLO: Provable Neural Network Repair for Global Robustness Properties

Feisi Fu, Zhilu Wang, Jiameng Fan, Yixuan Wang, Chao Huang, Qi Zhu, Xin Chen and Wenchao Li.

NeurIPS Workshop on Trustworthy and Socially Responsible Machine Learning (TSRML), 2022.

- POLAR: A Polynomial Arithmetic Framework for Verifying Neural-Network Controlled Systems

Chao Huang, Jiameng Fan, Xin Chen, Wenchao Li and Qi Zhu.

The 20th International Symposium on Automated Technology for Verification and Analysis (ATVA), 2022. [arXiv version]

- DRIBO: Robust Deep Reinforcement Learning via Multi-View Information Bottleneck

Jiameng Fan and Wenchao Li.

The 39th International Conference on Machine Learning (ICML), 2022. [arXiv version]

- Sound and Complete Neural Network Repair with Minimality and Locality Guarantees

Feisi Fu and Wenchao Li.

The 10th International Conference on Learning Representations (ICLR), 2022. [arXiv version]

- MISA: Online Defense of Trojaned Models using Misattributions

Panagiota Kiourti, Wenchao Li, Anirban Roy, Karan Sikka and Susmit Jha.

Annual Computer Security Applications Conference (ACSAC), December 2021.

- Adversarial Training and Provable Robustness: A Tale of Two Objectives

Jiameng Fan and Wenchao Li.

The 35th AAAI Conference on Artificial Intelligence (AAAI), February 2021.

- Runtime-Safety-Guided Policy Repair

Weichao Zhou, Ruihan Gao, BaekGyu Kim, Eunsuk Kang and Wenchao Li.

The 20th International Conference on Runtime Verification (RV), October 2020.

- Divide and Slide: Layer-Wise Refinement for Output Range Analysis of Deep Neural Networks

Chao Huang, Jiameng Fan, Xin Chen, Wenchao Li and Qi Zhu.

IEEE Transactions on Computer-Aided Design of Integrated Circuits and Systems (TCAD), Volume 39, Issue 11, pp. 3323–3335, November 2020.

- ReachNN*: A Tool for Reachability Analysis of Neural-Network Controlled Systems

Jiameng Fan, Chao Huang, Xin Chen, Wenchao Li and Qi Zhu.

The 18th International Symposium on Automated Technology for Verification and Analysis (ATVA), October 2020.

- TrojDRL: Evaluation of Backdoor Attacks on Deep Reinforcement Learning.

Panagiota Kiourti, Kacper Wardega, Susmit Jha and Wenchao Li.

ACM/EDAC/IEEE Design Automation Conference (DAC), July 2020.

- Towards Verification-Aware Knowledge Distillation for Neural-Network Controlled Systems.

Jiameng Fan, Chao Huang, Wenchao Li, Xin Chen and Qi Zhu.

International Conference on Computer Aided Design (ICCAD), November 2019.

- ReachNN: Reachability Analysis of Neural-Network Controlled Systems.

Chao Huang, Jiameng Fan, Wenchao Li, Xin Chen and Qi Zhu.

International Conference on Embedded Software (EMSOFT), October 2019.

- Safety-Guided Deep Reinforcement Learning via Online Gaussian Process Estimation.

Jiameng Fan and Wenchao Li.

International Conference on Learning Representations (ICLR), Workshop on Safe Machine Learning: Specification, Robustness, and Assurance, May 2019.

- Safety-Aware Apprenticeship Learning.

Weichao Zhou and Wenchao Li.

International Conference on Computer Aided Verification (CAV), July 2018.

Programmatic and Automata-based Reward Design

Reward design is a fundamental problem in reinforcement learning (RL). A misspecified or poorly designed reward can result in low sample efficiency and undesired behaviors. We propose to use programs and (symbolic) automata to specify rewards for RL problems. These formalisms allow human engineers to express sub-goals and complex task scenarios in a structured and interpretable way. We also propose new algorithms to infer the most appropriate reward assignments based on expert demonstrations.
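To illustrate the automaton-based formalism described above, here is a minimal sketch of a reward machine in Python: a finite automaton whose transitions fire on high-level events and emit scalar rewards, so that sub-goal ordering is encoded explicitly. The task ("get the key, then reach the door"), the state names, the event labels, and the reward values are all hypothetical. This plain, non-symbolic version is only an illustration of the general idea, not the group's method; symbolic reward machines and inferring reward values from expert demonstrations are outside its scope.

```python
from dataclasses import dataclass, field


@dataclass
class RewardMachine:
    """Minimal (non-symbolic) reward machine: an automaton over high-level
    events whose transitions emit scalar rewards. Illustrative sketch only."""
    initial: str
    # Transition table: (state, event) -> (next_state, reward).
    delta: dict[tuple[str, str], tuple[str, float]]
    terminal: set[str] = field(default_factory=set)

    def step(self, state: str, event: str) -> tuple[str, float]:
        # Events without an outgoing transition leave the state unchanged
        # and yield zero reward.
        return self.delta.get((state, event), (state, 0.0))

    def run(self, events: list[str]) -> tuple[str, float]:
        # Feed a sequence of events and accumulate the emitted rewards,
        # stopping once a terminal (task-complete) state is reached.
        state, total = self.initial, 0.0
        for event in events:
            if state in self.terminal:
                break
            state, reward = self.step(state, event)
            total += reward
        return state, total


# Hypothetical task: pick up a key (sub-goal), then reach the door (goal).
rm = RewardMachine(
    initial="u0",
    delta={
        ("u0", "got_key"): ("u1", 0.1),  # sub-goal reached: small reward
        ("u1", "at_door"): ("u2", 1.0),  # task completed
    },
    terminal={"u2"},
)

if __name__ == "__main__":
    print(rm.run(["at_door", "got_key", "at_door"]))
```

With this event trace the machine ends in state `u2` with a cumulative reward of 1.1: visiting the door before holding the key earns nothing, because the automaton only rewards the door event after the key sub-goal.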

Student: Weichao Zhou

Relevant publications:

- A Hierarchical Bayesian Approach to Inverse Reinforcement Learning with Symbolic Reward Machines

Weichao Zhou and Wenchao Li. [arXiv version]

The 39th International Conference on Machine Learning (ICML), 2022.

- Programmatic Reward Design by Example

Weichao Zhou and Wenchao Li.

The 36th AAAI Conference on Artificial Intelligence (AAAI), 2022.

Secure Multi-Robot Systems

Recent trends in industrial automation indicate an ever-increasing adoption of autonomous mobile robots in production systems (the Kiva system in Amazon’s warehouses is a prime, large-scale example). In parallel, large-scale hacking attacks against high-profile companies (Equifax, Target, Walmart, Yahoo, Adobe, etc.) have become more frequent and have resulted in serious security breaches. So far, these breaches have been limited to data, but it is easy to imagine that similar incidents in the near future could exploit the growing amount of automation to cause physical damage. It is therefore critical to devise strategies that preemptively address these threats. In this project, we propose a novel cyber-physical framework that uses planning and physical measurements to secure networks of advanced robots against malicious cyber attacks that can compromise individual robots and sabotage the group’s task. This is a collaborative project funded by NSF.

Student: Kacper Wardega

Relevant publications:

- Byzantine Resilience at Swarm Scale: A Decentralized Blocklist from Inter-robot Accusations

Kacper Wardega, Max von Hippel, Roberto Tron, Cristina Nita-Rotaru and Wenchao Li.

The 22nd International Conference on Autonomous Agents and Multiagent Systems (AAMAS), 2023.

- HoLA Robots: Mitigating Plan-Deviation Attacks in Multi-Robot Systems with Co-Observations and Horizon-Limiting Announcements

Kacper Wardega, Max von Hippel, Roberto Tron, Cristina Nita-Rotaru and Wenchao Li.

The 22nd International Conference on Autonomous Agents and Multiagent Systems (AAMAS), 2023.

- Resilience of Multi-Robot Systems to Physical Masquerade Attacks

Kacper Wardega, Roberto Tron and Wenchao Li.

IEEE Workshop on the Internet of Safe Things (SafeThings), May 2019.

- Masquerade Attack Detection Through Observation Planning for Multi-Robot Systems

Kacper Wardega, Roberto Tron and Wenchao Li.

International Conference on Autonomous Agents and Multiagent Systems (AAMAS), May 2019. (extended abstract)

Control and Coordination of Connected and Automated Vehicles

Connected and automated vehicles (CAVs) hold the promise of resolving long-lasting problems in transportation networks such as accidents, congestion, unsustainable energy consumption and environmental pollution. In this project, we aim to develop secure and robust control and coordination schemes for CAVs.

Student: H M Sabbir Ahmad

Relevant publications:

- Merging Control in Mixed Traffic with Safety Guarantees: A Safe Sequencing Policy with Optimal Motion Control

Ehsan Sabouni, H M Sabbir Ahmad, Wei Xiao, Christos G. Cassandras and Wenchao Li.

The 26th IEEE International Conference on Intelligent Transportation Systems (ITSC), 2023.

- Trust-Aware Resilient Control and Coordination of Connected and Automated Vehicles

H M Sabbir Ahmad, Ehsan Sabouni, Wei Xiao, Christos G. Cassandras and Wenchao Li.

The 26th IEEE International Conference on Intelligent Transportation Systems (ITSC), 2023.

- Optimal Control of Connected Automated Vehicles with Event-Triggered Control Barrier Functions: a Test Bed for Safe Optimal Merging

Ehsan Sabouni*, H M Sabbir Ahmad*, Wei Xiao, Christos G. Cassandras and Wenchao Li (* indicates joint first authors).

The 7th IEEE Conference on Control Technology and Applications (CCTA), 2023 (nominated for best paper).

- Evaluations of Cyber Attacks on Cooperative Control of Connected and Autonomous Vehicles at Bottleneck Points

H M Sabbir Ahmad, Ehsan Sabouni, Wei Xiao, Christos G. Cassandras and Wenchao Li.

ISOC Symposium on Vehicle Security and Privacy (VehicleSec), 2023.

Extensibility-Driven Design of Cyber-Physical Systems

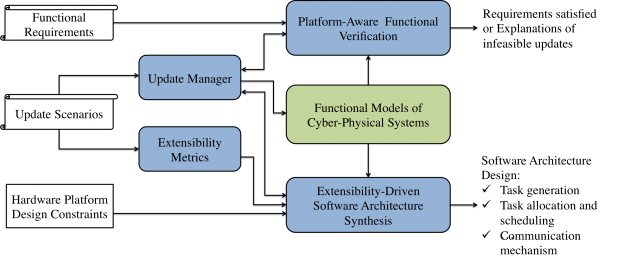

A longstanding problem in the design of cyber-physical systems (CPSs) is the difficulty of coping effectively with software and hardware evolution over the lifetime of a design or across multiple versions in the same product family. For example, the Boeing 777 still uses the discontinued AMD 2950 microprocessor running an MS-DOS file system for its Information Management System and Air Data Inertial Reference System. A fundamental reason for this is that small changes in resource usage can cause large and unexpected changes in timing and ultimately affect the functionality of the design. Engineers need to ensure that any change not only (1) meets the constraints of the embedded platform, such as schedulability and power constraints, but also (2) preserves the correctness of functional properties, many of which are affected by platform changes. Systems designed without future changes in mind often incur significant redesign and re-verification costs, as well as reduced availability and reliability. In this project, we treat extensibility as a first-class design objective and aim to develop an extensibility-driven design (EDD) flow in which different models and metrics of extensibility are considered jointly with other objectives at design time, supported by new synthesis and verification tools that can reason about design updates efficiently. This is a collaborative project funded by NSF.
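The claim that small changes in resource usage can cause large timing changes can be made concrete with classic response-time analysis for fixed-priority preemptive scheduling. This is a standard textbook technique, not the project's EDD flow. The sketch assumes independent periodic tasks given as hypothetical `(C, T)` pairs (worst-case execution time, period), deadlines equal to periods, and priorities given by list order (index 0 is highest). The task parameters are made up for illustration.

```python
from fractions import Fraction


def response_time(tasks: list[tuple[int, int]], i: int) -> int:
    """Worst-case response time of task i under fixed-priority preemptive
    scheduling, via the standard fixed-point iteration
        R = C_i + sum_{j < i} ceil(R / T_j) * C_j.
    tasks[j] = (C_j, T_j), ordered from highest to lowest priority."""
    c_i, _ = tasks[i]
    # The iteration is only guaranteed to converge if the utilization of
    # task i and all higher-priority tasks is at most 1.
    if sum(Fraction(c, t) for c, t in tasks[: i + 1]) > 1:
        raise ValueError("utilization > 1: response time is unbounded")
    r = c_i
    while True:
        # Interference: every higher-priority job released in [0, r)
        # preempts task i. -(-r // t) is an exact integer ceil(r / t).
        r_next = c_i + sum(-(-r // t_j) * c_j for c_j, t_j in tasks[:i])
        if r_next == r:
            return r  # fixed point reached
        r = r_next


if __name__ == "__main__":
    base = [(40, 100), (60, 140)]     # hypothetical task set
    updated = [(40, 100), (61, 140)]  # task 2's WCET grows by one unit
    for name, ts in [("base", base), ("updated", updated)]:
        r = response_time(ts, 1)
        deadline = ts[1][1]
        print(name, r, "meets" if r <= deadline else "misses", deadline)
```

In this hypothetical set, the lower-priority task finishes by time 100. Raising its WCET by a single unit, to 61 (under 2%), pushes its worst-case response time to 141, because its response window now crosses the second release of the higher-priority task; it then misses its deadline of 140 even though total utilization stays below 0.84.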

Student: Kacper Wardega

Relevant publications:

- Cross-Layer Adaptation with Safety-Assured Proactive Task Job Skipping

Zhilu Wang, Chao Huang, Hyoseung Kim, Wenchao Li and Qi Zhu.

ACM Transactions on Embedded Computing Systems (TECS), 2021.

- Know the Unknowns: Addressing Disturbances and Uncertainties in Autonomous Systems

Qi Zhu, Wenchao Li, Hyoseung Kim, Yecheng Xiang, Kacper Wardega, Zhilu Wang, Yixuan Wang, Hengyi Liang, Chao Huang, Jiameng Fan and Hyunjong Choi.

International Conference on Computer Aided Design (ICCAD), November 2020.

- Opportunistic Intermittent Control with Safety Guarantees for Autonomous Systems.

Chao Huang, Shichao Xu, Zhilu Wang, Shuyue Lan, Wenchao Li and Qi Zhu.

ACM/IEEE Design Automation Conference (DAC), July 2020.

- Formal Verification of Weakly-Hard Systems

Chao Huang, Wenchao Li and Qi Zhu.

International Conference on Hybrid Systems: Computation and Control (HSCC), April 2019.

- Exploring Weakly-hard Paradigm for Networked Systems

Chao Huang, Kacper Wardega, Wenchao Li and Qi Zhu.

Workshop on Design Automation for CPS and IoT (DESTION), April 2019.

- Extensibility-Driven Automotive In-Vehicle Architecture Design.

Qi Zhu, Hengyi Liang, Licong Zhang, Debayan Roy, Wenchao Li and Samarjit Chakraborty.

ACM/IEEE Design Automation Conference (DAC), June 2017.

- Delay-Aware Design, Analysis and Verification of Intelligent Intersection Management.

Bowen Zheng, Chung-Wei Lin, Hengyi Liang, Shinichi Shiraishi, Wenchao Li and Qi Zhu.

IEEE International Conference on Smart Computing (SMARTCOMP), May 2017.

- Design Automation of Cyber-Physical Systems: Challenges, Advances, and Opportunities.

Sanjit A. Seshia, Shiyan Hu, Wenchao Li and Qi Zhu.

IEEE Transactions on Computer-Aided Design of Integrated Circuits and Systems (TCAD), 2017.

Reliable Communication with Distributed, Low-Power Devices

Energy-harvesting wireless sensor nodes have found widespread adoption due to their low cost and small form factor. However, uncertainty in the available power supply introduces significant challenges in engineering communications between intermittently-powered nodes. We develop new algorithms to ensure reliable communication for networks built using these low-power devices.

Student: Kacper Wardega

Relevant publications:

- Opportunistic Communication with Latency Guarantees for Intermittently-Powered Devices

Kacper Wardega, Wenchao Li, Hyoseung Kim, Yawen Wu, Zhenge Jia and Jingtong Hu.

Design, Automation and Test in Europe Conference (DATE), 2022.

- Application-Aware Scheduling of Networked Applications over the Low-Power Wireless Bus.

Kacper Wardega and Wenchao Li.

Design, Automation and Test in Europe Conference (DATE), March 2020.