Research

Funded Research Projects

Neural bases of phonological working memory in developmental language disorders (NIH DC014045)

Phonological working memory is the mechanism by which language-relevant sounds are maintained in short-term memory. Phonological working memory is a core construct in cognitive, developmental, and clinical neuropsychology, as well as speech-language pathology, and plays an important role in language acquisition, vocabulary development, learning to read, and language comprehension. Phonological working memory deficits are characteristic of numerous developmental disorders of communication, including developmental language disorder (specific language impairment), developmental reading disorder (dyslexia), autism, and Down syndrome. Even in adulthood, phonological working memory deficits remain deleterious to the language abilities and psychosocial functioning of individuals with developmental language disorders. However, there has been no prior attempt to investigate the brain bases of phonological working memory using tasks analogous to clinically sensitive measures such as nonword repetition. Moreover, virtually nothing is known about how phonological working memory impairments in individuals with developmental language disorders arise from differences in neurophysiology or neuroanatomy. Correspondingly, the first goal of this project is to determine the normative neural systems recruited by clinical phonological working memory assessments in the brains of adults with typical language abilities (Aim 1). Critically, we will ascertain whether the brain bases of phonological working memory are related to neural systems underlying domain-specific linguistic processing or domain-general cognitive abilities. This will help reconcile two competing theories about how phonological working memory works in the brain, as well as inform the clinical interpretation of nonword repetition tests.
The second goal of this project is to examine phonological working memory in the brains of adults with persistent developmental language disorder, including how they differ from controls and how individual variability in neural responses is related to behavioral differences in language ability (Aim 2). Finally, we will collect pilot data from a smaller sample of children with developmental language disorder in support of future research aimed at understanding brain differences in children with developmental language disorders.

Cortical development and neuroanatomical anomalies in developmental dyslexia (NIH HD096098)

The long-term objectives of this project are to identify whether there are any structural differences that distinguish the brains of children or adults with developmental reading disorders (dyslexia) and how these differences correspond to behavioral heterogeneity in reading impairment across development. Dyslexia is a neurodevelopmental disorder that specifically and often profoundly impairs the ability to acquire accurate and fluent reading skills. However, decades of research have failed to converge on one or more signature differences in the brains of children or adults with dyslexia that reliably, sensitively, or specifically distinguish impaired versus typical reading development. Drawing from numerous independent datasets collected by the investigators’ labs over the past decade, this project will assemble and analyze the largest sample of structural brain scans ever studied from children, adolescents, and adults with developmental dyslexia and typical reading development. This combined dataset includes over 1200 structural brain scans obtained from individuals between the ages of 4 and 40 years old, for whom extensive reading and cognitive assessments were performed, and approximately 50% of whom have dyslexia. This dataset will be used to address two aims: In Aim 1, this study will explore whether structural brain features differ between individuals with and without dyslexia. Features to be studied include (1) macroanatomical morphology of the temporal lobe, such as patterns of Heschl’s gyrus reduplication or planum temporale lateralization; (2) regional morphometric differences in gray or white matter volumes or their lateralization; and (3) geometric differences in cortical thickness, curvature, and surface area. Variation in these features will also be analyzed in the context of component reading skills, such as phonological awareness and rapid naming. 
In Aim 2, this study will use the processed dataset to build a model of the developmental trajectory of brain maturation in dyslexia compared to typical reading. This model will be used to evaluate current theories about developmental versus endogenous factors affecting reading impairment. Identifying the neuroanatomical substrate or substrates that are related to reading impairment, and how these change across development, is a key step in understanding the biological basis of dyslexia. A stronger scientific understanding of the causes and prognosis of developmental reading impairments will build a foundation upon which diagnoses can be obtained both earlier and with greater specificity, and upon which behavioral interventions can be deployed more efficaciously, ultimately to ensure that all children have the chance to achieve their full potential for healthy and productive lives.

Major Research Areas

Auditory Plasticity and Auditory Expertise

We want to understand brain plasticity. How does the brain change as a result of its experiences? The brain can change how it processes information very quickly. We want to understand how these processes work and how they support speech perception, reading, and language comprehension. Can we harness the power of brain plasticity to help people who struggle with reading development or language learning? Can we understand the difference between successful and less-successful learners through the structure and function of their brains?

Phonetic Variability in Speech Perception

We want to understand the cognitive consequences of phonetic variability in speech perception. When we listen to speech, we almost never hear the exact same stimulus more than once. Myriad factors combine to render the speech signal both immensely complex and immensely variable. Idiosyncratic anatomical, physiological, and cultural differences render the phonetic realization of speech different from person to person. The same words spoken by a single individual will differ immensely in their phonetics based on context, environment, audience, etc. All of this variability presents a challenge for the neural systems processing speech: How do we recognize consistent messages in the presence of varying phonetics? Interestingly, some research also suggests that experiencing all this variability is a crucial part of language learning. We want to understand how and when variability sometimes facilitates and sometimes complicates speech perception.

Language and Reading Development and Disorders

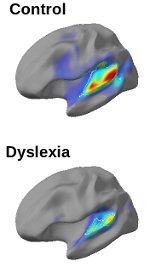

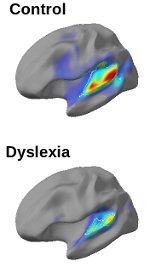

We want to understand what changes occur in the brain during the acquisition of language and literacy, and how these processes differ for individuals who struggle to develop typical reading or language abilities. Between 5% and 15% of children struggle to develop typical reading abilities, the hallmark of a disorder known as developmental dyslexia. Scientific research, including work from our laboratory, has strongly suggested that the underlying difficulty in dyslexia stems from a difference in how these individuals’ brains represent and process the sounds of language. Our research attempts to identify what exactly the source of this difference is. Is there something distinct about the processes underlying either rapid or long-term auditory plasticity in the brains of individuals with dyslexia? If the source of reading difficulty is in representing and processing the sounds of language, why do individuals with dyslexia struggle to learn to read, but not struggle to learn to understand speech? Using advanced brain imaging and neuromodulation techniques, can we develop quantitative biomarkers for the diagnosis or remediation of developmental communication disorders like dyslexia?

Voice Recognition and Talker Identification

We want to understand how people recognize talkers by the sound of their voice. Talker identification (voice recognition) is an important social auditory skill. When people are talking in a group, how do they keep track of who said what? How can you know who called your name before you see them? When you answer the phone, how can you tell who is on the line? Our research has shown that voice recognition interacts with language processing in interesting and complicated ways. People are more accurate at recognizing voices when they can understand the language being spoken, and have trouble recognizing voices when they can’t understand what is being said. Intriguingly, individuals with dyslexia don’t appear to experience this language familiarity effect in talker identification: Unlike their peers, individuals with dyslexia don’t get any benefit in voice recognition from understanding what is being said. Why is voice recognition impaired in dyslexia? What are the processes by which people learn, remember, and recognize voices? Is there anything special about familiar voices, like those of our friends, family, or famous actors? In what ways does voice recognition depend on language processing, and in what ways is it independent? Are there parts of the brain that care about voices without caring about speech?
